ABSTRACT

Many people with behavioral health disorders receive suboptimal care and suffer poor health outcomes, including premature death. States, health plans, providers, and other stakeholders need a strong set of measures targeting this population to improve the quality of their care. In this project, we developed and tested measures reported by health plans that focus on screening and monitoring of care for comorbid conditions among people with serious mental illnesses (SMI) and/or alcohol or other drug dependence (AOD). For the SMI population, these measures focused on assessing comprehensive diabetes care; controlling high blood pressure; and screening for body mass index (BMI), high blood pressure, tobacco use, and unhealthy alcohol use. For the AOD population, the measures focused on screening for high blood pressure, depression, and tobacco use. We also developed a measure for health plan reporting to assess the extent to which people discharged from the emergency department (ED) for mental disorders or AOD receive timely follow-up care. In March 2015, the National Quality Forum (NQF) endorsed 11 measures from this project.

DISCLAIMER: The opinions and views expressed in this report are those of the authors. They do not necessarily reflect the views of the Department of Health and Human Services, the contractor or any other funding organization.

TABLE OF CONTENTS

I. PROJECT RATIONALE

- A. Project Purpose

- B. Report Roadmap

II. SELECTION OF MEASURE CONCEPTS

- A. Scan of Measures

- B. Focus Groups

- C. Evidence Review

- D. Technical Expert Panel Meeting

- E. Final Measure Concepts

III. SPECIFICATION OF MEASURES

- A. Specification of Screening and Monitoring Measures

- B. Specification of Follow-up after Emergency Department Measure

IV. APPROACH TO MEASURE TESTING

- A. Testing Questions

- B. Quantitative Testing of Screening and Monitoring Measures

- C. Characteristics of Health Plans that Participated in Testing

- D. Health Plan Data Collection

- E. Approach to Quantitative Testing of Follow-up after Emergency Department Measure

- F. Approach to Gathering Stakeholder Feedback for All Measures

- G. Data Security

V. TESTING RESULTS FOR SCREENING AND MONITORING MEASURES

- A. Characteristics of Denominator Population Selected for Testing of Screening and Monitoring Measures

- B. Number of Patients Included in Denominator for Screening and Monitoring Measures

- C. Service Utilization among Denominator Populations for Screening and Monitoring Measures

- D. Screening and Monitoring Measure Testing Results

- E. Variation in Screening Measure Rate by Medical and Behavioral Health Data Sources

- F. Inter-rater Agreement for Screening and Monitoring Measures

- G. Conclusion from Testing of Screening and Monitoring Measures: Revisions to Measure Specification and National Quality Forum Submission

VI. TESTING RESULTS FOR FOLLOW-UP AFTER EMERGENCY DEPARTMENT MEASURE

- A. Characteristics of the Denominator Populations

- B. Measure Exclusions

- C. State Variation in Follow-up after Emergency Department Performance using Different Numerator Options

- D. Follow-up after Emergency Department by Beneficiary Characteristics

- E. Relationship Between Follow-up after Emergency Department and Inpatient Stays

- F. Reliability of Follow-up after Emergency Department Measure

- G. Stakeholder Feedback on Follow-up after Emergency Department Measure

- H. Final Outcome of Testing: Revisions to Measure Specification and National Quality Forum Submission

VII. OTHER LESSONS

APPENDICES

- APPENDIX A: Technical Expert Panel Members

- APPENDIX B: Measure Specifications

LIST OF FIGURES

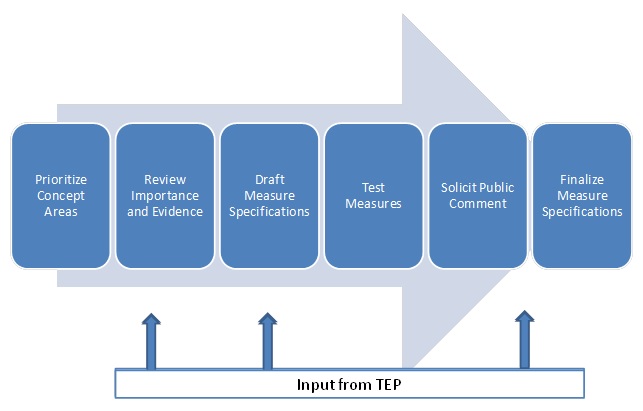

- FIGURE I.1: Measure Development Process

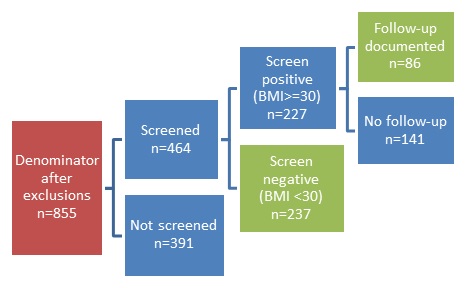

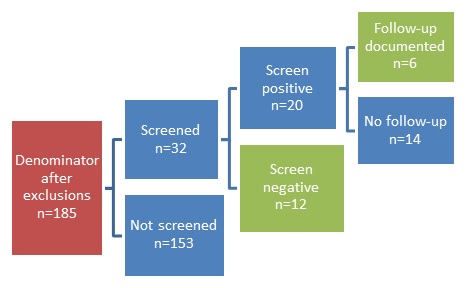

- FIGURE V.1: BMI Screening and Follow-up for Patients with SMI

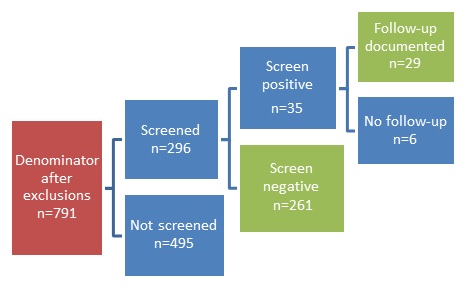

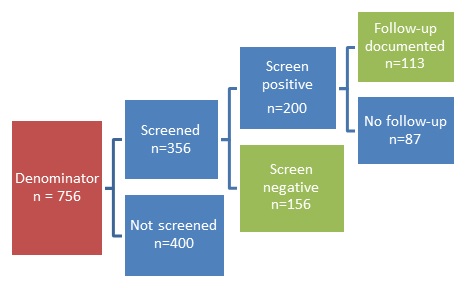

- FIGURE V.2: Alcohol Screening and Follow-up for People with SMI

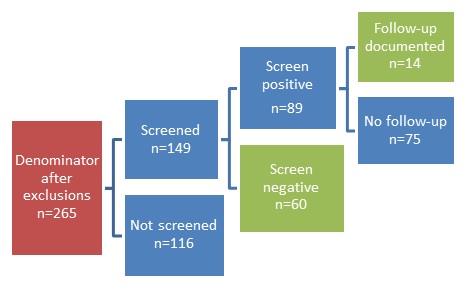

- FIGURE V.3: High Blood Pressure Screening for People with SMI

- FIGURE V.4: High Blood Pressure Screening for People with AOD

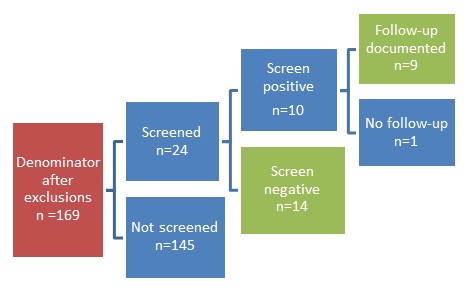

- FIGURE V.5: Tobacco Use Screening and Follow-up for People with SMI

- FIGURE V.6: Tobacco Use Screening and Follow-up for People with AOD

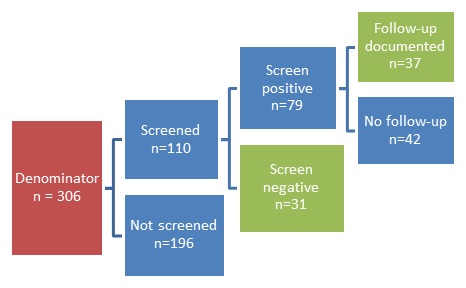

- FIGURE V.7: Clinical Depression Screening and Follow-up for People with AOD

LIST OF TABLES

- TABLE ES.1: Measures Tested, Performance, and Submission to NQF

- TABLE II.1: Data Sources and Key Search Terms for the Environmental Scan

- TABLE II.2: Ambulatory Measure Concepts Selected for Specification and Testing

- TABLE III.1: Measures Tested for SMI and/or AOD Population

- TABLE IV.1: Quantitative Testing and Analysis of Screening and Monitoring Measures

- TABLE IV.2: Characteristics of Health Plans that Participated in Pilot Test

- TABLE V.1: Characteristics of Denominator Populations Selected for Screening and Monitoring Measures by Health Plan

- TABLE V.2: Denominator Size for Screening and Monitoring Measures before Exclusions by Health Plan

- TABLE V.3a: Health Care Utilization in 2012 for Patients in SMI Denominator of Screening and Monitoring Measures

- TABLE V.3b: Health Care Utilization in 2012 for Patients in AOD Denominator of Screening and Monitoring Measures

- TABLE V.4: BMI Screening and Follow-up for People with SMI by Health Plan

- TABLE V.5: Alcohol Screening and Follow-up for People with SMI by Health Plan

- TABLE V.6: High Blood Pressure Screening and Follow-up for People with SMI or AOD by Health Plan

- TABLE V.7: Tobacco Use Screening and Follow-up for People with SMI or AOD by Health Plan

- TABLE V.8: Clinical Depression Screening and Follow-up for People with AOD by Health Plan

- TABLE V.9: Overall Measure Rate among People with SMI by Patient Characteristics

- TABLE V.10: Overall Measure Rate among Patients with AOD by Patient Characteristics

- TABLE V.11: Comparison of Health Plan Screening Measure Results with ACOs that Report Through PQRS

- TABLE V.12: Comprehensive Diabetes Care for People with SMI by Health Plan

- TABLE V.13: Controlling High Blood Pressure for People with SMI by Health Plan

- TABLE V.14: Comprehensive Diabetes Care Indicators and Controlling Blood Pressure Rates by Patient Characteristics

- TABLE V.15: Service Utilization among People with SMI Who did not Meet Measure Requirements

- TABLE V.16: Service Utilization among People with AOD Who did not Meet Measure Requirements

- TABLE V.17: Overall Measure Rate by Medical or Behavioral Health Data Sources

- TABLE V.18: Inter-rater Reliability for Screening and Monitoring Measures

- TABLE V.19: Summary of Testing Results and Stakeholder Feedback for Screening and Monitoring Measures

- TABLE VI.1: Characteristics of Beneficiaries in the Follow-up after Emergency Department Measure Denominator after Exclusions

- TABLE VI.2: Proportion of Eligible Discharges Excluded from the Follow-up after Emergency Department Measure

- TABLE VI.3: Follow-up after Emergency Department Rates after Applying Denominator Exclusions

- TABLE VI.4: Number and Percent of Follow-up after Emergency Department Denominator Remaining after Exclusions, by State

- TABLE VI.5: Performance of Follow-up for MH ED Measure by Numerator Options

- TABLE VI.6: Performance of Follow-up for AOD ED Measure by Numerator Options

- TABLE VI.7: Follow-up after Emergency Department Performance Rates by State

- TABLE VI.8: 7-day and 30-day Follow-up Rates after Mental Health Discharge from the Emergency Department, by Patient Characteristics

- TABLE VI.9: 7-day and 30-day Follow-up after AOD Discharge from the Emergency Department, by Patient Characteristics

- TABLE VI.10: Relationship Between Follow-up after Emergency Department Measure Performance and Inpatient Stays

- TABLE VI.11: Summary of Testing and Stakeholder Feedback for Follow-up after Emergency Department Measure

- TABLE A.1: Technical Expert Panel Members

- TABLE B.1: Specifications of Parent Measures and New Measures Submitted to NQF

- TABLE B.2: Specification of Parent Measure and Measures Tested but not Submitted to NQF

ACKNOWLEDGMENTS

Mathematica Policy Research and the National Committee for Quality Assurance (NCQA) prepared this report under contract to the Office of the Assistant Secretary for Planning and Evaluation (ASPE), U.S. Department of Health and Human Services (HHS) (HHSP23320100019WI/ HHSP23337001T). Funding support was provided by the HHS Substance Abuse and Mental Health Services Administration (SAMHSA). The authors appreciate the guidance of Kirsten Beronio, Joel Dubenitz, Richard Frank, D.E.B. Potter (ASPE), and Lisa Patton (SAMHSA). Jeremy Biggs, Jung Kim, Sean Kirk, and Jessica Nysenbaum (Mathematica) contributed to the data collection and analysis. Melissa Azur (Mathematica) and Mary Barton (NCQA) provided feedback on this report and guidance throughout the project.

The views and opinions expressed here are those of the authors and do not necessarily reflect the views, opinions, or policies of ASPE, SAMHSA, HHS, or the technical expert panel. The authors are solely responsible for any errors.

ABSTRACT

Summary: Many people with behavioral health disorders suffer comparatively poorer health outcomes, including premature death. Quality measures that target this population, when used by states, health plans, providers, and other stakeholders, may improve the quality of their care. In this project, we developed and tested measures reported by health plans that focus on screening and monitoring of care for co-morbid conditions among people with serious mental illness (SMI) and/or alcohol or other drug dependence (AOD). For the SMI population, these measures focused on assessing comprehensive diabetes care; controlling high blood pressure; and screening for body mass index (BMI), high blood pressure, tobacco use, and unhealthy alcohol use. For the AOD population, the measures focused on screening for high blood pressure, depression, and tobacco use. We also developed a measure for health plan reporting to assess the extent to which people discharged from the emergency department for mental disorders or AOD receive timely follow-up care. In March 2015, the National Quality Forum (NQF) endorsed 11 measures from this project.

Major Findings: Measures that assessed diabetes care, high blood pressure control, BMI screening, and tobacco screening among the SMI population, as well as tobacco screening among the AOD population, demonstrated strong reliability and meaningful variation across health plans, suggesting they are suitable to differentiate the quality of care. The alcohol screening measure for the SMI population showed variation across health plans but received less support from stakeholders. The blood pressure and depression screening measures performed poorly and stakeholder support was divided. The follow-up after emergency department measure showed wide variation across state Medicaid programs and received strong stakeholder support. We identified several challenges for developing and using measures focused on behavioral health populations, including a lack of evidence to support some measure concepts and difficulty accessing data to calculate measures. Multistakeholder engagement throughout the project was critical to developing meaningful measures.

Purpose: We focused on developing measures for health plan reporting that address: (1) co-morbid conditions among SMI and AOD populations; and (2) follow-up care after discharge from the emergency department for a mental disorder or AOD. We tested the measures using quantitative and qualitative methods to assess attributes consistent with NQF endorsement criteria: importance, feasibility, usability, and scientific acceptability.

Methods: We reviewed existing measures and gathered input from consumers, providers, health plans, state agencies, and performance measurement experts to identify opportunities for new measures. After reviewing the evidence to support measure concepts, we specified and tested measures that addressed priority conditions and populations. We tested the follow-up after emergency department measure using Medicaid claims data. All other measures were piloted at three diverse health plans. Quantitative testing of all measures involved calculating performance rates to examine variation across health plans or states, along with differences in performance between subpopulations. We examined the reliability of the measures using various psychometric tests. Finally, we solicited public comments and held focus groups with a range of stakeholders to get input on the measure specifications and to understand whether the measures yield findings that can be used to inform quality improvement efforts. We also sought their perspectives on practical barriers to implementing the measures. A technical expert panel provided guidance throughout the project. After the testing, we refined the measure specifications and submitted 11 measures to NQF for endorsement.

ACRONYMS

The following acronyms are mentioned in this report and/or appendices.

| Acronym | Definition |
|---|---|
| ACA | Affordable Care Act |
| ACO | Accountable Care Organization |
| AHRQ | HHS Agency for Healthcare Research and Quality |
| AMA-PCPI | American Medical Association Physician Consortium for Performance Improvement |
| AOD | Alcohol or Other Drug Dependence |
| AOD ED | Alcohol or Other Drug Dependence Emergency Department |
| ASPE | HHS Office of the Assistant Secretary for Planning and Evaluation |
| BH | Behavioral Health Record |
| BMI | Body Mass Index |
| BP | Blood Pressure |
| CDC | HHS Centers for Disease Control and Prevention |
| CHIPRA | Children's Health Insurance Program Reauthorization Act |
| CMS | HHS Centers for Medicare & Medicaid Services |
| CPT | Current Procedural Terminology |
| CQM | Clinical Quality Measures |
| D-SNP | Dual Special Needs Plan |
| EHR | Electronic Health Record |
| FFS | Fee-For-Service |
| FU ED | Follow-up Emergency Department |
| G-code | Healthcare Common Procedure Coding System (HCPCS) Level II G-code |
| HbA1c | Glycated Hemoglobin |
| HEDIS | Healthcare Effectiveness Data and Information Set |
| HHS | U.S. Department of Health and Human Services |
| HITECH | Health Information Technology for Economic and Clinical Health Act |
| HMO | Health Maintenance Organization |
| HRSA | HHS Health Resources and Services Administration |
| IET | Initiation and Engagement of Alcohol and Other Drug Dependence Treatment |
| IOM | Institute of Medicine |
| IPFQR | Inpatient Psychiatric Facility Quality Reporting |
| IQR | Interquartile Range |
| LDL | Low-Density Lipoprotein |
| MAX | Medicaid Analytic eXtract |
| MBHO | Managed Behavioral Health Organization |
| MH ED | Mental Health Emergency Department |
| MH/SA | Mental Health and Substance Abuse |
| MU | Meaningful Use |
| NCQA | National Committee for Quality Assurance |
| NQF | National Quality Forum |
| ONC | HHS Office of the National Coordinator for Health Information Technology |
| PQRS | Physician Quality Reporting System |
| SAMHSA | Substance Abuse and Mental Health Services Administration |
| SD | Standard Deviation |
| SMI | Serious Mental Illness |
| TEP | Technical Expert Panel |
| USPSTF | U.S. Preventive Services Task Force |
| VA | U.S. Department of Veterans Affairs |

EXECUTIVE SUMMARY

Despite the prevalence of mental health and substance use disorders, and their toll on the health care system, national advisory groups have noted the dearth of behavioral health quality measures ready for implementation (AHRQ 2010). A recent National Quality Forum (NQF) committee identified several gaps in behavioral health quality measures that can be used to hold state agencies, health plans, providers, and other entities accountable for care. Specifically, the committee noted the need for measures that focus on transitions in care and that address co-morbid physical health conditions among individuals with serious behavioral health conditions (NQF 2012).

With the establishment of the National Behavioral Health Quality Framework, the U.S. Department of Health and Human Services (HHS) Substance Abuse and Mental Health Services Administration (SAMHSA) has articulated priorities for improving the quality of behavioral health care consistent with the National Strategy for Quality Improvement in Health Care. The framework defines goals for all aspects of care, including preventing behavioral health problems, implementing and improving treatment, and promoting and supporting recovery. It also targets populations from young children to the elderly and includes specialty behavioral health treatment settings as well as broader health care provider and community-based efforts. Within this framework, new measures are needed to monitor the quality of care and inform quality improvement efforts.

Purpose of Project

In September 2011, the HHS Office of the Assistant Secretary for Planning and Evaluation, with support from SAMHSA, contracted with Mathematica Policy Research and the National Committee for Quality Assurance to develop behavioral health quality measures. This three-year project began by reviewing existing measures and gathering input from consumers, providers, health plans, state agencies, and performance measurement experts to identify opportunities for new measures. We then specified and tested the measures listed in Table ES.1. Twelve of the measures focus on screening or monitoring of co-morbid conditions that are highly prevalent among individuals with serious mental illness (SMI) and/or alcohol or other drug dependence (AOD). These conditions include diabetes, hypertension, and alcohol use for the SMI population, and depression and tobacco use for the AOD population. In addition, we developed a measure to assess whether individuals who are discharged from the emergency department for mental health disorders or AOD receive timely follow-up care in the community. These measures were specified for health plan reporting, as such plans have an opportunity to ensure that individuals are connected with community providers and receive preventive screening and monitoring of chronic conditions.

TABLE ES.1. Measures Tested, Performance, and Submission to NQF

| Measure | Variation in Measure Performance Across Health Plans or States (% of patients who met measure requirement)¹ | Reliability² | Received NQF Endorsement |
|---|---|---|---|
| BMI Screening and Follow-up for People with SMI | 11.6 - 55.0 | 0.84 | X |
| Alcohol Screening and Follow-up for People with SMI | 1.5 - 58.4 | 0.79 | X |
| High Blood Pressure Screening and Follow-up for People with SMI or AOD | 12.8 - 38.0 for SMI population; 8.2 - 12.1 for AOD population | 0.86 | |
| Tobacco Use Screening and Follow-Up for People with SMI or AOD | 9.8 - 64.1 for SMI population; 8.8 - 30.4 for AOD population | 0.74 | X |
| Clinical Depression Screening and Follow-up for People with AOD | 1.7 - 20.6 | 0.77 | |
| Comprehensive Diabetes Care for People with SMI³ | | | |
| HbA1c Testing | 15.7 - 65.4 | 0.65 | X |
| HbA1c Control (<8.0%) | 6.0 - 48.8 | 0.51 | X |
| HbA1c Poor Control (>9.0%) | 44.9 - 92.8 | 0.49 | X |
| Eye Exam | 1.2 - 27.5 | 0.74 | X |
| Medical Attention for Nephropathy | 6.0 - 61.4 | 0.76 | X |
| Blood Pressure Control | 12.0 - 61.4 | 0.75 | X |
| Controlling High Blood Pressure for People with SMI | 12.5 - 60.3 | 0.88 | X |
| Follow-Up After Emergency Department Use for Mental Health Conditions or AOD⁴ | 53.8 - 92.4 for mental health follow-up within 30 days; 30.8 - 91.5 for AOD follow-up within 30 days | 0.98 | X |

To align reporting for the SMI and AOD population with the general population, the measure specifications developed in this project were based on existing measures that health plans report as part of Healthcare Effectiveness Data and Information Set (HEDIS®) or that providers report through the Physician Quality Reporting System (PQRS). Throughout the project, we sought input from a technical expert panel (TEP) and the PQRS measure developers and stewards to ensure that our specifications adhered to the original intent of the measure and to gather their feedback on our testing results.

Our testing of the measures was designed to gather information about their importance, feasibility, usability, and scientific acceptability, in accordance with NQF endorsement standards. We tested the follow-up after emergency department measure using Medicaid claims data. All the other measures (which use both administrative/claims data and data abstracted from patient records) were piloted at three geographically diverse health plans: two Medicaid health plans and one Dual Special Needs Plan for individuals enrolled in both Medicaid and Medicare. Our quantitative testing involved calculating measure performance rates to examine variation across health plans or states, and differences in performance among subpopulations. We also examined the reliability of the measures using different psychometric tests depending on the data source (inter-rater agreement for measures that used data from patient records and beta-binomial testing for the follow-up after emergency department measure). Finally, we solicited public comment and conducted focus groups with a range of stakeholders to get input on the measure specifications and understand whether the measures yield findings that can be used to inform quality improvement efforts. We also sought their perspectives on practical barriers to implementing the measures. At the conclusion of the testing, we refined the measure specifications and submitted 11 measures to NQF in July 2014 (Table ES.1). After NQF review, all 11 measures were endorsed on March 6, 2015.
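
As a rough illustration of the quantitative testing described above, the following Python sketch computes a performance rate per health plan, the range of rates across plans, and two simple inter-rater agreement statistics for record abstraction. It is a minimal sketch under stated assumptions, not the project's actual analysis code: the plan names, counts, and abstraction results are invented, and the use of Cohen's kappa is an assumption, since the report text here says only that inter-rater agreement was examined.

```python
from collections import Counter


def measure_rate(numerator, denominator):
    """Percent of denominator patients who met the measure requirement."""
    if denominator <= 0:
        raise ValueError("denominator must be positive")
    return 100.0 * numerator / denominator


def percent_agreement(rater_a, rater_b):
    """Share of records on which two abstractors recorded the same result."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("ratings must be non-empty and of equal length")
    matches = sum(a == b for a, b in zip(rater_a, rater_b))
    return matches / len(rater_a)


def cohens_kappa(rater_a, rater_b):
    """Agreement corrected for chance (Cohen's kappa); an assumed statistic."""
    n = len(rater_a)
    observed = percent_agreement(rater_a, rater_b)
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: product of each rater's marginal share, per category.
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0
    return (observed - expected) / (1.0 - expected)


# Invented counts: (patients meeting the measure, patients in the denominator).
plan_counts = {"Plan A": (30, 200), "Plan B": (90, 200), "Plan C": (55, 250)}
plan_rates = {plan: measure_rate(n, d) for plan, (n, d) in plan_counts.items()}
rate_range = (min(plan_rates.values()), max(plan_rates.values()))

# Invented abstraction results for ten records (1 = met measure, 0 = did not).
abstractor_1 = [1, 1, 0, 0, 1, 0, 1, 1, 0, 0]
abstractor_2 = [1, 1, 0, 0, 1, 0, 1, 0, 0, 1]

print(plan_rates)
print(rate_range)
print(percent_agreement(abstractor_1, abstractor_2))
print(cohens_kappa(abstractor_1, abstractor_2))
```

The range across plans corresponds to the "variation in measure performance" column of Table ES.1, although the numbers in this sketch are illustrative only.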

Measure Testing Results

Based on our testing, the measures with the strongest results and stakeholder support for the SMI population included those focused on comprehensive diabetes care, controlling high blood pressure, body mass index (BMI) screening, and tobacco screening. The tobacco screening measure also had strong performance and stakeholder support when applied to the AOD population. As summarized in Table ES.1, all these measures demonstrated strong reliability and meaningful variation across health plans, suggesting that they are suitable to differentiate the quality of care. For example, the proportion of individuals with SMI who met the requirement of the BMI measure (that is, they received BMI screening and follow-up care, if obese) ranged from 11.6 percent to 55.0 percent across health plans. There was a similar pattern for the other measures. In addition, the health plans, TEP, and other stakeholders reported that scores on these measures accurately reflected their expectations given the challenges associated with delivering care to these populations. When compared with either the overall 2012 Medicaid HEDIS rates or the rates of similar provider-level measures reported through PQRS, all these measures demonstrated much lower average rates in our testing -- suggesting disparities in care for the SMI and/or AOD population relative to the general population.

The alcohol screening measure demonstrated variation across health plans, but received less support from stakeholders and the TEP because an unusually low proportion of individuals with SMI were identified as unhealthy alcohol users. Nonetheless, they also perceived that this measure was important for health plans given the prevalence of alcohol use among the SMI population. Our analysis concluded that the measure had value for health plans and was suitable for submission to NQF.

Performance of the blood pressure screening measure was not as strong as the other measures. Health plans found that the measure specification (based on the PQRS measure) was overly complicated to implement. The TEP and other stakeholders echoed such concerns and perceived that screening for new cases of hypertension was less of a clinical and measurement priority than blood pressure control. There was little variation in the performance of the blood pressure screening measure for individuals with AOD, and stakeholders were not supportive of the measure for several reasons, including the lack of strong evidence to suggest that individuals with AOD are at greater risk for hypertension. Based on our analysis of the quantitative results and stakeholder feedback, we did not submit this measure to NQF.

Although there is evidence that depression is highly prevalent among people with AOD, and the TEP and stakeholders were generally supportive of the need for depression screening among this population, the depression screening measure did not yield information useful to health plans. Because the measure is intended to identify new cases of depression, individuals with a diagnosis of depression within the past year or who are already receiving depression treatment are excluded from the denominator of the measure. In our testing, nearly all individuals with depression had already been identified in the past year, and therefore, the measure resulted in a very low rate of identification and had limited value to health plans. Our analysis, based on only three health plans, suggested that a measure to monitor the quality of depression treatment among people with AOD may have more value for health plans than a measure designed to identify new cases of depression. Thus, we did not submit this measure for NQF endorsement.
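
The effect of the denominator exclusions described above can be sketched in a few lines of Python. This is a simplified illustration, not the measure specification: the `Member` fields, the sample records, and the single `screened_with_follow_up` flag standing in for the numerator logic are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Member:
    """Hypothetical record for one health plan member with AOD."""
    member_id: str
    depression_dx_prior_year: bool   # depression diagnosis in the past year
    in_depression_treatment: bool    # already receiving depression treatment
    screened_with_follow_up: bool    # simplified stand-in for the numerator


def depression_screening_summary(aod_members):
    """Apply the exclusions, then compute the screening rate on who remains."""
    denominator = [
        m for m in aod_members
        if not (m.depression_dx_prior_year or m.in_depression_treatment)
    ]
    numerator = [m for m in denominator if m.screened_with_follow_up]
    rate = 100.0 * len(numerator) / len(denominator) if denominator else None
    return {
        "aod_members": len(aod_members),
        "excluded": len(aod_members) - len(denominator),
        "denominator": len(denominator),
        "numerator": len(numerator),
        "rate_percent": rate,
    }


# Invented sample: half of the members are excluded before the rate is computed.
members = [
    Member("m1", True, True, True),
    Member("m2", True, False, True),
    Member("m3", False, True, False),
    Member("m4", True, True, False),
    Member("m5", False, False, True),
    Member("m6", False, False, False),
    Member("m7", False, False, False),
    Member("m8", False, False, False),
]
summary = depression_screening_summary(members)
print(summary)
```

In this invented sample, members whose depression is already known never reach the denominator, so the measure reflects only screening among the remaining members.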

Finally, when our follow-up after emergency department measure was tested using Medicaid claims data, it adequately distinguished performance between states and demonstrated very strong reliability. The proportion of individuals who received follow-up care after mental health and AOD emergency department visits varied widely across states. In addition, this measure received strong support from the TEP and stakeholders. Our analysis suggested that this is a useful measure to monitor follow-up care, and we therefore submitted it to NQF.
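
The reliability of this measure was assessed with beta-binomial testing, but the formula is not given here. One common signal-to-noise formulation, shown as an assumption rather than as the project's exact method, treats each state's true follow-up rate as drawn from a Beta distribution with parameters α and β fitted across states. Reliability for state j is then the share of total variance attributable to real differences between states. The symbols below (R_j, p̂_j, n_j) are illustrative and do not come from the report.

$$
R_j = \frac{\sigma^2_{\text{between}}}{\sigma^2_{\text{between}} + \sigma^2_{\text{within},j}},
\qquad
\sigma^2_{\text{between}} = \frac{\alpha\beta}{(\alpha+\beta)^2(\alpha+\beta+1)},
\qquad
\sigma^2_{\text{within},j} = \frac{\hat{p}_j\,(1-\hat{p}_j)}{n_j}
$$

Here p̂_j is the observed follow-up rate for state j and n_j is the number of eligible discharges in its denominator. Under this formulation, large denominators shrink the within-state term, so values close to 1 are plausible when rates vary widely across states.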

Other Lessons

This project identified several challenges and opportunities for developing and implementing quality measures focused on individuals with behavioral health conditions that may be useful for future efforts.

Multistakeholder engagement is critical to ensure that measures are meaningful and have the best chance for implementation. Our focus groups with consumers, providers, health plans, state officials, and performance measurement experts early in the project were critical to identify gaps in measurement, understand what entities could realistically be held accountable for performance on the measures, and identify data sources for measures. These stakeholders also provided valuable feedback to refine the measure specifications at several points in the project. They often have different perspectives, and finding common ground on quality measurement priorities can be difficult. In this project these stakeholders shared the concern that individuals with SMI and AOD have many co-morbid conditions that require better screening and monitoring, and that better monitoring of care transitions is needed. But they also proposed more controversial measurement concepts, including shared decision making, inappropriate use of psychotropic medications, monitoring of medication side effects, re-admissions, and others. For many of these concepts, there was no clear path forward to develop measures due to insufficient evidence or challenges identifying an entity accountable for the measure performance. Nonetheless, these are important concepts to consider for future work and it will be important to gain the input of all stakeholders to ensure that the final measures yield meaningful and actionable information.

Fragmentation of physical health and behavioral health coverage and services leads to fragmentation in accountability, creating obstacles for positioning and calculating measures. During the early stages of this project, for each measure concept that was proposed, we investigated the feasibility of existing data sources to calculate the measure and where the measure could be best positioned (providers, health plans, states, and such) to have the greatest impact on the quality of care. One of the major challenges we encountered is that no single entity is accountable for the quality of care for individuals with behavioral health conditions. Specialty mental health and substance abuse services are often carved out from general medical care or provided through special grant-funded systems of care that are not well connected with physical health plans, Medicaid, or other state agencies. This creates obstacles to accessing data across entities to calculate measures, and makes it difficult for these entities to act on the results of measures for which they perceive they have little influence. Many health plans initially volunteered to test our measures (indicating their interest in the health needs of individuals with SMI and AOD) but could not accurately calculate the measures because they did not have access to the full record of service utilization for their patients -- including both physical and behavioral health records and claims -- due to behavioral health carve-out arrangements or other limitations on data sharing. Stronger collaboration between the various entities responsible for providing the full array of services to the behavioral health population is necessary to facilitate the widespread implementation of quality measures, and to promote shared accountability for performance on such measures.

Measures of psychosocial care would provide a more comprehensive understanding of the quality of care. Many stakeholders were concerned about the lack of NQF-endorsed measures focused on psychosocial care to complement existing measures that assess medication use and adherence. There was a particular concern among stakeholders that measures are needed to monitor the accessibility and outcomes of evidence-based psychosocial care, including various psychotherapies and other community-based mental health and social services. As we considered developing measures focused on psychosocial care, we discovered the lack of a data collection and reporting infrastructure to support such measures. As part of this project, we summarized the challenges involved in developing and implementing such measures, and proposed several avenues for future measure-development -- with an emphasis on advancing the measurement of outcomes (Brown et al. 2014). Further work is needed to move psychosocial measures forward.

Interpretation of data confidentiality hinders implementation of quality measures for behavioral health populations. During our testing, we found that even health plans that have responsibility for comprehensive physical health and behavioral health benefits have trouble accessing records for their patients with behavioral health conditions, particularly records for individuals with AOD. Some health plans interpret federal and state privacy laws as preventing them from accessing behavioral health records, and overcoming the legal hurdles to access such data is very burdensome and time consuming. In addition, the health plans that piloted our measures found that many behavioral health providers are unaccustomed to providing records for quality improvement purposes, and may not respond to such requests out of fear of violating privacy rules. Greater clarity of the privacy laws is needed to give health plans and providers confidence in their ability to share data for quality improvement purposes while protecting the rights and privacy of consumers.

Although the measures tested in this project fill critical gaps, more measures that can be implemented on a national scale are needed to fully understand the quality of care provided to individuals with behavioral health conditions. Such measures must align with other federal and state initiatives (such as the electronic health record incentive program and Medicaid quality reporting) and take advantage of existing data sources and the evolving infrastructure for measurement.

I. PROJECT RATIONALE

A number of barriers have contributed to the lack of progress in the measurement of quality for behavioral health care (IOM 2006; Pincus et al. 2011). The lack of objective measures for diagnosis, poor documentation by providers, and limited implementation of evidence-based treatments make specifying and reporting quality measures challenging. Responsibility for behavioral health care is divided among providers and multiple funding streams. For low-income and disabled patients, it is split between federal and state funding streams, including Medicaid, Medicare, state MH/SA agencies, and other state programs. Further, although quality improvement efforts among health plans and providers have spurred attention to the accessibility, costs, and outcomes of care, these are largely unconnected to public sector efforts such as U.S. Department of Health and Human Services (HHS) Substance Abuse and Mental Health Services Administration's (SAMHSA's) Uniform Reporting System and its national surveys of MH/SA programs.

The current focus on quality in federal health reform initiatives presents compelling opportunities to redress this lack of attention to quality in behavioral health. The expansion of coverage through Medicaid and exchanges has resulted in larger enrollment of low-income adults, among whom behavioral health problems are common. The Affordable Care Act (ACA) established the HHS Centers for Medicare & Medicaid Services (CMS) Inpatient Psychiatric Facility Quality Reporting (IPFQR) program. It also authorized demonstrations of new care models designed to improve integration of care between primary care and MH/SA services. For the first time, standardized reporting by states on the quality of care for children enrolled in Medicaid and the Children's Health Insurance Program, as well as for adults in Medicaid, is occurring through provisions of ACA and the Children's Health Insurance Program Reauthorization Act (CHIPRA). Through the Health Information Technology for Economic and Clinical Health (HITECH) Act, thousands of providers receive incentives for implementing and demonstrating "meaningful use" (MU) of electronic health records (EHRs). To date, few behavioral health measures are included in these landmark efforts. For example, only two measures with a behavioral health focus (that is, Preventive Care and Screening: Tobacco Use: Screening and Cessation Intervention and Preventive Care and Screening: Screening for Clinical Depression and Follow-up Plan) are included in the 2014 list of measures for the CMS EHR incentive program (CMS 2014).

With the establishment of the National Behavioral Health Quality Framework, SAMHSA has articulated priorities for improving the quality of behavioral health care consistent with the National Strategy for Quality Improvement in Health Care. The framework defines goals for all aspects of care, including preventing behavioral health problems, implementing and improving treatment, and promoting and supporting recovery; targets populations from young children to the elderly; and includes MH/SA settings as well as broader health care provider and community-based efforts. Although this framework has the potential to drive quality improvement in behavioral health care, new measures are needed to monitor the quality of care and inform quality improvement efforts. Further, it is essential that efforts to identify, test, and implement new measures are aligned with other federal and state initiatives (such as the CMS EHR incentive program and Medicaid quality reporting) and take advantage of existing data sources and the evolving infrastructure for measurement (such as SAMHSA's ongoing reporting initiatives, health plan quality reporting, and new capabilities of health information technology).

A. Project Purpose

In September 2011, the HHS Office of the Assistant Secretary for Planning and Evaluation (ASPE), with support from SAMHSA, contracted with Mathematica Policy Research and the National Committee for Quality Assurance (NCQA) to develop quality measures focused on populations who receive behavioral health care. Although this project did not begin with a mandate to develop measures for a specific public reporting program, ASPE and SAMHSA wanted the measures to be broadly applicable to Medicaid and other populations, and suitable for potential incorporation into national reporting programs such as the Medicaid Adult Core Set and others.

As illustrated in Figure I.1, the first step in this three-year project involved prioritizing important measure concepts, a process informed by an environmental scan and focus groups with a range of stakeholders to identify measure gaps and priorities. The process identified several potential measure concepts, including measures that focused on preventive care and co-morbid conditions among people with serious mental illness (SMI) and/or alcohol or other drug dependence (AOD), as well as measures that focused on transitions between settings of care. We then reviewed the strength of evidence supporting each measure. After final measure concepts were selected, we developed measure specifications and pilot tested the measures. The pilot testing involved both quantitative data collection to examine the performance and psychometric properties of the measures, and qualitative data collection, including focus groups and a public comment period. Based on the findings from the testing, we refined the measure specifications and submitted the strongest measures to the National Quality Forum (NQF) for endorsement in July 2014. A Technical Expert Panel (TEP) provided guidance throughout the project.

In July 2012, the contract for this project was modified to support the development of measures for the CMS IPFQR program. The IPFQR measures have a different history and timeline from the measures whose development began in September 2011, and therefore are not included in this report. A separate report for the IPFQR measures is available from ASPE.

FIGURE I.1. Measure Development Process

B. Report Roadmap

This report summarizes the development and testing of the ambulatory quality measures. Chapter II describes the process for selecting measure concepts. Chapter III describes the process for specifying the measures. Chapter IV describes the methods used to test the measures, and Chapter V and Chapter VI summarize the findings. The final chapter offers lessons learned from this project that may be applicable to future measure development and implementation efforts.

II. SELECTION OF MEASURE CONCEPTS

The selection of measure concepts involved several steps: (1) conducting an environmental scan of existing measures to identify gaps; (2) holding focus groups with stakeholders to gather input on measurement priorities and where to position measures; (3) reviewing the strength of the evidence to support the measure concepts; and (4) convening a TEP to provide input on measure concepts and the evidence supporting those concepts. This chapter briefly describes these steps and how they influenced the development of the measures.

A. Scan of Measures

After initial meetings with ASPE and SAMHSA to identify priority measure-development areas, we conducted a review of existing behavioral health measures and measure-development initiatives to identify opportunities for potential measure concepts. The review was organized according to the SAMHSA Behavioral Health Quality Framework's six domains. This task involved multimode data collection drawing upon various data sources to identify the gaps in quality measurement.

Develop search criteria and taxonomy. We first developed definitions of terms used to search for and categorize data according to the domains in the framework. To align our review with other federal initiatives, we also included other domains in the taxonomy, such as those recommended by the HHS Office of the National Coordinator for Health Information Technology (ONC) Policy Committee Quality Measures Workgroup for Meaningful Use measures and categories used to organize the core sets for the CHIPRA of 2009 and Adult Medicaid. We built on the taxonomy NCQA developed for organizing measures for consideration for the Medicaid Adult Core set.

Identify and collect measures. We then searched the three most widely used sources of measures: the National Quality Measures Clearinghouse, NQF, and the online inventory maintained by the Center for Quality Assessment in Mental Health. We also reviewed measures used or developed by SAMHSA (including those developed under the Mental Health Statistics Improvement Program), the U.S. Department of Veterans Affairs (VA), and the National Association of State Mental Health Program Directors. The scan did not include measures developed from international sources, measures pertaining to dementia (per feedback from ASPE and SAMHSA), or measures for all co-morbid physical conditions that could be applied to people with behavioral health conditions. Table II.1 provides a summary of the data sources and key search terms used for the scan.

To identify any additional measures that may not have been captured in these sources, we supplemented the search by reviewing findings from prior environmental scans conducted for the following projects:

- Subcommittee of the HHS Agency for Healthcare Research and Quality's (AHRQ's) National Advisory Council: Identifying Quality Measures for Medicaid Eligible Adults.

- American Recovery and Reinvestment Act HITECH Eligible Professional Clinical Quality Measures (CQM).

- ONC CHIPRA Electronic CQM Development.

- Development, maintenance, and support of hospital outpatient, outpatient imaging efficiency, psychiatric inpatient, and cancer hospitals quality of care measures.

| TABLE II.1. Data Sources and Key Search Terms for the Environmental Scan | |
|---|---|
| Data Source | Key Search Terms/Categories |
| National Quality Forum | |
| National Quality Measures Clearinghouse | |
| Center for Quality Assessment in Mental Health | |

We created a detailed spreadsheet categorizing each measure by name, description, numerator, denominator, exclusion populations or criteria, NQF identification number, domain, data source, level of specification, type of measure, condition, and age range for the relevant population. We also assigned measures to a priority area of the SAMHSA quality framework and created domains and subdomains within the framework to provide greater specificity. The domains and subdomains were based on a categorization scheme developed for the International Initiative for Mental Health Leadership project, which conducted a scan of international initiatives in mental health quality measurement (Fisher 2012).

B. Focus Groups

The focus groups were intended to obtain stakeholder input on the most relevant topics for measure development. We conducted discussions with six groups of stakeholders in February and March 2012 to identify priorities and gaps in behavioral health quality measurement. These stakeholders included: (1) consumers and consumer representatives; (2) researchers and performance measurement experts; (3) representatives of state MH/SA agencies; (4) state Medicaid program representatives; (5) health plans; and (6) providers, including specialty MH/SA providers and primary care and family practice providers. Each discussion included 6-8 individuals and had a slightly different focus given the expertise and knowledge of different stakeholder groups.

During each discussion, we asked participants to identify their priorities for behavioral health quality measurement. We asked participants to focus on quality measures for working-age adults who receive behavioral health services (mental health or substance abuse treatment) in primary care or specialty behavioral health care settings. The discussion facilitators emphasized that participants should not limit their consideration of measures to any particular payer (for example, Medicaid or private plans); accountable entity (such as the state, Medicaid, health plans, or providers); or diagnostic group. We encouraged participants to suggest measure areas and specific measures that could apply to a range of populations and service settings based on what they viewed as the most pressing quality concerns or gaps in quality measurement. Below is a brief summary of the focus of each discussion:

- In our first discussion, we asked consumers and consumer representatives about the challenges that consumers encounter when trying to access care and points in the service system that require quality improvement.

- We held a second discussion, with researchers and performance measurement experts, to gather their input on gaps in quality measurement, existing measures that could be refined, or areas in which new measures are needed.

- Our third discussion, with representatives of state MH/SA agencies, focused on gathering input on the types of measures that would help their agencies monitor and improve the quality of care.

- Our fourth meeting, with representatives of state Medicaid programs, focused on the types of measures that would help Medicaid programs monitor and improve care.

- Our fifth meeting, with representatives from health plans, allowed us to gather information about their experiences with existing quality measures, the types of new measures that would help them monitor and improve care, and the feasibility of reporting different types of measures.

- Our final meeting, with providers, focused on the saliency and clinical relevance of selected measure concepts, and the feasibility of reporting certain measures from the provider perspective.

Following the focus groups, the team reviewed the transcripts and notes to find convergence on key themes and identify divergent viewpoints. We determined to what extent the measure priorities differed across stakeholder groups. We also summarized challenges that participants identified related to development, adoption, or implementation of the measures. We submitted a memo to ASPE summarizing key findings from all the focus groups and then debriefed ASPE and SAMHSA to select measure concepts with the strongest support.

C. Evidence Review

Based on the environmental scan and focus groups, we prioritized measure concepts for ASPE and SAMHSA to review. We then conducted evidence reviews on the prioritized concepts in order to assess whether there is clear guidance to specify the denominator and numerator of a measure. The evidence reviews also addressed a critical component of NQF review -- the importance of a measure, including the extent to which it reflects a high-impact aspect of the national health care system and the evidence base supporting the measure.

We conducted reviews in five areas: (1) screening and monitoring of general health conditions among individuals with SMI and substance use disorders; (2) preventive services for risky sexual behaviors among high-risk substance-using populations; (3) discharge planning and post-discharge follow-up from inpatient, emergency department, or residential care; (4) shared decision making in behavioral health care; and (5) medication-assisted opioid treatment.

The reviews drew on evidence-based clinical guidelines, systematic reviews (including meta-analyses), and the recommendations of authoritative government agencies and task forces, including the U.S. Preventive Services Task Force (USPSTF), the HHS Centers for Disease Control and Prevention (CDC), and others.

The approach and methodology of each review varied depending on the measure concept. For all measure concepts, we began with a search of evidence-based clinical guidelines and systematic reviews. For some concepts, we did not find clinical guidelines or systematic reviews focused on our target condition or specifically on the SMI or AOD populations. For the measure concepts of screening and follow-up for general health conditions and infectious diseases, we reviewed USPSTF, CDC, and other recommendations for the general population. We also examined whether there is evidence of higher prevalence of certain health conditions or disparities in screening or treatment for those conditions among individuals with mental health disorders or AOD to determine whether it would be sensible to adapt existing measures for our target population. For the measure concept focused on discharge planning and post-discharge follow-up, in the absence of clear guidelines or systematic reviews focused on our target population, we examined existing quality measures to identify opportunities for adapting them or developing new measures.

D. Technical Expert Panel Meeting

The TEP was convened to provide input on the selection of measure concepts and offer feedback on the measure specifications and testing results throughout the duration of the project. The TEP included experts in behavioral health quality measurement, the treatment of behavioral health disorders, and the organization and financing of behavioral health services. It also included representatives from consumer and family organizations, state MH/SA agencies, provider organizations, health plans, and state Medicaid programs. Representatives from several federal agencies also attended all the TEP meetings. (See Appendix A for the list of TEP members.)

The initial TEP meeting was held in July 2012 and focused on reviewing the findings from our environmental scan and focus groups. We reviewed the evidence summaries, which were provided to TEP members prior to the meeting. The TEP then prioritized measure concepts for further specification and testing.

E. Final Measure Concepts

At the conclusion of this process, ASPE and SAMHSA selected seven measure concepts for further specification and testing (Table II.2). These measures fell into three broad categories: (1) screening and follow-up for physical health and co-morbid conditions among people with SMI and AOD; (2) monitoring of chronic physical health conditions among individuals with SMI; and (3) follow-up after discharge from an emergency department for individuals with mental health conditions and AOD.

| TABLE II.2. Ambulatory Measure Concepts Selected for Specification and Testing | | |
|---|---|---|
| Measure Concept | Specified and Tested for SMI Population | Specified and Tested for AOD Population |
| BMI assessment and follow-up | X | |
| Alcohol screening and follow-up | X | |
| Blood pressure screening and follow-up | X | X |
| Tobacco assessment and follow-up | X | X |
| Depression screening and follow-up | | X |
| Comprehensive diabetes care (includes 6 indicators)1 | X | |
| Blood pressure control | X | |
| Follow-up after discharge from emergency room2 | X | X |

NOTES:


There were several factors that influenced the selection of these measure concepts, as described below:

- Focus group and TEP support. The focus groups and TEP strongly supported improving screening for co-morbid conditions and monitoring of chronic conditions among individuals with SMI and AOD. They also strongly supported measures that focus on transitions between different settings of care because these transitions present opportunities for individuals to lose contact with the health care system.

- Measures fill an important gap. The recommendations of the focus groups and TEP were consistent with our review of measure gaps. There is a lack of NQF-endorsed measures that assess whether individuals with SMI and AOD receive preventive care and monitoring of chronic conditions. In addition, their recommendations are consistent with the recommendations that NQF "examine its portfolio of existing outcome measures and consider stratification for the MHSU [mental health and substance use] populations, thereby allowing these measures to be applied to persons with various MHSU conditions across care settings" (NQF 2011).

- Strong evidence for the measures. Certain co-morbid physical health conditions (obesity, diabetes, and hypertension) and health behaviors (tobacco use) are more common among individuals with SMI and AOD. These conditions are often undetected or poorly managed in these populations; individuals with SMI die, on average, two decades early due in part to these co-morbid conditions and health behaviors.

The process used to select measure concepts also identified a need for measures focused on the delivery and outcomes of psychosocial care. Although there are evidence-based psychosocial treatments for a number of conditions, there is a lack of quality measures to track the uptake and outcomes of these treatments. Such quality measures could help encourage greater use of evidence-based practices by providing tools for monitoring and rewarding the adoption and implementation of effective psychosocial treatments. Unfortunately, data systems commonly used for quality measurement (claims and medical records) have limited ability to capture information on the use or outcomes of effective psychosocial treatments.

In the fall of 2012 we received input from members of our TEP and several leading experts to help us identify next steps for developing quality measures focused on psychosocial care. Based on that feedback, we wrote a white paper that described the strengths and limitations of various strategies for measuring the quality of psychotherapy, and proposed next steps for the development of such measures (Brown et al. 2014).

III. SPECIFICATION OF MEASURES

The next step in the project involved developing measure specifications. After gathering feedback from our TEP and other stakeholders, we determined that our measures were most suitable for health plan reporting. Health plans have an opportunity to ensure that their patients receive preventive care and monitoring of chronic conditions as well as follow-up during care transitions.

As described below, in an effort to align our specifications for the SMI and AOD populations with measures used for the general population, we modeled the specifications on existing measures reported by health plans and providers (referred to as the "parent" measures in Table III.1). The process for specifying the screening and monitoring measures was somewhat different than the process for specifying the follow-up after emergency department measure given the different data sources used for the measures. Here we summarize the steps in the specification process and the major adaptations that were made to the parent measures. Appendix B includes the final measure specifications.

A. Specification of Screening and Monitoring Measures

Identification of data sources for measures. Based on feedback from stakeholder focus groups and our TEP, we determined that patient record review was necessary to accurately capture the numerator of these measures because several numerator components are not reliably reported using claims data. All of the screening measures, and the measures to monitor diabetes and hypertension, were specified using "hybrid" data sources. These measures use health plan administrative/claims data to identify the denominator-eligible population for the measure, and primarily medical records (paper or electronic) to calculate the numerator. Depending on the measure, some numerator components can also be identified using claims data (for example, claims codes for smoking cessation treatment count toward the numerator of the tobacco screening measure).
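The hybrid approach described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `MemberEvidence` record, its field names, and the `hybrid_rate` function are hypothetical and do not come from the measure specifications in Appendix B. The sketch assumes eligibility has already been determined from administrative/claims data, and that a member meets the numerator if either the medical record or a claims code documents the service.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class MemberEvidence:
    """Hypothetical per-member summary; field names are illustrative only."""
    member_id: str
    in_denominator: bool        # eligibility identified from administrative/claims data
    record_numerator_hit: bool  # service documented in the medical record
    claims_numerator_hit: bool  # service identified from claims codes


def hybrid_rate(members: Iterable[MemberEvidence]) -> Optional[float]:
    """Return the share of denominator-eligible members who meet the numerator.

    A member counts toward the numerator when either data source shows
    the service. Returns None when no member is eligible.
    """
    eligible = [m for m in members if m.in_denominator]
    if not eligible:
        return None
    met = sum(
        1 for m in eligible
        if m.record_numerator_hit or m.claims_numerator_hit
    )
    return met / len(eligible)
```

In this sketch a member who is not denominator-eligible is ignored even if a record or claim shows the service, which mirrors the idea that the claims-based denominator is fixed before the numerator is assessed.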

We modeled our measures on health plan measures that are reported as part of the Healthcare Effectiveness Data and Information Set (HEDIS®) and on provider-level measures that are included in the CMS Physician Quality Reporting System (PQRS) (Table III.1). Here we briefly describe the overarching approach to the specification and adaptation process.

Adaptation of PQRS measures. We sought to align our health plan specifications for the tobacco, body mass index (BMI), depression, alcohol, and blood pressure screening measures with the provider-level measures that are included in PQRS. These provider-level measures are reported using G-codes or Current Procedural Terminology (CPT) Category II codes. These are non-payment codes that can be submitted on claims forms. All the measures we adapted have also been specified for electronic reporting and several are included in the CMS Meaningful Use Incentive Program. Because the CPT II and G-codes are not routinely used, we used the narrative specification developed as part of the electronic specifications to guide our health plan specifications.

| TABLE III.1. Measures Tested for SMI and/or AOD Population | | | |
|---|---|---|---|
| New Measure Developed for This Project (NQF # assigned for review) | Parent Measure that Served as Model (NQF #) | Parent Measure Steward | Parent Measure Use in Federal Programs (PQRS measure # where applicable) |
| BMI Screening and Follow-up for People with SMI (2601) | Preventive care and screening: BMI screening and follow-up (0421) | CMS | PQRS (128), MU Stage 2, NQF Duals |
| Alcohol Screening and Follow-up for People with SMI (2599) | Unhealthy alcohol use: Screening and brief counseling (2152) | AMA-PCPI | None (screening component of measure is similar to PQRS 173) |
| High Blood Pressure Screening and Follow-up for People with SMI or AOD (not submitted to NQF) | Preventive care and screening: Screening for high blood pressure and follow-up documented (not NQF-endorsed) | CMS | PQRS (317) |
| Tobacco Use Screening and Follow-up for People with SMI or AOD (2600) | Preventive care and screening: Tobacco use screening and cessation intervention (0028) | AMA-PCPI | PQRS (226), MU Stage 2, NQF Duals |
| Clinical Depression Screening and Follow-up for People with AOD (not submitted to NQF) | Screening for clinical depression and follow-up plan (0418) | CMS | PQRS (134), Adult Medicaid Core Set, MU Stage 2, NQF Duals |
| Comprehensive Diabetes Care for People with SMI: | Comprehensive diabetes care: | NCQA | |
| | | | Adult Medicaid Core Set |
| | | | Medicare Stars, MU Stage 2 |
| | | | MU Stage 2 |
| | | | Medicare Stars, MU Stage 2 |
| | | | Medicare Stars, MU Stage 2 |
| | | | MU Stage 2 |
| Controlling High Blood Pressure for People with SMI (2602) | Controlling high blood pressure (0018) | NCQA | Adult Medicaid Core Set, Medicare Stars, SNP, MU Stage 2 |
| Follow-Up After Emergency Department Use for Mental Health Conditions or AOD (2605) | Follow-up after hospitalization for mental illness (0576) | NCQA | Adult Medicaid Core Set, SNP, NQF Duals |

We made four main adaptations to the existing PQRS specifications, described below:

- Expanding the data source for measure exclusions. We sought to keep the original measure exclusions. The parent PQRS measures identify exclusions through the medical record. Given that health plans have access to administrative and claims data, our health plan specification allows for the exclusions to be identified with these data sources to reduce the data collection and reporting burden on health plans, and to ensure that exclusions not documented in medical records are captured. For example, for the BMI screening measure, our specifications allow for individuals to be excluded due to pregnancy if the pregnancy is documented in the medical record or identified using claims codes for pregnancy. For some measures we also changed the exclusion criteria when the original exclusion was not appropriate for health plan reporting. For example, the parent specification for the alcohol screening measure allowed for patients to be excluded from the denominator if there was a medical reason that interfered with screening during the visit (as would be appropriate for a provider-level measure), but such an exclusion is not necessary or appropriate for health plans, which have a longer time period to ensure that their patients receive screening and follow-up care.

- Refining denominator population to focus on SMI and/or AOD and require continuous enrollment in health plan. The denominator for each measure was limited to the SMI and/or AOD population (depending on the measure) rather than the general population. We specified the denominator based on evidence that the target condition was either more prevalent among the SMI and/or AOD population or that these populations experience disparities in care for the target condition. The specification of the SMI and AOD denominators aligned with other NQF-endorsed HEDIS measures reported by health plans. Consistent with other health plan measures, the denominator also required that the patient was continuously enrolled in the health plan for the time frame required to assess both the numerator and denominator. The period of continuous enrollment varied by measure. For example, the continuous enrollment period for the HbA1c testing measure was the measurement year with no more than one gap in enrollment of up to 45 days. This is consistent with the parent measure and allows for identification and testing during the same measurement year.

- Refining time frame for numerator to reflect health plans' level of accountability. The numerator was modified to recognize the opportunity that health plans have to ensure that their patients receive care over a longer time period. The existing provider-level PQRS measures mainly assess whether screening and follow-up care was delivered by a reporting provider at the visit (or a previous visit to the same provider). In contrast, health plan measures (including those reported for HEDIS) typically use a look-back period of one year or longer to capture whether any provider delivered the service. We selected the appropriate time frame for our specifications by reviewing clinical guidelines and USPSTF recommendations for screening and follow-up care, and examining the evidence supporting the parent measures. We also sought to align the time frame for the numerator across our measures when appropriate to minimize confusion for health plans that may implement these measures as a group.

- Strengthening the numerator requirements to reflect health plans' level of accountability and the intensity of services necessary for SMI and AOD populations. Based on stakeholder feedback and guidance from the TEP, we also modified the numerator for several of the screening measures to recognize that health plans have an opportunity to ensure that their patients receive more intensive follow-up care over a longer period of time than could be expected of individual providers, and to recognize that the SMI and/or AOD populations may require more intensive intervention than the general population given the complexity of their health, mental health, and psychosocial needs. For example, the existing provider-level PQRS numerator specification for the unhealthy alcohol use screening measure requires evidence of brief counseling (because this measure is specified for the general population and it is reasonable to hold providers accountable for delivering brief counseling during the visit). Our stakeholder focus groups and TEP advised that brief counseling was insufficient follow-up for individuals with SMI and encouraged us to strengthen the measure by requiring two events of counseling over the measurement year following the positive screening. All of the screening measures were revised in this fashion.
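The continuous enrollment rule mentioned in the list above (enrollment for the measurement year with no more than one gap of up to 45 days, as for the HbA1c testing measure) can be illustrated with a short Python sketch. The function name, the representation of enrollment as inclusive `(start, end)` date pairs, and the choice to count missing coverage at the start or end of the year as a gap are all assumptions made for illustration; the final specifications in Appendix B govern the actual rule.

```python
from datetime import date, timedelta
from typing import Iterable, Tuple

Span = Tuple[date, date]  # inclusive (start, end) of one enrollment span


def continuously_enrolled(
    spans: Iterable[Span],
    year: int,
    max_gaps: int = 1,
    max_gap_days: int = 45,
) -> bool:
    """Check enrollment across a calendar measurement year.

    Assumption: any uncovered stretch inside the year, including one at
    the very start or end, counts as a gap.
    """
    year_start, year_end = date(year, 1, 1), date(year, 12, 31)
    # Keep only spans that touch the year, clipped to its boundaries.
    clipped = sorted(
        (max(start, year_start), min(end, year_end))
        for start, end in spans
        if start <= year_end and end >= year_start
    )
    gaps = []
    cursor = year_start  # first day not yet known to be covered
    for start, end in clipped:
        if start > cursor:
            gaps.append((start - cursor).days)
        cursor = max(cursor, end + timedelta(days=1))
    if cursor <= year_end:
        gaps.append((year_end - cursor).days + 1)
    return len(gaps) <= max_gaps and all(g <= max_gap_days for g in gaps)
```

Because the gap limits are parameters, the same sketch could be reused for measures whose continuous enrollment period differs, although a period other than a single calendar year would need a different window definition.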

At various points in the project, we reviewed our specifications with the PQRS measure developers and measure stewards (including CMS and American Medical Association Physician Consortium for Performance Improvement [AMA-PCPI]) to understand the evidence supporting the existing measures and ensure that our specifications adhered to the original intent of the measures. We gathered their feedback on the adaptations described above prior to submission to NQF. We also discussed future stewardship of the measures developed as part of this project, and determined that NCQA would serve as the steward of the new health plan measures.

Adaptation of HEDIS measures. Given that the diabetes and hypertension control measures are already specified for health plan reporting for the general population, and have strong evidence to support their applicability to the SMI population, we did not make any adaptation of the exclusions or numerator of these measures. Rather, we limited the denominator to the SMI population and used the existing exclusions and numerator specifications to facilitate comparisons with the general population. During the period of our testing, new guidelines for cholesterol management for people with cardiovascular disease were published. As a result, NCQA retired two diabetes care HEDIS indicators, LDL screening and LDL control. For this reason, we removed these indicators from consideration for this measure set and do not report the results. The HbA1c <7 percent indicator of the diabetes care measure was removed from consideration and is not reported because it is not NQF-endorsed.

B. Specification of Follow-up after Emergency Department Measure

Our measure of follow-up care after emergency department discharge is calculated using only claims data. The specification was modeled on the NQF-endorsed Follow-up After Hospitalization for Mental Illness measure (NQF #0576), for which NCQA is the steward.

Defining the denominator. We intended this measure to be broadly applicable to all mental health emergency department (MH ED) and AOD emergency department (AOD ED) visits. Based on feedback we received early in the project from our TEP and stakeholders, we limited the denominator to emergency department visits with a primary mental health or AOD diagnosis. There are two denominator populations: MH ED visits and AOD ED visits. To reduce confusion for health plans that may report these measures in the future, the mental health diagnosis codes were aligned with the Follow-up After Hospitalization for Mental Illness measure (NQF #0576), and the AOD diagnosis codes were aligned with the Initiation and Engagement of Alcohol and Other Drug Dependence Treatment (IET) measure (NQF #0004).

Defining the numerator. The numerator requires an outpatient or partial hospitalization visit with a primary diagnosis of mental health or AOD (mental health diagnosis at follow-up for MH ED discharges and AOD diagnosis at follow-up for AOD ED discharges). We did not restrict the numerator to visits with mental health or AOD practitioners because the TEP and other stakeholders reported that primary care visits should be considered as meeting the numerator requirement given the broad denominator population, and because primary care providers are increasingly providing behavioral health care. The measure yields the following four rates:

- 7-day follow-up after MH ED discharges.

- 30-day follow-up after MH ED discharges.

- 7-day follow-up after AOD ED discharges.

- 30-day follow-up after AOD ED discharges.
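The four rates listed above can be illustrated with a minimal sketch. This is not the measure's official specification: the record types `EDVisit` and `FollowUpVisit`, the function `follow_up_rates`, and the category labels `"MH"` and `"AOD"` are hypothetical names introduced for illustration. The sketch also assumes that a follow-up visit counts only if it occurs after the discharge date and within the window; the handling of same-day visits is an assumption and is not stated in this section.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class EDVisit:
    """An ED discharge with a primary MH or AOD diagnosis (hypothetical record)."""
    patient_id: str
    discharge: date
    category: str  # "MH" or "AOD"


@dataclass
class FollowUpVisit:
    """An outpatient or partial hospitalization visit (hypothetical record)."""
    patient_id: str
    service_date: date
    category: str  # primary diagnosis category, "MH" or "AOD"


def follow_up_rates(ed_visits, follow_ups):
    """Return {(category, window_days): rate} for the four rates.

    A discharge meets the numerator when the same patient has a follow-up
    visit with a matching diagnosis category 1..window days after discharge
    (assumption: same-day visits are not counted).
    """
    rates = {}
    for category in ("MH", "AOD"):
        denominator = [v for v in ed_visits if v.category == category]
        for window in (7, 30):
            met = 0
            for visit in denominator:
                if any(
                    f.patient_id == visit.patient_id
                    and f.category == category
                    and 0 < (f.service_date - visit.discharge).days <= window
                    for f in follow_ups
                ):
                    met += 1
            # Rate is undefined (None) when there are no eligible discharges.
            rates[(category, window)] = met / len(denominator) if denominator else None
    return rates


if __name__ == "__main__":
    ed = [EDVisit("p1", date(2013, 3, 1), "MH"), EDVisit("p2", date(2013, 3, 1), "AOD")]
    fu = [FollowUpVisit("p1", date(2013, 3, 6), "MH")]
    for key, rate in follow_up_rates(ed, fu).items():
        print(key, rate)
```

Matching the follow-up diagnosis category to the ED discharge category mirrors the numerator rule described above (mental health follow-up for MH ED discharges, AOD follow-up for AOD ED discharges). No restriction on practitioner type is applied, consistent with the decision to count primary care visits.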

IV. APPROACH TO MEASURE TESTING

Following the specification of the measures, we pilot tested the measures using quantitative and qualitative methods. The testing was designed to assess the performance and psychometric properties of the measures and to gather information to inform their eventual implementation. Moreover, the testing was intended to gather information about the importance, scientific acceptability, usability, and feasibility of the measures, as defined in the following NQF measure criteria:

- Importance. The strength of evidence supporting that a measure concept promotes high-quality care and allows for differentiation in performance.

- Scientific acceptability. The verification that the psychometric properties of a measure -- validity and reliability -- are strong enough to justify its use to assess quality of care.

  - Validity. The ability of measure specifications to promote accuracy in data collection and measure score calculation to ensure appropriate characterization of performance.

  - Reliability. The ability of measure specifications to promote consistency in data collection and aggregation to ensure that variability in measure score reflects actual variation in performance.

- Usability. The value of a measure in informing quality improvement activities.

- Feasibility. The availability of data elements required for the calculation of a measure, whether a measure is susceptible to inaccuracies, and the level of effort involved in collecting and calculating the measure.

This chapter describes the methods used to test each of these criteria. We briefly summarize the overarching testing questions and then describe the specific methods.

A. Testing Questions

The testing questions vary somewhat according to the measure. Because the validity of most of the screening and monitoring measures is already established for the general population, the testing of these measures had a stronger focus on four areas: the availability of data to calculate the measure for the SMI/AOD populations (feasibility); disparities in screening, follow-up, or monitoring among the SMI/AOD populations when compared with the general population (importance); whether the measures could be consistently implemented across health plans and chart abstractors (reliability); and whether health plans and other stakeholders find value in the measure results (usability). The testing of the follow-up after emergency department measure had a stronger focus on gathering feedback on the validity of the measure, in addition to examining its importance, reliability, usability, and feasibility.

The following overarching questions guided the testing:

- Are the measures appropriate for assessing quality of care and do they address a priority condition? Is there room for improvement, and are there gaps in care? (importance)

- Are measure exceptions or exclusions necessary and appropriate? (validity)

- As specified, can the data elements and measures be calculated consistently (reliability) and capture the intended information? (validity)

- Can stakeholders use performance results for quality improvement and decision making? (usability)

- Can the measures be calculated accurately and without undue burden? (feasibility)

We collected quantitative and qualitative data to test the measures. The quantitative data collection involved gathering data from health plans or using claims data to calculate measure scores and examine various attributes of performance. The qualitative data collection involved gathering feedback from a TEP, multistakeholder focus groups, and public comment. We first describe the approach to quantitative testing of the screening and monitoring measures and then describe the quantitative testing of the follow-up after emergency department measure. Finally, we describe our approach to collecting feedback on the specifications and measure performance using qualitative methods.

B. Quantitative Testing of Screening and Monitoring Measures

For the screening and monitoring measures, the quantitative testing was designed to answer the questions in Table IV.1. We piloted the screening and monitoring measures at three health plans, which allowed us to examine the performance of the measures if they were implemented following the typical HEDIS reporting processes for measures that use hybrid data sources (that is, using administrative claims data along with medical record review). This allowed us to observe whether health plans could reliably understand the measure specifications and access the necessary data sources and data elements to calculate the measures. Here we describe the characteristics of the health plans and data collection process.

TABLE IV.1. Quantitative Testing and Analysis of Screening and Monitoring Measures

| Criterion | Testing Question(s) | Data Source | Data Analysis |
|---|---|---|---|
| Importance/Performance Gap | Is performance lower for the SMI and/or AOD population compared with the general population? | Performance results for each subpopulation and the performance results for the general population | Descriptive analysis (mean, range, outliers) of performance by diagnosis, plan, and populations (SMI/AOD versus the general population) |
| | Are there differences in performance across plans? | Measure performance across health plans | |
| | Are there differences related to diagnosis or other patient characteristics? | Measure performance by diagnosis and patient demographics | |
| Feasibility | Are the data needed to define the eligible population available? | The size of the denominator in the plans | Descriptive analysis of the size of the eligible population by diagnosis |
| | How large is the eligible population? | | |
| | Where are the data needed to assess the numerator (for example, primary care versus mental health records)? | Data on whether the numerator was found in medical/physical health or behavioral health records | |
| Reliability | | | |
| Specifications | Can the denominator definitions be implemented consistently across plans? | Data on denominator prevalence and size | Sensitivity analyses to explore the impact of different definitions on prevalence and sample size |
| Inter-rater Reliability | Are the data required for data element and measure calculation comparable when collected by 2 different chart abstractors? | Data abstracted by 2 abstractors | Agreement using kappa statistic |
| Validity | | | |
| Content validity | Do the definitions for the SMI and AOD denominators capture the intended populations? | Data on denominator prevalence and size | Descriptive analyses to explore size of denominator using different specifications |
| | Are measure exclusions appropriate? | Performance results with and without measure exclusions | Sensitivity analyses to explore the impact of measure exclusions on measure performance |
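The inter-rater reliability row of Table IV.1 refers to agreement measured with the kappa statistic. As an illustration only, the sketch below computes Cohen's kappa for two abstractors who each record one categorical value per chart. The function name `cohens_kappa` and the sample ratings are hypothetical, and the report does not state which variant of kappa was used, so the unweighted two-rater form is an assumption.

```python
import math
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Unweighted Cohen's kappa for two raters over the same items.

    kappa = (p_o - p_e) / (1 - p_e), where p_o is the observed agreement
    and p_e is the agreement expected by chance from each rater's
    marginal category frequencies.
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("Both raters must rate the same non-empty set of items.")

    n = len(rater_a)
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n

    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)

    if math.isclose(expected, 1.0):
        # Both raters used a single identical category for every item;
        # kappa is undefined (0/0) in this case.
        return float("nan")
    return (observed - expected) / (1 - expected)


if __name__ == "__main__":
    # Hypothetical data: 1 = numerator element found in chart, 0 = not found.
    abstractor_1 = [1, 1, 0, 0]
    abstractor_2 = [1, 0, 0, 0]
    print(cohens_kappa(abstractor_1, abstractor_2))
```

Kappa corrects raw percent agreement for the agreement two abstractors would reach by chance, which matters when one category (for example, "screening not documented") dominates the sample.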

C. Characteristics of Health Plans that Participated in Testing

We sought to recruit three Medicaid health plans that were geographically diverse. We first announced the project via NCQA's HEDIS Users Group listserv, which reached 146 health plans that reported HEDIS measures in 2013. We also sent the announcement to various stakeholder groups, including the Association for Community Affiliated Plans and the Medicaid Health Plans of America. We then conducted informational meetings with health plans that expressed interest. During the meetings, we provided additional information regarding the specifics of the measures and testing plans, and requested that each health plan submit information on its enrollment, product lines, coverage for mental health and substance use services, and access to general medical and behavioral health records.

We then assessed whether the interested health plans met the following desired requirements:

- Enrolled Medicaid population (including only Medicaid beneficiaries or those eligible for both Medicaid and Medicare [dual eligibles]).

- Sufficient number of patients with SMI and AOD.

- Responsible for MH/SA benefits.

- Access to general medical and behavioral health records for their patients.

- At least two experienced medical record abstractors available for the testing.

We then conducted follow-up interviews with candidate health plans to confirm that they had the capacity to participate in the testing and discuss potential data access challenges before selecting the final three health plans. We established a memorandum of understanding with each health plan to govern the secure use of the data submitted by the health plans. We provided each health plan with a modest honorarium to offset the costs of data collection.

The final three health plans included a Dual Special Needs Plan (D-SNP) for dual eligibles, a plan that enrolled primarily disabled Medicaid beneficiaries, and a plan that enrolled only adult non-disabled Medicaid beneficiaries. These plans differed in their enrollment size and geographic location/coverage (Table IV.2). For the D-SNP, some community MH/SA services were carved out to a separate Medicaid managed behavioral health organization (MBHO). However, the MBHO allowed the D-SNP full access to its data systems and patient records as part of their existing relationship, and it collaborated with the D-SNP on the testing. The other two plans were fully responsible for both medical and behavioral health benefits, and therefore had access to the records for all the selected patients.

TABLE IV.2. Characteristics of Health Plans that Participated in Pilot Test

| Health Plan | D-SNP | Medicaid Disabled | Medicaid Adult |
|---|---|---|---|
| Location | Multicounty in Mid-Atlantic region | Single county in Midwest | Single state in West |
| Medicare/Medicaid eligibility | Dually enrolled in Medicaid and Medicare | Enrolled in Medicaid due to disability | Enrolled in Medicaid due to poverty |
| Plan type | HMO and MBHO | HMO | HMO |
| Covered population | 12,755 | 13,431 | 131,033 |
| Covered benefits | HMO: Medical, Medicare covered mental health and AOD, and pharmacy. MBHO: Medicaid community mental health services | Medicaid medical, pharmacy, mental health, and AOD | Medicaid medical, pharmacy, mental health, and AOD |

D. Health Plan Data Collection

Following the recruitment of the health plans, the primary quantitative data collection consisted of three major components: (1) identification of the denominator samples; (2) submission of administrative/claims data; and (3) abstraction of medical and behavioral health records.

Identification of denominator samples. We asked each health plan to use their administrative/claims data to identify the following random samples of patients:

-