
ABSTRACT

This report describes an extension of the RAND Corporation's evaluation of the Substance Abuse and Mental Health Services Administration's Primary and Behavioral Health Care Integration (PBHCI) grants program. PBHCI grants are designed to improve the overall wellness and physical health (PH) status of people with serious mental illness (SMI) or co-occurring substance use disorders by supporting the integration of primary care and preventive PH services into community behavioral health (BH) centers where individuals already receive care. From 2010 to 2013, RAND conducted a program evaluation of PBHCI, describing the structure, process, and outcomes for the first three cohorts of grantee programs (awarded in 2009 and 2010). That evaluation found wide variation in program structures, a range of implementation barriers, and some consumer-level improvements in PH outcomes (e.g., cholesterol, diabetes management). The current study extends previous work by investigating the impact of PBHCI on consumers' health care utilization, total costs of care to Medicaid, and quality of care in three states.

This report was prepared under contracts #HHSP23320095649WC and #HHSP23337015T between HHS's ASPE/DALTCP and the RAND Corporation. For additional information about this subject, you can visit the DALTCP home page at https://aspe.hhs.gov/office-disability-aging-and-long-term-care-policy-daltcp or contact the ASPE Project Officer, Joel Dubenitz, at HHS/ASPE/DALTCP, Room 424E, H.H. Humphrey Building, 200 Independence Avenue, S.W., Washington, D.C. 20201; Joel.Dubenitz@hhs.gov.

DISCLAIMER: The opinions and views expressed in this report are those of the authors. They do not reflect the views of the Department of Health and Human Services, the contractor or any other funding organization. This report was completed and submitted in October 2016.

TABLE OF CONTENTS

1. INTRODUCTION

- Poor Health Outcomes and High Costs of Care for Adults with Serious Mental Illness

- Integrated Care May Improve Health Care Quality and Overutilization of Intensive Services

- Effects of Integrated Care on Health Care Costs

- Primary and Behavioral Health Care Integration Grants

- Estimating Health Care Quality, Service Utilization, and Cost of Care from Public Payer Claims

- Clinic-Level Differences

- Research Questions

2. METHODS

- Selection of States

- Data Sources

- Identification of Primary and Behavioral Health Care Integration Clinics in the Claims Data

- Identification of Comparison Clinics

- Outcome Measures

- Statistical Analyses

- Supplemental Analysis of Continuously Treated Consumers

3. RESULTS

- Sample Descriptions

- Utilization Measures

- Cost of Medicaid Reimbursements

- Quality Measures

- Supplemental Analysis of Continuously Treated Individuals

- Results Summary

4. DISCUSSION

- Utilization of Emergency Department and Inpatient Services

- Cost of Care

- Quality Measures

- Study Limitations

- Conclusion

APPENDICES

- APPENDIX A: Quality and Utilization Measures Considered for Study

- APPENDIX B: Year-by-Year Estimates of PBHCI Effects on Measures of Utilization, Costs, and Quality

LIST OF FIGURES

- FIGURE 2.1: Overlap Between PBHCI Grantee Clinical Activities and Available Billing Data

LIST OF TABLES

- TABLE 2.1: Number of PBHCI Clinics in the States Chosen for Analysis by Cohort

- TABLE 2.2: Years of Data Included in Analyses by State

- TABLE 2.3: Numbers and Sample Sizes During the Pre-PBHCI Year for the Comparison Clinics Selected for Each PBHCI Cohort Within Each State

- TABLE 2.4: Comparison of Consumer-Years Enrolled in PBHCI with Consumer-Years Identified in Clinic Caseloads in Medicaid Claims Data, for PBHCI Implementation Years Included in Analyses

- TABLE 3.1: Sizes and Selected Characteristics of the PBHCI and Comparison Groups Samples

- TABLE 3.2: DD Estimates of the Impact of PBHCI on Utilization Measures

- TABLE 3.3: DD Estimates of the Impact of PBHCI on Medicaid Costs

- TABLE 3.4: Impacts of PBHCI on Quality of Care Measures Based on DD Model

- TABLE 3.5: Continuously Treated Sample as a Proportion of the Pre-PBHCI Sample

- TABLE 3.6: Analysis in Samples Restricted to Continuously Treated Consumers

- TABLE 3.7: Summary of DD Results Across States and Cohorts

- TABLE A.1: Utilization and Quality Measures Considered for Study, Drawn from New York State's PSYCKES or NQF

- TABLE B.1: Utilization and Quality Measures in the PBHCI and Comparison Clinics During the Pre-PBHCI and Post-PBHCI Period, State 1, Cohort 1

- TABLE B.2: DD Estimates of the Impact of PBHCI on Utilization and Quality Measures, State 1, Cohort 1

- TABLE B.3: DD Estimates of the Impact of PBHCI on Medicaid Costs, State 1, Cohort 1

- TABLE B.4: DD Results for Cost Measures, State 1, Cohort 1

- TABLE B.5: Utilization and Quality Measures in the PBHCI and Comparison Clinics During the Pre-PBHCI and Post-PBHCI Period, State 2, Cohort 1

- TABLE B.6: DD Results for Utilization and Quality Measures, State 2, Cohort 1

- TABLE B.7: DD Results for Cost Measures, State 2, Cohort 1

- TABLE B.8: DD Results for Cost Measures, State 2, Cohort 1

- TABLE B.9: Utilization and Quality Measures in the PBHCI and Comparison Clinics During the Pre-PBHCI And Post-PBHCI Period, State 2, Cohort 3

- TABLE B.10: Utilization and Quality Measures, State 2, Cohort 3

- TABLE B.11: DD Results for Cost Measures, State 2, Cohort 3

- TABLE B.12: DD Results for Cost Measures, State 2, Cohort 3

PREFACE

Since 2009, the U.S. Department of Health and Human Services (HHS) Substance Abuse and Mental Health Services Administration (SAMHSA) has supported provision of physical health (PH) care services by specialty behavioral health (BH) clinics through its Primary and Behavioral Health Care Integration (PBHCI) grant program. In 2014, the RAND Corporation completed an evaluation report examining the services supported by the PBHCI grants and their impact on health outcomes. The HHS Office of the Assistant Secretary for Planning and Evaluation (ASPE) contracted with RAND for this study to extend RAND's evaluation using Medicaid claims data to examine the impact of PBHCI on utilization of emergency department and inpatient care, costs of care, and quality of care. This report presents results of analyses of the impact of PBHCI grant programs on those outcomes in three states. The report is addressed to policymakers at ASPE and SAMHSA as well as the broader mental health policy and advocacy community.

PBHCI grants were designed to improve the overall wellness and PH status of people with serious mental illness (SMI) or co-occurring substance use disorders by supporting the integration of primary care and preventive PH services into community BH centers where individuals already receive care. From 2010 to 2013, RAND conducted a program evaluation of PBHCI, describing the structure, process, and outcomes for the first three cohorts of grantee programs (one cohort awarded in 2009 and two in 2010). Resulting reports describe wide variation in program structures, a range of implementation barriers, and some consumer-level improvements in PH outcomes (e.g., cholesterol, indicators of diabetes). The current study extends previous work by investigating the impact of PBHCI on consumers' health care utilization, total costs of care, and quality of care received using Medicaid claims data, which were not available in the previous evaluation. Specifically, we address the following research questions:

- What was the impact of PBHCI on utilization of emergency department and inpatient services?

  One of the major motivations for improving the quality of primary care services for adults with SMI is to shift care away from unnecessary or preventable emergency department visits or inpatient hospitalizations. The claims data allowed us to examine utilization of emergency department and inpatient services.

- What was the impact of PBHCI on costs of care to Medicaid?

  Improvements in care for PH conditions are likely to have complex cost implications for Medicaid. The claims data allowed us to examine the impact of PBHCI on the total costs of care per person and to break these costs down by the site of care to gain insight into how PBHCI affected each of these components of total costs of care.

- What was the impact of PBHCI on the quality of health care for PH conditions for the people treated in PBHCI grantee clinics?

  By improving primary care services, PBHCI was expected to improve care for PH conditions. Although the prior evaluation documented some of these improvements, the current study examined the impact of PBHCI on quality of care from a different perspective (Medicaid), which included documentation of preventive health services provided outside of each PBHCI clinic.

The research was conducted in RAND Health, a division of the RAND Corporation. A profile of RAND Health and abstracts of its publications can be found at http://www.rand.org/health.

ACRONYMS

The following acronyms are mentioned in this report and/or appendices.

| Acronym | Definition |
|---|---|
| ASPE | HHS Office of the Assistant Secretary for Planning and Evaluation |
| BH | Behavioral Health |
| CI | Confidence Interval |
| CMHC | Community Mental Health Center |
| CMS | HHS Centers for Medicare and Medicaid Services |
| DD | Difference-in-Differences |
| ED | Emergency Department |
| HbA1c | Glycated Hemoglobin |
| HHS | U.S. Department of Health and Human Services |
| IMPACT | Improving Mood--Promoting Access to Collaborative Treatment |
| IP | Inpatient |
| LL | Lower Limit of the 95% CI |
| MAX | Medicaid Analytic Extracts |
| NPI | National Provider Identifiers |
| NQF | National Quality Forum |
| OR | Odds Ratio |
| PBHCI | Primary and Behavioral Health Care Integration |
| PH | Physical Health |
| PSYCKES | Psychiatric Services and Clinical Knowledge Enhancement System |
| ResDAC | Research Data Assistance Center |
| RFA | Request for Applications |
| SAMHSA | HHS Substance Abuse and Mental Health Services Administration |
| SMI | Serious Mental Illness |
| TRAC | TRansformation ACcountability |
| UL | Upper Limit of the 95% CI |
| VA | U.S. Department of Veterans Affairs |
| Z | Z-score |

EXECUTIVE SUMMARY

This report describes an extension of the RAND Corporation's evaluation of the U.S. Department of Health and Human Services, Substance Abuse and Mental Health Services Administration's (SAMHSA's) Primary and Behavioral Health Care Integration (PBHCI) grants program. PBHCI grants are designed to improve the overall wellness and physical health (PH) status of people with serious mental illness (SMI) or co-occurring substance use disorders by supporting the integration of primary care and preventive PH services into community behavioral health (BH) centers where individuals already receive care. From 2010 to 2013, RAND conducted a program evaluation of PBHCI, describing the structure, process, and outcomes for the first three cohorts of grantee programs (awarded in 2009 and 2010). That evaluation found wide variation in program structures, a range of implementation barriers, and some consumer-level improvements in PH outcomes (e.g., cholesterol, diabetes management). The current study extends previous work by investigating the impact of PBHCI on consumers' health care utilization, total costs of care to Medicaid, and quality of care in three states.

Background

Adults with SMI suffer disproportionately from PH conditions. Compared with their non-SMI peers, adults with SMI are at increased risk for a range of acute and chronic diseases, including diabetes, cardiovascular disease, respiratory disease, cancer, and infectious disease (Jones et al., 2004; McGinty et al., 2012; Parks et al., 2006; SAMHSA, 2012). Life expectancy estimates for adults with SMI range from eight to 30 years lower than for the general population (Chang et al., 2011; Colton and Manderscheid, 2006; Saha, Chant, and McGrath, 2007; Walker, McGee, and Druss, 2015). Co-occurring medical and BH conditions are also disproportionately costly for public payers of health care, primarily Medicaid and Medicare (Kasper, Watts, and Lyons, 2010; Melek, Norris, and Paulus, 2014). These disparities have been attributed to modifiable risk factors such as smoking, alcohol and substance use, poor nutrition, lack of exercise, obesity, and high-risk sexual behaviors (Parks et al., 2006); side effects of psychotropic medications (Newcomer, 2007); housing instability and low socioeconomic status (Katon, 2003); and limited access to quality medical care (Lawrence and Kisely, 2010).

Fragmentation between the general medical and BH sectors--in terms of clinical practice, administration, and financing--is widely considered to be a significant contributor to the poor overall health outcomes associated with SMI (Druss, 2007; Horvitz-Lennon, Kilbourne, and Pincus, 2006; Committee on Crossing the Quality Chasm: Adaptation to Mental Health and Addictive Disorders, Board on Health Care Services, Institute of Medicine, 2006; Pincus et al., 2007; President's New Freedom Commission on Mental Health, 2003). As such, initiatives that promote medical and BH integration are expected to address the triple aims of health care reform: improved care experiences, improved health outcomes, and reduced per-capita costs (Katon and Unützer, 2013).

Improvements in care experience and health outcomes are expected to result from increased access to primary care and preventive medical services (because of service colocation or facilitated referrals) and increased collaboration and learning across BH and PH care providers (Alakeson, Frank, and Katz, 2010). Reductions in health care costs for adults with SMI are expected to result through decreases in hospitalizations and emergency department visits for preventable health conditions and fewer inappropriate visits to emergency departments (e.g., for primary care needs) (Nolte and Pitchforth, 2014). In practice, however, the effects of integration on health care costs for adults with SMI may be more complex. Given high levels of previously unmet medical needs, integrated care programs for adults with SMI may lead to increased visits to primary and specialty medical care, which can increase the cost of care particularly for consumers who had little to no contact with PH care services before.

In the current study, we examined the impact of PBHCI-funded integrated care for adults with SMI on health care utilization, total costs of care, and quality of care received using Medicaid claims data. Medicaid claims data provide a valuable perspective because they reflect a wide scope of services that (Medicaid-enrolled) individuals receive, which is particularly important given that adults with SMI may be transient (receiving services across multiple locations and health systems) and are likely to receive services across multiple levels of care (i.e., hospital, crisis, emergency, outpatient).

The prior RAND evaluation of PBHCI did not have information on utilization and costs of health care outside of the PBHCI grantee clinics. It also did not have information on utilization, costs, and quality among consumers treated in non-PBHCI clinics to whom the PBHCI enrollees could be compared. The current study was designed to address these limitations and, specifically, to investigate the following research questions:

- What was the impact of PBHCI on utilization of emergency department and inpatient services?

  One of the major motivations for improving the quality of primary care services for adults with SMI is to shift care away from unnecessary or preventable emergency department visits or inpatient hospitalizations. The claims data allowed us to examine utilization of emergency department and inpatient services and to distinguish utilization for PH conditions, where effects are anticipated, from utilization for BH conditions, which are not directly targeted by PBHCI.

- What was the impact of PBHCI on costs of care to Medicaid?

  Improvements in care for PH conditions are likely to have complex cost implications for Medicaid. The claims data allowed us to examine the impact of PBHCI on the total costs of care per person and to break these costs down by the site of care to gain insight into how PBHCI affects each of these components of total costs of care.

- What was the impact of PBHCI on the quality of health care for PH conditions for the people treated in PBHCI grantee clinics?

  By improving primary care services, PBHCI was expected to improve care for PH conditions. Although the prior evaluation documented some of these improvements, the current study examined the impact of PBHCI on quality of care from a different (Medicaid) perspective, which included documentation of services provided outside of each PBHCI clinic. These measures reflect not only the care that was directly provided but the programs' success connecting patients with care from external medical providers. The measures include services that were not provided by the PBHCI clinics, such as screening exams for colorectal cancer and follow-up after discharge from a hospitalization for mental illness.

Methods

This study used Medicaid claims data to estimate the impact of PBHCI grants on utilization, costs of care, and quality, using a difference-in-differences model. This model compared change in the outcomes associated with introduction of the PBHCI program into the grantee clinics with change over the same time period in a set of comparison clinics from the same state that did not receive PBHCI grants. The study was organized as a series of three state-level case studies. States were selected based on a number of state-specific characteristics (e.g., data availability, number of PBHCI grantees).
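The logic of a difference-in-differences comparison can be illustrated with a minimal sketch. This is the simple unadjusted form of the estimator, not the model specification used in this study, which is not detailed in this summary. The function name `dd_estimate` is hypothetical, and the proportions are invented for illustration and are not results from this study.

```python
def dd_estimate(pbhci_pre, pbhci_post, comp_pre, comp_post):
    """Unadjusted difference-in-differences on group means or proportions.

    Returns the change in the PBHCI group minus the change in the
    comparison group over the same period.
    """
    change_pbhci = pbhci_post - pbhci_pre
    change_comp = comp_post - comp_pre
    return change_pbhci - change_comp


# Hypothetical share of consumers with frequent ED or inpatient use.
# These values are illustrative assumptions only.
estimate = dd_estimate(
    pbhci_pre=0.20, pbhci_post=0.15,  # PBHCI clinics: 5-point drop
    comp_pre=0.20, comp_post=0.19,    # comparison clinics: 1-point drop
)
print(round(estimate, 2))  # negative value: larger drop in PBHCI clinics
```

A negative estimate indicates that the outcome fell more (or rose less) in the PBHCI clinics than in the comparison clinics, which is how a relative reduction should be read in the results that follow.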

A group of comparison clinics was selected to represent clinics within each state based on information in the claims data sets. Specifically, we examined four claims-based provider characteristics: pattern of utilization, proportion of claims with a primary diagnosis of BH condition, proportion of claims with a primary diagnosis of schizophrenia, and caseload size. For PBHCI and control clinics, all consumers with at least one visit to the clinic with a diagnosis of a SMI during a year were considered members of that clinic's caseload for that year and, thus, included in analyses.

Three types of outcomes were examined: measures of emergency department and inpatient utilization, costs of care, and quality indicators. Utilization measures included any emergency department or inpatient visits for BH or PH conditions and frequent emergency department or inpatient usage (defined as three or more emergency department visits for a BH condition, four or more emergency department visits or inpatient stays for a PH condition, and four or more emergency department visits or inpatient stays for any condition). Cost outcomes included both binary indicators of whether or not an individual used a type of service (e.g., an inpatient stay) and continuous measures of total costs of care (e.g., the total cost for inpatient stays among individuals with an inpatient stay). Quality of care measures included appropriately receiving services for diabetes monitoring, flu vaccine, cancer screenings, outpatient PH care, and follow-up after hospital discharge.
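The frequent-use thresholds above can be sketched as a small classification function. The class and field names are hypothetical. The sketch also assumes that emergency department visits and inpatient stays are counted together toward the four-or-more thresholds and that every visit is classified as either BH or PH; the summary does not confirm either point.

```python
from dataclasses import dataclass


@dataclass
class YearlyCounts:
    """Hypothetical per-consumer, per-year visit counts by primary diagnosis."""
    ed_bh: int  # ED visits for a BH condition
    ed_ph: int  # ED visits for a PH condition
    ip_bh: int  # inpatient stays for a BH condition
    ip_ph: int  # inpatient stays for a PH condition


def frequent_use_flags(c: YearlyCounts) -> dict:
    """Apply the frequent-use thresholds described in the text."""
    total_any = c.ed_bh + c.ed_ph + c.ip_bh + c.ip_ph
    return {
        # Three or more ED visits for a BH condition
        "frequent_ed_bh": c.ed_bh >= 3,
        # Four or more ED visits or inpatient stays for a PH condition
        # (assumed to be a combined count)
        "frequent_ed_ip_ph": c.ed_ph + c.ip_ph >= 4,
        # Four or more ED visits or inpatient stays for any condition
        "frequent_ed_ip_any": total_any >= 4,
    }


flags = frequent_use_flags(YearlyCounts(ed_bh=3, ed_ph=1, ip_bh=0, ip_ph=1))
print(flags)
```

In this example the consumer meets the BH emergency department threshold and the any-condition threshold but not the PH threshold, since only two of the five visits were for a PH condition.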

Results

Utilization, cost, and preventive services were examined in a total of five cohorts of PBHCI clinics: two cohorts in State 1, two cohorts in State 2, and one cohort in State 3. Evidence of PBHCI effects on utilization of emergency department and inpatient services was mixed across cohorts, but two clear patterns emerged with respect to frequent use of these services. First, in all five cohorts, PBHCI was associated with a reduction relative to comparison clinics in the proportion of consumers having four or more emergency department or inpatient visits, and this reduction reached statistical significance in three of the five cohorts. Second, the reduction in frequent utilization was specific to utilization for PH conditions. In three of the five cohorts, PBHCI was associated with a reduction relative to comparison clinics in the proportion of consumers having four or more emergency department or inpatient visits with a primary diagnosis of a PH condition.

For each cohort of clinics, we examined the impact of PBHCI on total costs of care to Medicaid and on costs for specific types of services--outpatient care, emergency department visits, and inpatient stays. PBHCI was associated with a reduction relative to comparison clinics in the total costs of care per consumer in three of the five cohorts. The impact of PBHCI on total cost was not statistically significant in the remaining two cohorts. Reductions in cost for specific types of care varied across cohorts. Statistically significant reductions were found in cost for outpatient services in two cohorts, in cost per user of emergency department services in one cohort, and in cost per user of inpatient services in another cohort. Countervailing increases were found for costs per user of inpatient services in one cohort and in two cohorts. PBHCI was associated with higher likelihood of having emergency department-related costs in one cohort and lower likelihood of having emergency department-related costs in another.

Few of the quality of care measures for primary care services were impacted by PBHCI, either positively or negatively. There did not appear to be a pattern to the effects that were found. An exception was a pattern of negative effects of PBHCI on quality indicators for State 3--that is, PBHCI clinic consumers were less likely to have received appropriate services, such as diabetes screenings, than comparison clinic consumers. It is important to note that consumers (in PBHCI or comparison clinics) may indeed have received such services despite these services not being reflected in the claims data, especially if grant funds were used to cover these services.

Conclusion

The current study on the impact of PBHCI on utilization of emergency department and inpatient services, total costs of care, and quality of care received for Medicaid beneficiaries yielded mixed results. We did find some evidence that PBHCI can be successful in producing positive changes in consumer health care utilization patterns. In particular, there was evidence that, in some of the groups of clinics studied, PBHCI reduced frequent utilization of emergency department and inpatient services, increased ambulatory follow-up after an emergency department or inpatient visit, and reduced total per person costs of care to Medicaid. While there was considerable variation in these effects across groups of clinics studied (across states and years awarded), there were no results in which PBHCI significantly increased total per person costs of care to Medicaid. Although our findings regarding the impact of PBHCI on quality of care did not yield positive results, Medicaid claims data may not reflect all services provided to consumers. In particular, care assessed by quality measures such as appropriate diabetes screening may have been paid with grant funds and thus may not be reflected in claims.

Results of this study should be interpreted in the light of the following limitations. First, the study was conducted in three of the 32 states that hosted PBHCI clinics during this time period. Given the variability in the results, even across these three states, it is reasonable to infer that there is wider variability in PBHCI impacts across the country. While the results demonstrate that PBHCI can have positive impacts on utilization and costs, they do not allow us to draw conclusions regarding the overall impact of the program on a national basis. Second, this study was conducted using entire clinic caseloads, while only a subset of individuals were actually enrolled in the PBHCI program. The apparent impact of the program may have been reduced by this more inclusive sample. Third, the PBHCI program requirements were being revised across the cohorts studied here and were further revised for the cohorts that came after. Therefore, these results should be interpreted as reflections of the impact of the early phase of the program. Later cohorts, which followed requirements revised in light of these early experiences, may have had different results.

Our findings raise a number of questions regarding the mechanisms of change that could be further investigated for lessons regarding continuing improvement in care. For example, although PBHCI impacts on total costs of care were similar across cohorts, the pathways through which those outcomes were achieved appear to be different in each cohort. This heterogeneity, which may result from different program implementation strategies or from different pre-PBHCI systems, deserves further investigation.

1. INTRODUCTION

Poor Health Outcomes and High Costs of Care for Adults with Serious Mental Illness

Compared with their peers without serious mental illness (SMI), adults with SMI are at increased risk for a range of acute and chronic diseases, including diabetes, cardiovascular disease, respiratory disease, and infectious disease (Jones et al., 2004; Parks et al., 2006; Substance Abuse and Mental Health Services Administration, 2012). Life-expectancy estimates for adults with SMI are 8-30 years lower than for the general population (Chang et al., 2011; Colton and Manderscheid, 2006), and much of this disparity has been attributed to modifiable risk factors such as smoking, alcohol and substance abuse, poor nutrition, lack of exercise, obesity, and high-risk sexual behaviors (Parks et al., 2006). Other contributing factors include side effects of psychotropic medications (Newcomer, 2007), housing instability and low socioeconomic status (Katon, 2003), and limited access to quality medical care (Lawrence and Kisely, 2010).

Co-occurring medical and behavioral health (BH) conditions are also disproportionately costly for public payers of health care, primarily Medicaid and Medicare (Kasper et al., 2010; Melek, Norris, and Paulus, 2014). For example, the most costly 5 percent of Medicaid beneficiaries account for approximately 50 percent of all Medicaid spending; and, among this top 5 percent, psychiatric illnesses are present among three of the five most prevalent diagnostic pairs (Kronick, Bella, and Gilmer, 2009). A recent economic analysis of Medicare, Medicaid, and commercial claims data found that per-beneficiary costs for treating a wide range of medical conditions were 2-3 times higher for beneficiaries with co-occurring diagnoses of SMI or substance use disorder compared with those without comorbid BH diagnoses (Melek, Norris, and Paulus, 2014). The majority of disproportionate spending was on physical health (PH) rather than BH treatment (Melek, Norris, and Paulus, 2014). This largely reflects a need for improved management of chronic PH conditions, since spending on a range of chronic PH conditions was considerably higher for adults with SMI compared with adults with no SMI diagnosis.

Integrated Care May Improve Health Care Quality and Overutilization of Intensive Services

The United States health care system's traditional separation of medical and BH sectors--in terms of clinical practice, administration, and financing--is widely considered to be a significant contributor to the comparatively poor overall health outcomes associated with mental illness (Druss, 2007; Horvitz-Lennon, Kilbourne, and Pincus, 2006; Committee on Crossing the Quality Chasm: Adaptation to Mental Health and Addictive Disorders, Board on Health Care Services, Institute of Medicine, 2006; Pincus et al., 2007; President's New Freedom Commission on Mental Health, 2003). As such, initiatives that promote medical and BH integration--for example, through colocation of primary and BH care services, clinical changes such as collaborative care teams, or payment reforms--are increasingly common health care reforms (Druss and Mauer, 2010; Katon and Unützer, 2013; Smith et al., 2012). Most integrated care efforts focus on bringing BH care into primary care settings (Butler et al., 2008); however, the reverse model, in which primary medical care is integrated into BH settings, may be a more effective approach for populations with SMI because these individuals are more likely to have established relationships with BH providers than medical providers (Alakeson, Frank, and Katz, 2010).

Integrated care has the potential to address the triple aims of health care reform for adults with SMI: improved care experiences, improved health outcomes, and reduced per-capita costs (Katon and Unützer, 2013). Improvements in care experience and health outcomes are expected to result from increased access to primary care and preventive medical services (because of service colocation or facilitated referrals) and increased collaboration and learning across BH and PH care providers (Alakeson, Frank, and Katz, 2010). The effects of integrated care on health care costs are discussed in the next section.

Effects of Integrated Care on Health Care Costs

Integrated care has been hypothesized to reduce health care costs for adults with SMI through decreases in hospitalizations and emergency department visits for preventable health conditions and fewer inappropriate visits to emergency departments (e.g., for primary care needs) (Nolte and Pitchforth, 2014). In practice, however, the effects of integration on health care costs for adults with SMI may be more complex. Given high levels of previously unmet medical needs, integrated care programs for adults with SMI may lead to increased visits to primary and specialty medical care, which can increase the cost of care particularly for consumers who had little to no contact with PH care services before. Relatedly, a clinic's potential for cost savings may not be realized until an integrated care program has matured such that the identification of new conditions in the clinic population has reached a steady state and the majority of service is managing previously identified health care needs. More generally, experts have warned that, although preventive care initiatives often improve population health and reduce some types of care costs, they are often not cost saving to the health system overall. Specifically, preventive interventions tend to add more to total medical costs than they save (Russell, 2009).

The vast majority of research on the effects of integrated care on health care costs has examined models that integrate BH into medical (typically primary care) settings. In a 2014 report prepared for the American Psychiatric Association, Melek and colleagues estimated the economic impact of integrated medical and BH care on commercial and public payers and posited that "typical cost savings estimates range from 5 percent to 10 percent of total health care costs over a two to four year period" (Melek, Norris, and Paulus, 2014, p. 19). The most robust evidence of such savings is found in studies of programs, such as the Improving Mood--Promoting Access to Collaborative Treatment (IMPACT) collaborative care model (Unützer et al., 2008), which focus on specifically defined consumer populations such as older adults with depression or comorbid depression and diabetes (Katon and Unützer, 2013). In a randomized, controlled trial, consumers assigned to IMPACT had approximately $3,300 lower total health care costs compared with consumers in usual care over a four-year period (one year of intervention and three years of follow-up) (Unützer et al., 2008). No significant cost savings were found after two years of follow-up (Katon et al., 2005; Unützer et al., 2008). On the other hand, in their review of the economic impact of integrated care, Nolte and Pitchforth (2014) reported that cost outcomes are mixed and inconsistent, and described the evidence-base for this topic as weak.

One of the few studies that examined the effects of integration based in mental health settings was conducted within the U.S. Department of Veterans Affairs (VA) health system, where individuals enrolled in a VA mental health clinic were randomized to receive primary care through an integrated care initiative located within the mental health clinic or through a VA general medicine clinic (Druss et al., 2001). In addition to improved PH outcomes and quality of care, consumers in the integrated care group were significantly more likely than controls to experience a primary care visit (91.5 percent versus 72.1 percent, p<0.01) and less likely to have an emergency department visit (11.9 percent versus 26.2 percent, p<0.05) in the year following randomization. No significant differences in total health care costs were observed.

An early analysis of Missouri's Health Home initiative, which includes management of chronic PH conditions through community mental health centers (CMHCs), compared hospital and emergency department utilization for CMHC Health Home enrollees in the year prior to enrollment and the year following enrollment (Department of Mental Health and MO Healthnet, 2013). Individuals with an SMI or serious emotional disorder were autoenrolled in the program if they had Medicaid paid claims data costs of at least $10,000 from July 2010 through August 2011 and if they had contact with a CMHC during that period. Researchers found a 12.8 percent reduction in hospital admission rates and an 82 percent reduction in emergency department use among persons continuously enrolled for 18 months; however, the analysis lacks utilization rates for a nonenrollee comparison group to determine to what degree these reductions can be attributed to the Health Home intervention.

In the current study, we extend the existing literature by describing BH-based integrated-care impacts on health care utilization, Medicaid costs, and quality for adults with SMI. Specifically, utilization, cost, and quality outcomes are compared for individuals served by a diverse set of U.S. Department of Health and Human Services (HHS) Substance Abuse and Mental Health Services Administration (SAMHSA) Primary and Behavioral Health Care Integration (PBHCI) grantee clinics and their matched controls in three demonstration states. PBHCI impacts are examined for grantees from three states that were providing care between 2010 and 2014 (or as limited by the availability of Medicaid data at the time of this research; additional detail about the study period for each state is provided in Table 2.2).

Primary and Behavioral Health Care Integration Grants

In 2009, SAMHSA initiated the PBHCI grants program to support the integration of primary care services into BH treatment settings for adults with SMI, with or without co-occurring substance use disorder.

Since 2009, PBHCI grants have been awarded yearly to annual cohorts of nonprofit community BH centers across the country.[1] Many programs involve partnerships with primary care clinics, such as Federally Qualified Health Centers; however, some BH grantees opt to hire medical providers directly into their agency (Scharf et al., 2014). As of September 2015, SAMHSA has awarded $162,392,053 in grant funds to 187 PBHCI grantees across eight cohorts. Grantees receive four years of funding ($400,000-$500,000 per year) to support their integrated care efforts, and funding is nonrenewable (i.e., an additional PBHCI grant cannot be used to extend a previously funded program, although a single grantee agency may receive several grants to support programs in different locations and serving a different consumer population). A portion of the grant may be used for infrastructure improvements, such as implementing electronic health records that can be shared across BH and medical providers. Grantees are required to regularly collect and submit program-level and consumer-level behavioral and PH data to SAMHSA.

Awardees from the first three cohorts (awarded in 2009 and 2010)[2] were required to provide four core program features: (1) screening and referral for PH conditions; (2) a registry/tracking system for consumer PH needs and outcomes; (3) care management; and (4) illness prevention and wellness support services. Optional program features included colocation of primary care providers (e.g., physicians, nurse practitioners) in the BH setting and embedding nurse care managers within clinical care teams.

Changes to the program over time have included several requirements for accountability, thoroughness in care, and sustainability. Specifically, the first update to the original PBHCI request for applications (RFA), issued in 2012, newly mandated that grantees provide on-site primary care services and medically necessary referrals and that grantees serve as their clients' health home. Grantees were also required to achieve health information technology Meaningful Use Stage One Standards and to submit a comprehensive sustainability plan. The most recent RFAs, issued in 2015, contain additional requirements, including the use of evidence-based programs in the areas of smoking cessation, nutrition and exercise, and chronic illness self-management, plus protocols for managing blood pressure control from the Million Hearts Campaign, among others.

In 2014, RAND released the first large-scale research study on PBHCI, including the first three cohorts of grantees (n=56, awarded in 2009 or 2010) (Scharf et al., 2014). Data showed wide variation in program structures, a range of implementation barriers, and some consumer-level improvements in PH outcomes (e.g., cholesterol, indicators of diabetes). Programs that offered primary care services on more days of the week and held regular clinical meetings involving both behavioral and primary care providers were more likely to provide integrated care (Scharf et al., 2014). Additional research targeting clinical processes and outcomes in later cohorts of grantees is now underway. This newly commissioned research has the capacity to provide more comprehensive and current information about the impact of PBHCI on consumer service use and clinical outcomes (among other aspects of the program). It does not, however, include an analysis of health care costs or potential cost savings.

Although early descriptions of the PBHCI mission focused on improving consumer health outcomes, the more recent 2015 RFAs listed "reducing/controlling per-capita cost of care" as an explicit goal of the program ("SAMHSA Grant Announcements," 2014). A recent study of two clinics supported by a single PBHCI grant provides the first (and, to date, only) analysis of potential health care savings from PBHCI (Krupski et al., 2016). The authors analyzed outpatient medical, inpatient hospital and emergency department claims, and billing data from the medical center with which both clinics were affiliated. Cost analyses are from the perspective of the health system that provided the claims. One of the PBHCI-supported clinics had a ten-year history of providing integrated services, while the other began providing integrated care upon receipt of the grant. Controls were propensity score matched consumers served by each clinic who were not enrolled in PBHCI. Difference-in-differences (DD) analysis showed that PBHCI consumers were more likely than controls to use outpatient medical services at both clinics. At the clinic with the established integrated care program, the percentage of PBHCI clients using outpatient medical services increased from 80 percent to 92 percent after one year of enrollment in PBHCI, while outpatient service use in the comparison group changed little during the same period (61 percent to 60 percent). At the newer integrated care clinic, the percentage of PBHCI clients using outpatient medical services increased from 39 percent to 76 percent, while the comparison group showed little change in outpatient medical services used (28 percent to 31 percent). At the more established clinic, PBHCI was also associated with a reduction in inpatient hospitalizations and a trend toward reduced inpatient hospital costs of $218 per member per month. PBHCI hospital-related cost savings were not observed at the newer clinic. No PBHCI effects were observed on emergency department use or costs at either clinic.

Estimating Health Care Quality, Service Utilization, and Cost of Care from Public Payer Claims

In this study, we investigated the impact of PBHCI on consumers' overall health care utilization, total costs, and care quality using Medicaid claims in multiple clinics across three states. Unlike Krupski et al.'s (2016) analysis of claims from within a local health system, we analyzed utilization, cost of care, and quality using Medicaid claims data regardless of where care was delivered, because these data provide the most readily available, detailed, and comprehensive records of service for the largest number of PBHCI grantees.

Medicaid claims data are useful for estimating enrollees' cost of care because numeric service codes are linked to standardized reimbursement rates within states. Unlike claims data from a health system or network of providers, a benefit of state and federal claims is that they reflect the total range of services that an individual receives, which is particularly important for a study of adults with SMI who may be transient (receiving services across multiple locations and health systems) and who are likely to receive services across multiple levels of care (i.e., hospital, crisis, emergency, and outpatient). Medicaid claims data are, in many ways, the best option for creating a complete picture of consumers' quality of care.

At the same time, Medicaid claims have several noteworthy limitations for the purpose of this project. For example, some PBHCI service types may not appear in Medicaid claims because they were not covered at the time that services were rendered (e.g., peer services) or because providers were unlikely to bill for those services for a variety of reasons, such as policy barriers (e.g., inability to bill for primary care and BH services on the same day) or lack of familiarity with public payer billing at the start of the grant. Medicaid claims also include bundled services and managed care, which do not include information about specific services rendered. For people enrolled in both Medicaid and Medicare (i.e., dual eligibles), Medicaid claims also only represent a portion of services received. Additionally, the individuals who were enrolled by the grantee clinics as PBHCI participants were not asked to consent to the linking of their personal information with external data sources. For this reason, the analysis in this project had to be conducted using the entire caseload of each clinic being studied. The analysis of the entire caseload may underestimate the impact of PBHCI on the consumers who were enrolled in the program.

Medicaid claims data can be obtained either directly from states or from the HHS Centers for Medicare and Medicaid Services (CMS). CMS's Medicaid data include data from all 50 states and are standardized across states. Limitations of CMS Medicaid data, however, are that the data take several years (about four) to become available and are processed and aggregated so as to obscure some details of services rendered. For example, CMS Medicaid data may include bundled payments in which individual procedures are obscured. CMS data also may include only high-level identifiers for the billing institution associated with each claim, thereby limiting opportunities for clinic-level analyses, when clinics are part of multiclinic institutions. Finally, CMS Medicaid data may also exclude claims for services that are covered in only a subset of states.

An advantage of obtaining Medicaid data directly from states is that the data are likely to include more detail, including clinic-level (instead of institution-level) identifiers and specific services included within payment bundles, allowing for more detailed analyses of the quality and appropriateness of care. State data are typically also available more rapidly than CMS data, thereby allowing for analysis of more recent cohorts of grantees. A particular challenge of working with state-provided Medicaid data is that each data set is unique (with uniquely defined services and service codes), having undergone differing levels of processing. This precludes applying the same analytic algorithm and making direct comparisons of results across states.

In this study, we used CMS Medicaid and state-provided Medicaid data to maximize our ability to characterize the extent, cost, and quality of services received.

Clinic-Level Differences

In addition to state-level differences in policy environments, there were considerable differences between participating clinics within states. Clinics within states were hospital affiliated and free standing; state, county, and independently run; and in rural, suburban, and urban areas. Depending on these factors, clinics within a state may have also experienced provider shortages (e.g., rural areas) or space shortages (e.g., in urban settings) in which to provide primary care. Some clinics may have had longstanding relationships with primary care partners at the beginning of the grant period, while others would have had their first experience providing primary care six months (or more) after receiving grant funds. While some clinics struggled to meet recruitment targets, others had demand that exceeded primary care capacity. Even within states, clinics also differed in their capacity to bill Medicaid for integrated care services; for example, some clinics began billing Medicaid right away for primary care services, but other clinics--particularly safety net clinics with less experience billing Medicaid--may have paid for a range of billable services directly from the grant until they created the administrative infrastructure for routine billing, depending on larger trends within the state. The diversity of clinics within and between states included in this analysis enriches and also challenges the conclusions to be drawn from it.

Research Questions

The PBHCI program aimed not only to provide services to consumers likely to be undertreated but to have a broad influence on their utilization of intensive health care services, the total cost of health care services, and the quality of the care they receive. The prior evaluation of PBHCI was not able to address these broader impacts because it did not have access to data that could have been used to examine them. In particular, the prior evaluation did not have information on utilization and cost of health care used by PBHCI enrollees outside of the PBHCI grantee clinic or on these outcomes among consumers treated in non-PBHCI clinics to whom the PBHCI enrollees could be compared. This project was designed to address this gap through the analysis of claims data. In particular, our goal was to address the impact that PBHCI had on utilization of emergency department and inpatient services, total costs of care, and quality of care for PH conditions. Specifically, we address the following questions:

- What was the impact of PBHCI on utilization of emergency department and inpatient services?

One of the major motivations for improving the quality of primary care services for adults with SMI is to shift care away from emergency departments and prevent inpatient hospitalizations. In particular, the goal is to avoid frequent use of these intensive health care services for PH conditions that are inappropriate and could potentially be avoided. (Frequent use may be defined as three or more claims for emergency department or inpatient services.) The claims data allow us to examine utilization of emergency department and inpatient services and to distinguish utilization for PH conditions from utilization for BH conditions, which is not directly targeted by PBHCI.

- What was the impact of PBHCI on costs of care to Medicaid?

Improvements in care for PH conditions are likely to have complex cost implications for Medicaid. On the one hand, improving primary care services is likely to identify unmet medical needs and thus increase costs of care. On the other hand, improving quality of outpatient care is likely to reduce total costs over time by preventing use of more expensive emergency department and inpatient services. The claims data allow us to examine the impact of PBHCI on the total costs of care per person and to break these costs down by the site of care to gain insight into how PBHCI affects each of these components of total costs of care.

- What was the impact of PBHCI on the quality of health care for PH conditions for the people treated in PBHCI grantee clinics?

By improving primary care services, PBHCI is expected to improve care for PH conditions. Although the prior evaluation documented some of these improvements, the current study examined the impact of PBHCI on quality of care from a different (Medicaid) perspective, which includes documentation of services provided outside of each PBHCI clinic. These measures reflect not only the care that was directly provided but also the program's success at integrating care with external medical providers.

2. ANALYTIC METHODS

This report uses claims data to estimate the impact of PBHCI grants on utilization, costs of care, and quality using a DD model. The DD model compares change in the outcomes associated with introduction of the PBHCI program into the grantee clinics with change over the same time period in a set of comparison clinics from the same state that did not receive PBHCI grants. The primary advantage of the DD model over other alternatives is its effective control for unrelated changes in the health care system that happened to be occurring at the same time and might have affected the outcomes even in the absence of the PBHCI grants (Howell, Conway, and Rajkumar, 2015).
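As a minimal sketch of the DD logic, assuming a single pre-period mean and a single implementation-period mean for each group, the estimate is the change in the PBHCI clinics minus the change in the comparison clinics. The function name and all numbers below are hypothetical illustrations; they are not results from this report and do not reproduce the statistical models described later in this chapter.

```python
def dd_estimate(pbhci_pre, pbhci_post, comp_pre, comp_post):
    """Difference-in-differences computed from four group means.

    Returns (change in PBHCI clinics) - (change in comparison clinics).
    """
    pbhci_change = pbhci_post - pbhci_pre
    comp_change = comp_post - comp_pre
    return pbhci_change - comp_change


# Hypothetical values only: share of each caseload with any emergency
# department visit, before and during PBHCI implementation.
estimate = dd_estimate(pbhci_pre=0.30, pbhci_post=0.27,
                       comp_pre=0.31, comp_post=0.30)
print(f"DD estimate: {estimate:+.3f}")
```

In this made-up example, the PBHCI clinics fall by three percentage points and the comparison clinics by one, so the DD estimate attributes a two percentage point reduction to the program. The one-point decline shared by both groups is treated as an unrelated background trend.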

The analysis is conducted at the clinic level, meaning that information on the characteristics and outcomes for all of the consumers seen in a clinic during a given year, what we call the clinic's caseload for that year, is compared between the PBHCI and comparison clinics. This is the most appropriate level of comparison because PBHCI is a clinic-level intervention. Grants were provided to clinics so that they could provide PH care services to their consumers regardless of whether they were established consumers or new to the clinic. However, it is also important to note that there is a large amount of turnover in clinic caseloads from year to year so that the individuals in the PBHCI and comparison caseloads are not the same during the pre-PBHCI and the PBHCI implementation periods. A supplementary analysis, which we describe at the end of this chapter, addresses this issue by focusing on the subset of consumers treated in both the pre-PBHCI and the PBHCI implementation periods.

This chapter on the methods used in the analysis is organized into seven sections: (1) Selection of States; (2) Data Sources; (3) Identification of PBHCI Clinics in the Claims Data; (4) Identification of Comparison Clinics; (5) Outcome Measures; (6) Statistical Analyses; and (7) Supplemental Analysis of Continuously Treated Consumers.

Selection of States

This project was organized as a series of state-level case studies. Analyses were grouped by state for two reasons. First, Medicaid is a state-administered program, and many regulations that affect integrated care for beneficiaries with SMI vary state to state. Conducting comparisons between PBHCI and comparison clinics within states helps control for these state-level regulatory variations. Second, Medicaid claims data are generally available on a state-by-state basis, whether they are obtained directly from the state itself or from the Federal Government's Research Data Assistance Center (ResDAC). However, it is important to keep in mind that the case study approach, which examines a small number of states in detail, is limited because the states cannot represent all PBHCI programs across the 50 states in which they have been implemented.

In consultation with SAMHSA and the HHS Office of the Assistant Secretary for Planning and Evaluation (ASPE), three states were selected for inclusion in this study based on two primary considerations. First, states with larger numbers of grantees in cohorts 1-5 were prioritized so that the study would have sufficient statistical power. Second, states with Medicaid claims data available from ResDAC or from the state Medicaid office were prioritized so that the analysis would cover as long a time period as possible.

Availability of Medicaid Claims Data

There is no standard mechanism through which researchers can obtain Medicaid claims data directly from states. Some states have offices that will provide data to outside entities but only for projects of particular interest to the state. Others will provide data to researchers for a wider range of projects but at considerable cost. Our team consulted broadly with ASPE, SAMHSA, peers at RAND, and other colleagues to clarify, for each of the eight candidate states, the process by which to obtain data, whether this project would be of interest to the state Medicaid office, whether we had a contact within the office to help advocate for the project, and the cost associated with processing the data request. As a result of this process, we learned that State 2 would only provide us with a pre-prepared data set that it had recently used for another purpose; this data set met some, but not all, of our analytic needs.

Utility of Medicaid Claims Data

We evaluated the potential utility of the eight candidate states' Medicaid data in two ways. First, we considered whether each data set would allow us to infer which consumers were served by PBHCI clinics by investigating whether the data had reliable clinic-level identifiers associated with individual claims. This approach, described in detail in the next section, was necessary, since we did not have Medicaid identification numbers (or other identifiers) for individuals served through PBHCI. We also considered whether PBHCI clinics were in close geographic proximity to one another, as clusters of clinics could potentially share consumers, services, and providers, making the identification of PBHCI consumers and services difficult to disentangle, or at least difficult to validate, using approaches other than clinic-level identifiers.

We also consulted with peers at RAND, ASPE, and other experts to determine whether services of interest (e.g., tobacco treatment, diabetic eye exam) would be identifiable in claims data, particularly in states with managed care programs, as specific service indicators (warranting a bundled payment) might not have been recorded or reported to the state. Some aggregate indicators in the pre-prepared State 2 claims data were problematic in this way.

Critically, we considered whether the state's Medicaid program covered sufficient individuals and services expected of PBHCI grantees and ruled out states where too few individuals or services would be represented in claims.

Policy and Implementation Issues Limiting the Generalizability of Findings

Finally, we considered the broader policy context of each of the eight candidate states that could affect our ability to draw conclusions about PBHCI impacts on consumers' quality and cost of care.

Based on these factors, and in close consultation with ASPE at all stages of this process, three states were selected for this analysis. PBHCI clinics are funded in annual cohorts, as shown in Table 2.1. Note that clinical services supported by PBHCI funds were scheduled to begin six months after the start of the grant (i.e., in February of the following calendar year).

Data Sources

Estimation of the impact of PBHCI programs requires data on services provided by the clinics and comparison clinics for the time period immediately preceding the beginning of the PBHCI program and time periods during which the program was being implemented. Medicaid claims data for these time periods were sought from two sources: the state Medicaid agencies in the study states and ResDAC, the office that provides standardized claims data sets to researchers under contract with CMS. These two sources are similar; the ResDAC data are in fact standardized data sets derived from the individual state claims data sets. ResDAC Medicaid data are known as Medicaid Analytic Extracts (MAX) files.

The reasons for preferring one of these two data sources over the other are related to timeliness and ease of use; data obtained directly from the state Medicaid agencies are timelier but much more difficult to use than the data from ResDAC. The state data are timelier because they are in a "rawer" form. The data are provided in the format in which they are used for internal state purposes. The data become available through ResDAC only after they have been submitted by the state and processed into a format that is standard across states. Additionally, the state data are much more complex to use because each state uses its own data systems, so knowledge regarding analysis of data from one state does not transfer to other states. Moreover, the state data must be obtained on a state-by-state basis through separate negotiations with each state.

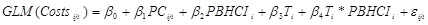

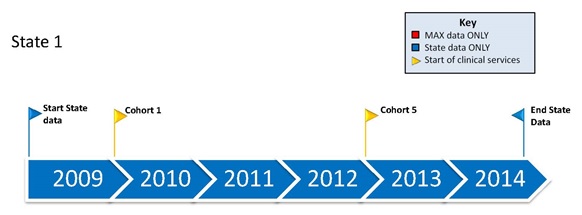

For each of the three states in this study, we obtained both state and CMS data sets. Figure 2.1 shows how the time periods covered by the state and CMS data sets correspond to the time periods during which the PBHCI programs were implemented for each state. For State 1, the state data set covered the entire period from 2009, which is one year prior to the beginning of PBHCI Cohort 1, through 2014, which is two years after the beginning of PBHCI Cohort 5. However, the ResDAC data for State 1 are extremely limited, with no data available since 2010. It is not clear that these data will ever be available in standardized MAX data sets. One important limitation of the State 1 state data is that they do not include information on inpatient stays with a primary diagnosis of a BH condition. For this reason, outcome measures that involve BH inpatient stays cannot be calculated for State 1.

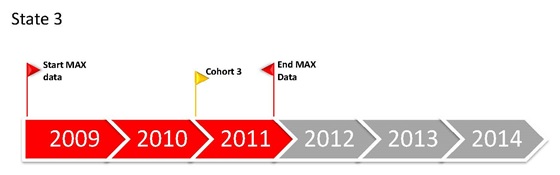

Data are available directly from State 2 for the period covering 2010-2012. However, the data set available from the state is aggregated at the consumer-year level, meaning that individual services and service dates cannot be identified. ResDAC data for State 2 cover the period from 2009 to 2012, enabling analysis of the clinics that began PBHCI services in 2010 and 2011. According to ResDAC, no data will be released for State 2 for 2013. As with the State 1 data mentioned above, it is not clear that these data will ever be available in standardized MAX data sets.

Data on State 3 are available from the state covering the period from 2009 through 2013 and from ResDAC covering 2009-2011. Using data from either source, we were able to examine the impact of the PBHCI program that began in 2011. The State 3 All-Payer Claims Database was obtained for the years 2009-2013. However, after substantial effort, we were not able to reliably identify Medicaid recipients in the state-provided data set and were thus unable to analyze the later PBHCI cohorts in the state as planned.

The years included in analyses of the impact of each cohort of clinic grantees within states are summarized in Table 2.2.

Identification of Primary and Behavioral Health Care Integration Clinics in the Claims Data

In the absence of consumer identifiers that could be used to select data on specific individuals who were enrolled in a PBHCI program, we used clinic identifiers to select the entire adult caseloads of clinics that received PBHCI grants. The clinics were identified using clinic and provider-level Medicaid identification numbers, known as National Provider Identifiers (NPIs), in the claims data sets. The NPIs were obtained directly from each clinic to ensure that all the providers who provided services to the PBHCI enrollees were included. All consumers with at least one visit to the clinic with a diagnosis of an SMI during a year were considered members of that clinic's caseload for that year.
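The caseload rule described above can be sketched as follows. The claim record layout (`consumer_id`, `npi`, `year`, `diagnosis`), the function name, and the placeholder diagnosis labels are assumptions made for illustration; actual claims files use state-specific fields and diagnosis codes, and the sketch omits the restriction to adults.

```python
from collections import defaultdict


def build_caseloads(claims, clinic_npis, smi_diagnoses):
    """Map each year to the set of consumers in a clinic's caseload.

    A consumer is included for a year if at least one claim in that year
    was billed under one of the clinic's NPIs with an SMI diagnosis.
    """
    caseloads = defaultdict(set)
    for claim in claims:
        if claim["npi"] in clinic_npis and claim["diagnosis"] in smi_diagnoses:
            caseloads[claim["year"]].add(claim["consumer_id"])
    return dict(caseloads)


# Hypothetical records; identifiers and diagnosis labels are placeholders.
claims = [
    {"consumer_id": "A", "npi": "111", "year": 2010, "diagnosis": "SMI_DX_1"},
    {"consumer_id": "A", "npi": "111", "year": 2010, "diagnosis": "OTHER_DX"},
    {"consumer_id": "B", "npi": "111", "year": 2010, "diagnosis": "OTHER_DX"},
    {"consumer_id": "C", "npi": "999", "year": 2010, "diagnosis": "SMI_DX_1"},
    {"consumer_id": "B", "npi": "111", "year": 2011, "diagnosis": "SMI_DX_2"},
]
caseloads = build_caseloads(claims, clinic_npis={"111"},
                            smi_diagnoses={"SMI_DX_1", "SMI_DX_2"})
print(caseloads)
```

In this example, consumer B is outside the 2010 caseload (no SMI diagnosis that year) but inside the 2011 caseload. Consumer C is never included because the claim was billed under a different NPI.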

Defining the PBHCI sample on the basis of clinic caseloads, rather than individual enrollee identification numbers, introduces a potential for misclassification of consumers who received no PBHCI services as having been exposed to the PBHCI program. Such misclassification will have the effect of reducing any impact of PBHCI because the change among enrollees would be spread across a larger group of individuals than were actually enrolled. This downward bias because of the sampling should be kept in mind while interpreting results.

On the other hand, there are also methodological advantages to defining the group exposed to PBHCI in this way. First, all of the consumers who received care at a PBHCI clinic were potentially affected by the program, whether or not they were enrolled. Thus, comparison at the clinic level--that is, between entire clinic caseloads--is an appropriate level of analysis. Second, it is likely that there were complex factors affecting selection of consumers into the program that would introduce bias when comparing a group of PBHCI enrollees with another group selected through claims data. For instance, PBHCI programs might have made an effort to enroll consumers with high PH needs. Alternately, consumers with high PH needs may have sought out the program. Given the coarseness of Medicaid claims data, adjusting effectively for these selection processes within clinic caseloads might not be possible.

Identification of Comparison Clinics

A group of comparison clinics was selected for each cohort of clinics within each state. The goal was to identify a set of comparable mental health clinics within each state based on information in the claims data sets. To that end, we examined four claims-based provider characteristics:

- Pattern of utilization: CMHCs are distinctive in that the people they treat tend to have frequent regular visits for psychotherapy, rehabilitation, or medication reviews. We characterized utilization patterns simply as the average number of visits per unique person over the study period.
- Proportion of claims with a primary diagnosis of a BH condition: We expect that of the claims submitted by any specialty mental health clinic, the overwhelming majority would have a BH condition as a primary diagnosis.
- Proportion of claims with a primary diagnosis of schizophrenia: Even among specialty mental health providers, there is likely to be variation in the extent to which they treat SMI in general and schizophrenia in particular.
- Caseload size: Similar-sized clinics are likely to respond similarly to factors affecting care delivery. Selecting based on size is also important to ensure adequate sample size within each comparison clinic.

Within each state, a group of comparison clinics was selected that matched the PBHCI clinics in that state with respect to the characteristics listed above. Table 2.3 summarizes the results of the selection process with respect to the number of clinics selected as comparisons for each group of PBHCI clinics. In State 1, we selected two groups of comparison clinics, one set of five clinics for the Cohort 1 clinic and another set of five clinics for the Cohort 5 clinics. In State 2, we selected one group of comparison clinics for both the Cohort 1 and Cohort 3 clinics, since the two cohorts were much closer in time, separated only by one year. Consumers with at least one claim from a clinic and with a diagnosis of SMI were counted as members of that clinic's caseload for that year. Any consumers who received services from both a PBHCI clinic and a comparison clinic were considered PBHCI clinic consumers.
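The four claims-based characteristics can be illustrated with a short sketch. It assumes, for simplicity, that each claim represents one visit and that each claim carries a hypothetical `primary_dx_group` label; the field names, labels, and function name are not taken from the claims data sets used in this study. The sketch only computes the characteristics and does not attempt to represent how comparison clinics were then matched.

```python
def clinic_profile(claims):
    """Summarize one clinic's claims by the four characteristics.

    Each claim is a dict with hypothetical keys 'consumer_id' and
    'primary_dx_group' ('BH', 'SCHIZOPHRENIA', or 'PH'). Schizophrenia
    is counted as a BH condition. Each claim is treated as one visit.
    """
    if not claims:
        raise ValueError("clinic has no claims")
    n_claims = len(claims)
    consumers = {c["consumer_id"] for c in claims}
    n_bh = sum(c["primary_dx_group"] in ("BH", "SCHIZOPHRENIA") for c in claims)
    n_schiz = sum(c["primary_dx_group"] == "SCHIZOPHRENIA" for c in claims)
    return {
        "visits_per_person": n_claims / len(consumers),
        "share_bh_primary": n_bh / n_claims,
        "share_schizophrenia_primary": n_schiz / n_claims,
        "caseload_size": len(consumers),
    }


# Hypothetical claims for a single clinic.
example_claims = [
    {"consumer_id": "A", "primary_dx_group": "SCHIZOPHRENIA"},
    {"consumer_id": "A", "primary_dx_group": "BH"},
    {"consumer_id": "B", "primary_dx_group": "BH"},
    {"consumer_id": "B", "primary_dx_group": "PH"},
]
profile = clinic_profile(example_claims)
print(profile)
```

Profiles computed this way for every candidate clinic in a state could then be compared against the profiles of the PBHCI clinics to identify similar clinics.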

As expected, the numbers of individuals identified as members of the clinic caseloads in the Medicaid claims were larger than the numbers of individuals enrolled in the PBHCI programs (Table 2.4). This was expected because the PBHCI clinics targeted only a subset of their consumers for enrollment and because they were not successful in enrolling all of the consumers they targeted.
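The ratios reported in Table 2.4 follow directly from the two counts in each row. The short check below reproduces them from the table's own consumer-year figures; only the variable names are introduced here.

```python
# Consumer-years from Table 2.4: (enrolled in PBHCI, identified in Medicaid claims).
table_2_4 = {
    "State 1, Cohort 1": (1079, 2379),
    "State 1, Cohort 5": (526, 1693),
    "State 2, Cohort 1": (1067, 2447),
    "State 2, Cohort 3": (1753, 2322),
    "State 3, Cohort 3": (168, 3216),
}

# Ratio of PBHCI enrollment to the claims-based caseload, rounded as in the table.
ratios = {
    cohort: round(pbhci / medicaid, 2)
    for cohort, (pbhci, medicaid) in table_2_4.items()
}
```

The ratios range from 0.05 to 0.75, which shows how much the share of the claims-based caseload enrolled in PBHCI varied across cohorts.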

Outcome Measures

Three types of outcomes were examined: measures of emergency department and inpatient utilization, costs of care, and quality indicators.

Several considerations went into the selection of utilization and quality measures. (See Appendix A for a more comprehensive list of measures considered for study inclusion and sources of measures.) First, we gave priority to measures that have been approved by the National Quality Forum (NQF), which serves as a clearinghouse for carefully specified and vetted measures of the quality of health care. We also considered measures developed by the New York State Office of Mental Health's Psychiatric Services and Clinical Knowledge Enhancement System (PSYCKES), for which detailed specifications for Medicaid data are available (PSYCKES Medicaid, undated). Second, measures were selected to take advantage of the distinct strengths of the claims data by measuring care provided outside of the PBHCI clinic. The selected measures vary to some extent in this regard. PBHCI grantees were more likely to provide diabetes monitoring directly and to rely on referrals for cancer screenings.

Utilization of Emergency Department and Inpatient Services

The utilization measures used in the study are as follows.

- Emergency department visit for a BH condition (BH emergency department visit).

- Emergency department visit for a PH condition (PH emergency department visit).

- Inpatient stay for a BH condition (not calculated for State 1) (BH inpatient stay).

- Inpatient stay for a PH condition (PH inpatient stay).

- Three or more emergency department visits for a BH condition (three or more BH emergency department visits).

- Four or more emergency department visits or inpatient stays for a PH condition (four or more PH emergency department/inpatient).

- Four or more emergency department visits or inpatient stays for any condition (four or more any emergency department/inpatient).

As noted, because the State 1 Medicaid data set lacked data on inpatient stays with a primary diagnosis of a BH condition, some measures could not be calculated for the State 1 cohorts.
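The utilization measures listed above are binary indicators that could be derived from annual event counts, as in the sketch below. The function and key names are hypothetical. Two points are assumptions: "any condition" is taken to mean BH plus PH events, and the "any condition" measure is omitted whenever BH inpatient stays are unavailable (the report states only that "some measures" could not be calculated for State 1).

```python
def utilization_flags(bh_ed, ph_ed, bh_ip, ph_ip):
    """Binary utilization indicators for one consumer-year.

    Arguments are annual counts of emergency department (ed) visits and
    inpatient (ip) stays for BH and PH conditions. Pass bh_ip=None when
    BH inpatient stays are not available in the data.
    """
    flags = {
        "bh_ed_visit": bh_ed >= 1,
        "ph_ed_visit": ph_ed >= 1,
        "ph_inpatient_stay": ph_ip >= 1,
        "three_plus_bh_ed": bh_ed >= 3,
        "four_plus_ph_ed_or_ip": ph_ed + ph_ip >= 4,
    }
    if bh_ip is not None:
        flags["bh_inpatient_stay"] = bh_ip >= 1
        # Assumption: "any condition" combines BH and PH events.
        flags["four_plus_any_ed_or_ip"] = bh_ed + ph_ed + bh_ip + ph_ip >= 4
    return flags


with_bh_inpatient = utilization_flags(bh_ed=1, ph_ed=2, bh_ip=1, ph_ip=2)
without_bh_inpatient = utilization_flags(bh_ed=3, ph_ed=1, bh_ip=None, ph_ip=0)
```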

Costs

Costs of care were calculated directly from the claims using information on actual payments by Medicaid. These costs exclude copayments paid by recipients of care or payments made by other agencies or programs such as Medicare. Four cost outcomes were defined: (1) total costs; (2) costs for outpatient services; (3) costs for emergency department services; and (4) costs for inpatient services.
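A minimal sketch of the cost definitions, assuming a hypothetical claim-line layout: only the Medicaid payment is summed, while copayments and payments by other payers are ignored. Whether total costs include service categories beyond the three listed (shown here as a "pharmacy" line) is an assumption made for the example.

```python
from collections import defaultdict

SETTINGS = ("outpatient", "ed", "inpatient")

# Hypothetical claim lines:
# (person_id, setting, medicaid_paid, copay, other_payer_paid)
claim_lines = [
    ("p1", "outpatient", 120.0, 3.0, 0.0),
    ("p1", "ed", 400.0, 0.0, 0.0),
    ("p1", "inpatient", 2500.0, 0.0, 800.0),
    ("p2", "outpatient", 80.0, 3.0, 0.0),
    ("p2", "pharmacy", 45.0, 1.0, 0.0),  # assumed to count toward total only
]


def annual_costs(claim_lines):
    """Sum Medicaid payments per person into the four cost outcomes."""
    costs = defaultdict(
        lambda: {"total": 0.0, "outpatient": 0.0, "ed": 0.0, "inpatient": 0.0}
    )
    for person_id, setting, medicaid_paid, _copay, _other_payer in claim_lines:
        # Copayments and other payers' amounts are deliberately excluded.
        costs[person_id]["total"] += medicaid_paid
        if setting in SETTINGS:
            costs[person_id][setting] += medicaid_paid
    return dict(costs)


costs = annual_costs(claim_lines)
```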

Quality Measures

The quality measures were selected from among well-described claims-based measures to assess receipt of preventive or integrated care services. The measures do not assess overall quality of care delivered at the PBHCI or comparison clinics. Rather, they are meant to reflect the potential impact of PBHCI on care that consumers would have received at outside general medical clinics. To receive these services, consumers would need not only to be referred to a provider but also to follow through with the referral and receive the service from the provider. Because these measures assess care that depends on follow-through from both consumers and external providers, performance on these measures constitutes a stringent test of the impact of PBHCI. The following quality measures were examined:

- Diabetes monitoring: the proportion of individuals with a diagnosis of diabetes who have had at least one claim for an HbA1c test during the year.

- Flu vaccine: the proportion of all individuals in the caseload with a claim for a flu vaccination during the year.

- Breast cancer screening: the proportion of women aged 50-74 years with a claim for a mammogram during the year.

- Cervical cancer screening: the proportion of women aged 24-64 years with a claim for cervical cancer screening during the year.

- Colorectal cancer screening: the proportion of individuals aged 51-75 years with a claim for a colorectal cancer screening procedure during the year.

- Any outpatient PH visit: the proportion of all individuals with one or more outpatient claims with a primary diagnosis of a PH condition.

- Follow-up after hospital discharge (for mental illness): the proportion of individuals discharged from an inpatient stay (for mental illness) with a BH visit in the subsequent 30 days.
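Each quality measure above is a proportion with its own eligible population. The sketch below shows one way such rates could be computed; the consumer records, field names, and service labels are hypothetical, and the handling of the 30-day window boundaries (days 1 through 30 after discharge) is an assumption.

```python
from datetime import date


def service_rate(consumers, is_eligible, service):
    """Share of eligible consumers with a claim for the service in the year."""
    eligible = [c for c in consumers if is_eligible(c)]
    if not eligible:
        return None  # measure undefined when no one is eligible
    return sum(service in c["services"] for c in eligible) / len(eligible)


def has_followup(discharge, bh_visit_dates, window_days=30):
    """True if any BH visit falls within the window after discharge."""
    return any(0 < (v - discharge).days <= window_days for v in bh_visit_dates)


# Hypothetical consumer-year records.
consumers = [
    {"sex": "F", "age": 55, "diabetes": False, "services": {"mammogram"}},
    {"sex": "F", "age": 60, "diabetes": True, "services": {"hba1c"}},
    {"sex": "F", "age": 40, "diabetes": False, "services": set()},
    {"sex": "M", "age": 58, "diabetes": True, "services": {"flu_vaccine"}},
]

breast_screening = service_rate(
    consumers, lambda c: c["sex"] == "F" and 50 <= c["age"] <= 74, "mammogram"
)
diabetes_monitoring = service_rate(consumers, lambda c: c["diabetes"], "hba1c")
flu_vaccine = service_rate(consumers, lambda c: True, "flu_vaccine")
```

Passing the eligibility rule as a function keeps the denominator explicit for each measure, which matters because the measures apply to different age and sex groups.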

Statistical Analyses

Difference-in-Differences Approach

Our primary approach is to estimate the impact of the PBHCI program using a DD model. This model compares the change between the pre-PBHCI and PBHCI periods among PBHCI consumers with the corresponding change among comparison consumers. DD estimation has become increasingly popular in the empirical literature on the effects of policy interventions (Abadie, 2005; Bertrand, Duflo, and Mullainathan, 2001; Card and Krueger, 2000; Conley and Taber, 2005). Its appeal comes from its simplicity and from its potential to mitigate two kinds of bias: bias in the comparison between the PBHCI consumers and the comparison group that results from permanent differences between those groups, and bias in the pre-post comparison of PBHCI consumers that results from secular trends unrelated to the PBHCI intervention (Imbens and Wooldridge, 2007). The DD model is recommended by CMS for longitudinal evaluations (Howell, Conway, and Rajkumar, 2015).
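In its simplest unadjusted form, the DD estimate is the change in the PBHCI group minus the change in the comparison group. The sketch below illustrates the arithmetic with made-up rates; the numbers are purely hypothetical and are not results from this study, and the study's actual estimates come from regression models rather than raw differences.

```python
def did_estimate(pre_pbhci, post_pbhci, pre_comparison, post_comparison):
    """Unadjusted difference-in-differences of four group-period means."""
    change_pbhci = post_pbhci - pre_pbhci
    change_comparison = post_comparison - pre_comparison
    return change_pbhci - change_comparison


# Hypothetical screening rates, for illustration only.
effect = did_estimate(
    pre_pbhci=0.30, post_pbhci=0.36, pre_comparison=0.28, post_comparison=0.31
)
```

Here the PBHCI group improves by 6 percentage points and the comparison group by 3, so the DD estimate is about 3 percentage points. A permanent gap between the groups (0.30 versus 0.28 at baseline) and a shared upward trend both cancel out of the estimate.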

Model Specification

We examined three types of outcome measures: utilization of emergency department and inpatient hospital services, costs of care, and health care quality. The utilization and quality measures are binary indicators--for example, whether or not an individual had four or more emergency department visits or inpatient stays for a PH condition. The cost outcomes include both binary indicators of whether or not an individual used a type of service (e.g., an inpatient stay) and continuous measures of total costs of care (e.g., the total cost for inpatient stays among individuals with an inpatient stay). Cost is calculated as a continuous variable indicating the amount paid for services by Medicaid.

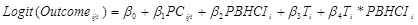

For dichotomous variables, we estimated a series of logistic models similar to (M1).

(M1)

where the dependent variable indicates the value of the study outcome for the ith consumer in the jth state at time t (t=0 for pre-PBHCI and 1 for PBHCI period), and

- PC is a vector for the consumer factors;

- PBHCI is a dichotomous variable to indicate PBHCI and comparison groups;

- T is a dichotomous variable to indicate the pre-PBHCI and PBHCI periods;

- T*PBHCI is an interaction between PBHCI program and time, whose coefficient, β4, indicates the DD effect of PBHCI program implementation.
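A logistic DD specification consistent with the definitions above can be written as follows. This is a sketch, not a reproduction of the report's equation: the symbol Y for the outcome, the subscripts on each term, and the numbering of β0 through β3 in the order the terms are listed are assumptions; only β4 as the interaction coefficient is stated in the text.

```latex
\operatorname{logit}\,\Pr\left(Y_{ijt} = 1\right)
  = \beta_0
  + \beta_1\, PC_{ijt}
  + \beta_2\, PBHCI_{ij}
  + \beta_3\, T_{t}
  + \beta_4 \left(T_{t} \times PBHCI_{ij}\right)
```

Under this form, β4 is expressed on the log-odds scale, so exp(β4) would be read as the ratio of the pre-to-post change in odds among PBHCI consumers to the corresponding change among comparison consumers.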

Because individuals are grouped within clinic caseloads, we specified the model with standard errors clustered at the clinic level to account for within-group correlation.

To examine health care costs, we note an important difference across types of costs. While every consumer in our study sample has annual outpatient costs and total annual costs, only a small proportion of them have incurred annual costs of emergency department or inpatient care. To analyze either annual outpatient costs or total annual costs, we chose to use generalized linear models with Gaussian distribution and log link following the recommendations by Manning and Mullahy (2001) as shown in M2:

(M2)

where εijt is the error term, and the other variables are the same as in (M1). As in (M1), (M2) was specified with standard errors clustered at the clinic level.
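For a generalized linear model with Gaussian distribution and log link, a form consistent with the description of (M2) would be the following. As with (M1), the outcome symbol, the subscripts, and the numbering of β0 through β3 are assumptions made for illustration.

```latex
Cost_{ijt}
  = \exp\!\left(
      \beta_0
      + \beta_1\, PC_{ijt}
      + \beta_2\, PBHCI_{ij}
      + \beta_3\, T_{t}
      + \beta_4 \left(T_{t} \times PBHCI_{ij}\right)
    \right)
  + \varepsilon_{ijt}
```

With a log link, the covariates act multiplicatively on expected cost, so exp(β4) would be read as a ratio of cost ratios rather than as a dollar difference.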

For either annual emergency department costs or annual inpatient costs, we chose to estimate a two-part model with the first part examining the probability of using emergency department (or inpatient) care, and the second part examining the emergency department (or inpatient) costs among the emergency department (or inpatient) users. The first part is similar to (M1), while the second part is specified as generalized linear models with Poisson distribution with log link as recommended by Manning and Mullahy (2001).
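The logic of a two-part model can be illustrated by combining the two linear predictors into an unconditional expected cost. The report describes estimating the two parts; combining them in this way is a standard property of two-part models and is shown only as an illustration, with hypothetical function and argument names.

```python
import math


def expected_cost(xb_use, xb_cost):
    """Unconditional expected cost from a two-part model.

    xb_use:  linear predictor of the logistic model for any use (part 1).
    xb_cost: linear predictor of the log-link cost model among users (part 2).
    """
    p_use = 1.0 / (1.0 + math.exp(-xb_use))  # inverse logit
    cost_if_used = math.exp(xb_cost)         # inverse log link
    return p_use * cost_if_used


# A consumer with a 50 percent chance of any inpatient stay and an expected
# cost of 1,000 given a stay has an unconditional expected cost of about 500.
example = expected_cost(xb_use=0.0, xb_cost=math.log(1000.0))
```

Separating the two parts accommodates the large share of consumers with zero emergency department or inpatient costs, which a single cost model would fit poorly.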

Supplemental Analysis of Continuously Treated Consumers

The primary analysis was conducted using samples including all individuals seen at each clinic--that is, the entire clinic caseload for each year of the study. This approach allows for movement of individual consumers in and out of each caseload over time for both the PBHCI and comparison clinics. In most cases, the movement in and out of clinic caseloads was quite large: of the total number of consumers seen in the clinics during the pre-PBHCI and first post-PBHCI years, about 50 percent were seen in both years. While defining the sample in this way provides the best test of the impact of PBHCI, which was implemented at the clinic level and targeted all consumers seen at the clinic, the approach also has limitations that should be acknowledged. In particular, many of the consumers seen in the PBHCI period were new consumers with limited PBHCI exposure. In addition, allowing movement into the clinic caseload introduced the possibility of confounding the DD model because the new consumers may have differed from the prior consumers. To address this limitation, we also conducted analyses focusing on the subset of continuous consumers--that is, those who were seen in the same clinic during both the pre-PBHCI and the post-PBHCI years.
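The continuous-consumer subset amounts to the intersection of a clinic's pre-PBHCI and post-PBHCI caseloads. The sketch below uses made-up consumer identifiers; treating the overlap share as consumers seen in both years divided by consumers seen in either year is an assumption about how the "about 50 percent" figure was computed.

```python
# Hypothetical caseloads for one clinic (sets of consumer identifiers).
pre_year = {"p1", "p2", "p3", "p4"}
post_year = {"p3", "p4", "p5", "p6"}

# Continuous consumers: seen at the clinic in both periods.
continuous = pre_year & post_year

# Share of all consumers seen in either year who were seen in both years.
seen_either = pre_year | post_year
share_both = len(continuous) / len(seen_either)
```

In this toy example, two of the six consumers seen in either year appear in both, so the supplemental analysis would retain only those two.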

FIGURE 2.1. Overlap Between PBHCI Grantee Clinical Activities and Available Billing Data

TABLE 2.1. Number of PBHCI Clinics in the States Chosen for Analysis by Cohort

| Cohort | Funding Year | Clinical Service Start | States 1, 2, and 3 |
|---|---|---|---|
| 1 | 2009 | 2010 | 2 |
| 2 and 3 | 2010 | 2011 | 3 |
| 4 | 2011 | 2012 | |
| 5 | 2012 | 2013 | 8a |
| 6 | 2013 | 2014 | |
| 7 | 2014 | 2015 | |
| Total | | | 13 |

TABLE 2.2. Years of Data Included in Analyses of State

| State | Data Source | Time Period: Pre | Time Period: Post |
|---|---|---|---|
| State 1 | State | 2009 | 2010-2013 |
| State 1 | State | 2012 | 2013 |
| State 2 | ResDAC | 2009 | 2010-2012 |
| State 2 | ResDAC | 2010 | 2011-2012 |
| State 3 | ResDAC | 2010 | 2011 |

TABLE 2.3. Numbers and Sample Sizes During the Pre-PBHCI Year for the Comparison Clinics Selected for Each PBHCI Cohort Within Each State

| State | Data Source | PBHCI Cohort | Comparison Clinics | Pre-PBHCI Year Sample |
|---|---|---|---|---|
| 1 | State | 1 | 5 | 1,470 |
| 1 | State | 5 | 5 | 1,586 |
| 2 | ResDAC | 1, 3 | 4 | 1,143 |
| 3 | ResDAC | 3 | 4 | 921 |

TABLE 2.4. Comparison of Consumer-Yearsa Enrolled in PBHCI with Consumer-Years Identified in Clinic Caseloads in Medicaid Claims Data, for PBHCI Implementation Years Included in Analyses

| Cohort | Year(s) Included | PBHCIb | Medicaid | Ratio of PBHCI to Medicaid |
|---|---|---|---|---|
| State 1, Cohort 1 | 2010-2013 | 1,079 | 2,379 | 0.45 |
| State 1, Cohort 5 | 2013-2013 | 526 | 1,693 | 0.31 |
| State 2, Cohort 1 | 2010-2012 | 1,067 | 2,447 | 0.44 |
| State 2, Cohort 3 | 2011-2012 | 1,753 | 2,322 | 0.75 |
| State 3, Cohort 3 | 2011 | 168 | 3,216 | 0.05 |

3. RESULTS

This chapter presents the results of the DD analyses of the impact of the PBHCI programs on the outcomes described earlier. The analyses were conducted by state and, within states, by PBHCI cohort. This chapter reports results in which the data from the PBHCI implementation period are aggregated. Appendix B shows detailed year-by-year breakdowns of the results.

Sample Descriptions

Table 3.1 describes the samples for each of the PBHCI cohorts and comparison groups. The sample sizes include all consumers in the analysis: those who received services during the pre-PBHCI period as well as those who received services during the PBHCI implementation period. The PBHCI sample sizes range from a low of 2,675 for Cohort 1 in State 2 to a high of 5,187 for Cohort 3 in State 3. The sample sizes are generally larger for the comparison groups, with the exception of Cohort 5 in State 1, where the comparison group sample is slightly smaller than the PBHCI sample. The total sample across all cohorts and states is 57,365, 70 percent of whom were seen in comparison group clinics.

There are differences between the PBHCI and comparison group samples across sex, age, and diagnosis as indicated by the figures in bold in Table 3.1. Differences are relatively minor with respect to sex; differences reach statistical significance in two cases (Cohort 1 in State 2 and Cohort 3 in State 3), but the differences are small in magnitude. In contrast, differences in age distribution between PBHCI and the comparison group are found for all cohorts. However, the nature of the age differences is not consistent. The PBHCI cohort is more likely to be in the lower age category than the comparison group in three of the five cohorts. The comparison of the PBHCI and comparison groups in diagnosis was conducted with respect to the proportion of the sample with a diagnosis of schizophrenia (versus other SMI diagnosis). These proportions were nearly identical in two of the five cohorts but were substantially higher in the PBHCI sample in two of the cohorts and higher in the comparison sample in one of the cohorts.

In four of the five comparisons, the PBHCI clinic caseloads included more consumers who were Black or Hispanic and fewer who were White. The exception was the State 3 Cohort 3 clinics, which were very similar in racial/ethnic composition. Race/ethnicity data should be interpreted with caution because of the large number of consumers classified as "other ethnicity," a category that may include misclassified or missing information. The two State 2 cohorts had nearly identical race/ethnicity distributions for both PBHCI and comparison clinic caseloads.

Utilization Measures