A Framework for Evaluating Quality Transparency Initiatives in Health Care

Final Report

Prepared by:

Ha T. Tu
Johanna R. Lauer

April 15, 2008

Prepared for:

Office of the Assistant Secretary for Planning and Evaluation (ASPE)
U.S. Department of Health and Human Services (HHS)

This report is available on the Internet at: http://aspe.hhs.gov/health/reports/08/quality/report.html

TABLE OF CONTENTS

- PROJECT DESCRIPTION
- QUALITY TRANSPARENCY LITERATURE REVIEW
- PROGRAM EVALUATION FRAMEWORK
- PROGRAM EVALUATION FRAMEWORK AS APPLIED TO QUALITY TRANSPARENCY
- CASE STUDY 1: CALHOSPITALCOMPARE
- CASE STUDY 2: MASSACHUSETTS HEALTH QUALITY PARTNERS
- KEY TAKEAWAY POINTS
- APPENDIX 1: INTERVIEW RESPONDENTS
- APPENDIX 2: KEY SOURCES
- ENDNOTES

LIST OF TABLES AND FIGURES

- FIGURE 1: GENERIC LOGIC MODEL
- TABLE 1: BENNETT’S HIERARCHY
- FIGURE 2: GENERIC QUALITY TRANSPARENCY LOGIC MODEL
- FIGURE 3: GENERIC QUALITY TRANSPARENCY LOGIC MODEL CONTINUED
- TABLE 2: GENERIC QUALITY TRANSPARENCY EVALUATION QUESTIONS
- TABLE 3: GENERIC QUALITY TRANSPARENCY EVALUATION METHODS

PROJECT DESCRIPTION

Research Objectives: To develop a conceptual framework for evaluating the impact of health care quality transparency initiatives. In developing this framework, three major research questions are addressed:

- What are the characteristics and processes of a program evaluation that will result in an effective and unbiased evaluation of a quality transparency initiative?

- What are the most important substantive issues (evaluation questions) that a program evaluator must address in assessing the impact of a quality transparency initiative?

- For those evaluation questions identified as being the most critical, what are the most feasible and cost-effective evaluation methods for measuring performance of the initiative?

Background and Brief Description

Unlike price transparency initiatives in health care, most of which have been launched only within the past few years, some quality reporting programs have been available to the public since the late 1980s. An assessment of early quality transparency programs suggests very limited impact, with consumers and purchasers rarely seeking out the relevant quality information and often not understanding or trusting it.[1]

Recently, quality transparency initiatives have not only increased in number but also gained prominence and visibility for several reasons. First, the Internet has enabled more quality information to be disseminated to broader audiences in a more timely, efficient and low-cost manner. In addition, advocates of health care consumerism have increasingly pushed for the public dissemination of provider quality data in tandem with price data to enable consumers to assess and shop for health services based on perceived value (a price-quality combination preferred by the consumer). The federal government has supported these efforts, with President Bush issuing an executive order in August 2006 requiring each federal agency that administers or sponsors a health care program to make provider quality information available to program beneficiaries or enrollees.[2]

While both private and public, and voluntary and mandatory, quality reporting programs have proliferated, little is known about what impact, if any, these initiatives have had on consumer awareness and shopping behavior or provider quality standards and competition. Indeed, there has been little discussion about how to apply a standardized evaluation framework for assessing the success or impact of quality transparency initiatives, which vary widely in stated objectives, as well as scope, resources and implementation approaches.

The aim of this project is to create a framework for assessing the impact of quality transparency initiatives in health care. This analysis is a companion to a study that the Center for Studying Health System Change (HSC) conducted for ASPE in 2007, A Framework for Evaluating Price Transparency Initiatives in Health Care. In this study, we draw on many of the findings reported in the price transparency analysis. In developing the framework for this study, we reviewed the literature on program evaluation and quality transparency and discussed the topic with experts (see Appendices 1 and 2).

This report begins with an overview of the literature on quality transparency. As in our previous report on price transparency, this section is followed by a discussion of the framework and practice of program evaluation, which incorporates a literature review, insights offered by program evaluation experts, and our own analysis of the aspects of program evaluation most pertinent to assessments of quality transparency programs. The next section of the report is an in-depth discussion of how the standard program evaluation framework would be applied to quality transparency programs. We provide a detailed description of the steps involved in evaluating a “generic” quality transparency program, including the formulation of a logic model, evaluation questions and evaluation design. To provide more concrete examples, this section is followed by two quality transparency evaluation case studies. Although these case studies are by no means intended to be comprehensive evaluations, they highlight key ideas and methods that would be used by an evaluator of such programs. In the final section, we summarize our report with 10 key takeaway points that underline important concepts related to both the design and the evaluation of quality transparency programs.

QUALITY TRANSPARENCY LITERATURE REVIEW

Policymakers and health care researchers have investigated quality transparency for a number of years, and, consequently, there are many studies assessing specific transparency initiatives and the quality of their data.[3] However, the body of literature that provides a conceptual framework for an in-depth analysis of quality transparency issues is more limited. One seminal paper that does discuss the theory of quality transparency objectively is “The Public Release of Performance Data: What Do We Expect to Gain? A Review of the Evidence,” by Martin Marshall et al. In this article, Marshall discusses how policymakers have advocated quality transparency as a mechanism to achieve a wide variety of goals: regulating providers of care, ensuring accountability, informing, promoting quality improvement, and encouraging cost control.[4] However, most researchers agree that, based on what quality transparency initiatives realistically can achieve, their objectives and target audiences ought to be defined more narrowly. In another article, Marshall offers two general objectives for quality transparency initiatives: (a) to increase the accountability of health care organizations, professionals and managers, and (b) to maintain standards or stimulate improvements in the quality of care provided.[5]

Like the objectives, the target audience for quality transparency initiatives also can be misrepresented. Marshall explains how quality transparency programs typically name a variety of target audiences, including consumers, purchasers, physicians and/or provider organizations. In reality, however, not all of these groups necessarily use the program or change their behavior as a result. Although many quality transparency programs are aimed primarily at consumers, the literature is inconclusive about whether consumers actually use the Web sites or reports, and in particular, whether they alter their use of health care services as a result. Several studies have shown that consumers exposed to quality transparency initiatives continue to use hospitals with high mortality rates and that consumer decisions regarding hospitals are more likely to be influenced by anecdotal press reports than risk-adjusted mortality data.[6] Marshall attributes this phenomenon to a number of factors, including consumer difficulty in understanding the information, lack of interest in the nature of the information available, lack of trust in the data, problems with timely access to the data and lack of choice. Nevertheless, one study of a specific quality transparency program reveals that consumers exposed to a public report of provider quality were much more likely than other consumers to have accurate perceptions of the relative quality of local hospitals, and these perceptions persisted for at least two years after the release of the report.[7]

In addition to consumers, quality transparency program designers often focus on purchasers of health care (e.g., employers and coalitions). However, evidence from the literature suggests that quality transparency has only a small, though possibly increasing, effect on purchasing behavior.[8] Similarly, although health plans are often involved in quality transparency programs, looking to steer their members to high-quality providers, studies have shown that managed care patients were in fact less likely to have surgery at lower-mortality hospitals.[9]

Individual physicians may also be a target audience for quality transparency initiatives, as they may use the information to either alter their own behaviors or refer their patients to high-performing specialists.[10] Yet, for the most part, individual physicians tend to view quality transparency with skepticism and consider it to be of minimal usefulness, particularly because of statistical concerns associated with profiling individual providers whose patients account for a much smaller sample size than for an entire institution.[11] In contrast, hospitals and provider organizations are widely considered to be the most appropriate and receptive target audience for quality transparency programs. Hospitals can compare their own quality metrics with those of competitors and poorly performing hospitals may be motivated to improve their own quality standards, through a phenomenon known as the “sunshine” effect. Furthermore, since hospitals are sensitive to their public image and potential legal risks, and often have the authority to act on suboptimal levels of performance and promote good standards of practice, they will likely respond to performance data as a “competitive opportunity or risk management imperative.”[12]

Previous research has addressed the importance of selecting quality measures that appropriately capture provider quality and meet the needs of the target audience(s). In general, quality transparency programs use one set or a mix of measures including the following: [13], [14]

- Patient experience—based on the patient’s opinion

- Structural—whether the provider has invested in infrastructure like electronic medical records that could improve the quality of care

- Process—whether the provider offered key therapies and interventions to patients who could benefit from them, based on the recommended guidelines for care

- Outcome—refers to the impact of the medical care provided

Each of these measures captures different aspects of the quality of care. Although outcomes measures are typically considered the most direct method for measuring health care quality, the difficulties associated with measuring outcomes, discussed below, have led experts to generally agree that the most accurate and comprehensive approach for measuring quality is to use a mix of the above measures.[15] However, when using outcomes measures (and sometimes process measures), it is important to carefully risk-adjust the data, as differences in outcomes can be caused by variations in the patients’ conditions. Unfortunately, the current science of risk adjustment is not sufficiently advanced, and researchers have been able to identify the clinical characteristics that influence mortality rates for only a limited number of conditions. The extent to which risk adjustment should include demographic and socioeconomic factors is also a matter of ongoing debate. Some experts argue that variables should be controlled for only if they account for a known biological difference (for example, women have smaller vessels, which makes it technically more difficult to perform bypass surgery), while adjusting for race or ethnicity could unfairly mask those hospitals that do not provide culturally competent care.[16] As a result, it is a challenge for quality transparency program designers to identify and use viable methods for risk-adjusting data, while acknowledging that even state-of-the-art methods still have their limitations, and that they will not be universally accepted (especially by providers).
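To make the idea of risk adjustment concrete, the following minimal Python sketch applies indirect standardization, a common textbook approach that is not necessarily the method used by any program discussed in this report. It assumes each patient already has a predicted probability of death supplied by some separate risk model. The hospital names, predicted risks and outcomes are all invented for illustration.

```python
from collections import defaultdict

# Hypothetical patient records: (hospital, predicted death risk, died?).
# Hospital A treats low-risk patients; Hospital B treats high-risk patients.
PATIENTS = (
    [("Hospital A", 0.05, i == 0) for i in range(10)]    # 1 death in 10
    + [("Hospital B", 0.20, i < 2) for i in range(10)]   # 2 deaths in 10
)


def risk_adjusted_rates(patients):
    """Return {hospital: (raw rate, O/E ratio, risk-adjusted rate)}."""
    observed = defaultdict(int)    # deaths actually recorded
    expected = defaultdict(float)  # deaths predicted from patient risk
    cases = defaultdict(int)
    for hospital, risk, died in patients:
        observed[hospital] += int(died)
        expected[hospital] += risk
        cases[hospital] += 1

    # Overall mortality rate across all hospitals in the sample.
    overall_rate = sum(observed.values()) / sum(cases.values())

    results = {}
    for hospital in cases:
        oe_ratio = observed[hospital] / expected[hospital]
        results[hospital] = (
            observed[hospital] / cases[hospital],  # raw, unadjusted rate
            oe_ratio,                              # observed / expected
            oe_ratio * overall_rate,               # indirectly standardized
        )
    return results


RESULTS = risk_adjusted_rates(PATIENTS)
for name, (raw, oe, adjusted) in sorted(RESULTS.items()):
    print(f"{name}: raw={raw:.2f}  O/E={oe:.2f}  adjusted={adjusted:.2f}")
```

In this invented sample, Hospital B has the higher raw mortality rate, but Hospital A has twice as many deaths as its patient mix would predict, so the ranking reverses after adjustment. The result is only as good as the predicted risks fed into it, which is the limitation the paragraph above describes.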

In addition to selecting appropriate quality measures, program designers must also choose the most appropriate and relevant medical conditions for which to report quality metrics. The California HealthCare Foundation’s Quality Initiative details five criteria for selecting these conditions, including the following:

- Clinical significance—prevalence of condition; impact on quality and length of life

- Impact of quality of care on measured performance—conditions whose outcomes are not heavily influenced by factors other than quality (e.g., many co-morbidities)

- Magnitude of variation in quality—those conditions with greater variation in quality have the potential to have the most impact if accurate measures can be reported

- Practicality of measuring quality—important for reducing the reporting burden

- Contribution to the scope of provider performance assessment—whether the information will be relevant to a large number of consumers

These criteria are important to consider when developing a program to assess condition-specific quality; however, this should not be confused with a transparency program aimed at measuring overall hospital or medical group quality. In the latter case, program designers should select measurements that will provide both a comprehensive and accurate depiction of the organization’s/group’s overall quality levels.

In designing a quality transparency program, it is also important to select an appropriate source of data. Although the relevance of different data sources varies depending upon the type of quality measure, it is generally accepted that, especially for outcomes measures, clinical data, collected by providers using patient charts, are superior to administrative data, derived primarily from insurance claims.[17] Administrative data are easier and much less costly to collect and review but have several shortcomings, including the following: (1) cases can be missed or misclassified, especially for non-reimbursable diagnoses; (2) pre-operative co-morbidities are often not reported separately from post-operative complications, impacting risk adjustment; and (3) administrative data are most often created for billing purposes rather than for monitoring of clinical care. While some quality transparency programs have developed methods to adjust administrative data, after auditing and comparing them against patient chart data, in an effort to more accurately capture provider performance, these solutions have major limitations.[18] Ideally, quality transparency programs would use clinical data that are both audited and validated to ensure the accuracy of the data and prevent providers from “gaming” the system, but such data collection and reporting approaches are very costly.[19]

There is a risk that quality transparency programs may have unintended adverse consequences, and the less well designed a program, the higher the risk. For example, inadequate risk adjustment of outcomes measures can lead to serious unintended effects: some physicians may avoid sicker patients in an attempt to prevent erosion in their quality rankings,[20] and other physicians (those who continue treating sicker patients) could be subjected to unfairly low quality rankings. A quality program that overemphasizes process measures may cause some providers to ignore their own clinical judgment, or their patients’ unique situations or preferences, and choose health care interventions that will help them achieve “target” quality ratings.[21] And while structural measures are simple to measure, it is often difficult to define their impact on health outcomes. An emphasis on structural measures may cause providers to devote resources to systems that may not actually improve quality of care.[22]

Two additional unintended effects could arise through the poor design of quality transparency programs. The first is an inappropriate focus on metrics that can be easily measured and have less of a reporting burden rather than metrics that are more difficult to measure but are substantively more important.[23] This may have the inadvertent effect of “lowering the bar” for quality of care, because providers will deliver those services on which they are measured. And second, in the absence of a national model for quality transparency programs, numerous entities, including state governments, other public organizations, private companies and health plans, have developed a wide variety of measures and programs for reporting quality, some of which are proprietary in nature (and thus may not provide true transparency to target audiences). As a result, a recent study by Michael J. Leonardi et al., “Publicly Available Hospital Comparison Web Sites,” found that rankings of providers can be quite inconsistent and contradictory, which could discredit quality reports and confuse consumers and purchasers.[24] In response, providers have already expressed considerable frustration and mistrust of quality rankings.[25]

In light of these issues, evaluators must carefully design evaluation questions and methods that take into account both the intended and unintended effects of quality transparency programs. Few studies have systematically developed such an evaluation framework; however, the article by Leonardi does outline several basic criteria, listed below, for assessing quality transparency programs, which could be used to develop a detailed framework.

- Web site accessibility—cost, requirement to sign up and visibility

- Data transparency—data source, statistical method and risk-adjustment

- Appropriateness—variety of types of quality measures (e.g., structural, process and outcomes), and procedure-specific vs. general measures

- Timeliness—frequency of data updates

- Consistency—variation from other transparency Web sites

Furthermore, Bridges to Excellence®, which operates physician pay-for-performance programs, recently completed an evaluation of its programs and developed several evaluation questions and methods for measuring their impact.[26] Using a variety of survey techniques, evaluators measured changes in physician/patient engagement, whether the programs were implemented as expected, and whether the programs resulted in healthier patients. Although the Bridges to Excellence programs are quite different from typical quality transparency Web sites, the evaluation still provides a useful model for developing a framework for evaluating quality transparency programs.

These background papers, along with other analyses not specifically referenced here but noted in the Sources section, help to provide a framework for evaluating the success or impact of quality transparency programs. Specifically in the context of a program evaluation, the issues raised in these analyses help to shape the evaluation questions that an evaluator needs to ask about any quality transparency initiative under assessment.

PROGRAM EVALUATION FRAMEWORK

1. Types of Program Evaluation

Historically, program evaluations were used to determine the ultimate success or failure of a program. Over the years, however, program evaluation has evolved and become an integral part of the planning and implementation stages of program development. Program evaluation can be much more than a simple analysis of outcomes; it also can be a tool for program improvement. As a result, several kinds of program evaluation have emerged, each tailored to a specific stage of program development.

Although terminology varies slightly throughout the literature, there are three primary types of program evaluation that we have found most useful, including the following:

- Formative evaluation: This evaluation begins during the development of the program and is typically conducted by the program managers. The purpose of a formative evaluation is to develop a logic model and theory of change. A theory of change is a description of the environment in which the program will operate, the individuals involved in the program, the activities that will take place and the outcomes the program hopes to achieve.

- Process evaluation: Unlike typical goals-based evaluations, process studies examine how something happens and whether it has been implemented as planned. Consequently, process evaluations often try to take into account unexpected variables or outcomes.

- Summative evaluation: Also referred to as an outcomes or impact evaluation, this method is used to assess whether a mature project has achieved its goals. Unlike formative evaluations, summative evaluations are conducted after the implementation of the program by objective observers. The analysis may focus on both long-term and short-term outcomes, as well as the reasons for their success or failure.

For a summative evaluation, it is especially important to choose an unbiased evaluator who will be able to offer an impartial analysis of the impact of the program, as well as of the logic model and theory of change. Although a program designer might initially have a better understanding of the program, an outside observer is more likely to question assumptions regarding causality, leading to a more thorough evaluation.

In the rest of this report, we will focus on summative evaluations as they are most applicable for studying quality transparency initiatives that have already been implemented; however, we will take a broad definition of summative evaluations, by including steps that would normally be conducted separately in formative and process evaluations. This approach can be particularly useful for studying poorly planned programs that have already been implemented. Overall, a generic summative evaluation includes activities in the following order:

- Define primary terms and identify the fundamental purpose of the program and evaluation

- Develop logic model for the program, if it was not already created during a previous formative evaluation

- Using the logic model, formulate evaluation questions that will meet the fundamental purpose of the evaluation

- Develop observable measures that will provide indicators for the evaluation questions drawn from the logic model

- Develop and implement evaluation design and data collection methods

- Revise logic model and/or program goals to reflect a more appropriate theory of change and program objectives

The following section will discuss each of these activities in greater depth, providing examples drawn from quality transparency programs.

2. Steps in a Summative Program Evaluation

2a. Develop definition of primary terms and identify evaluation purpose, program objectives and target audience

It is important to begin by defining the primary terms discussed in the program, such as “quality transparency,” to ensure that all stakeholders agree on what is being evaluated. After working definitions are developed, evaluators must then decide what the fundamental purpose of the evaluation is. Often, an evaluation may be conducted to determine whether a program is having a significant impact that warrants further funding. At its most basic level, however, a broad summative evaluation tries to answer two primary questions: first, whether the program has met its stated goals, and second, whether the goals are optimal. Evaluating optimality would include an assessment of whether program goals are feasible, and whether the program represents a constructive use of resources. It is also the role of the evaluator to question the stated objectives of the program and investigate whether there are any underlying issues driving the program that may or may not have a positive impact on the program outcome. Tied to the analysis of objectives is a review of the program’s target audience. Before beginning the evaluation, the evaluator must also consider whether the target audience is appropriate given the environmental constraints.

2b. Develop logic model

The second step in all evaluations is to develop a theory of change, which is graphically depicted in a logic model: a common tool used to lay out the program’s elements and describe the causal linkages that are assumed to exist for the program to achieve its goals. It is important to begin an evaluation with the development of a logic model, as it is later used to inform the choice of specific evaluation questions and measures.

Planners and managers of well-designed programs would construct a logic model during the initial design process or formative evaluation. Outside evaluators can then use that logic model for the summative evaluation. If a logic model does not already exist, evaluators must create the logic model, using their own independent knowledge, and also by consulting with the program manager and stakeholders when possible.

Although logic models may vary in design, they typically include six major elements, connected by arrows and illustrated below (Figure 1):

Figure 1: Generic Logic Model

[Figure: Inputs lead to Activities, Activities lead to Outputs, and Outputs lead to Outcomes, which are divided into short-term, intermediate and long-term. An Environmental Factors box has arrows pointing to Inputs, Activities, Outputs and Outcomes. A Barriers box has an arrow pointing to Activities.]

The elements of the program are listed within each of the horizontal categories and the external factors acting on the program are listed above and below. Inputs include the resources that are used for the project, such as quality data or project funding. The activities are the actions taken by project managers to achieve the goals of the project; examples include data collection and Web site development. Outputs are the immediate results of the initiative, such as the number of consumers or providers who visited a quality transparency Web site. Outputs are often confused with outcomes; however, outputs are tied directly to a program activity and provide evidence that an activity has occurred, though not necessarily that a program has achieved its purpose. Outcomes, on the other hand, are the desired accomplishments or changes that show movement toward the program’s ultimate objectives. Outcomes typically are divided into short-term, intermediate and long-term subsets. In the case of quality transparency initiatives, a short-term outcome might be providers’ heightened awareness of their quality ratings; an intermediate outcome might be providers’ development of new quality control initiatives; and a long-term outcome, often the ultimate goal of the initiative, might be improved patient care and consequently better clinical outcomes.

In addition to these basic elements, logic models also include information on the program’s environment and barriers. Environmental factors describe the context in which the program operates. A well-designed program will take into account the environment, though it will not attempt to solve these larger external issues (which are often beyond the reach of the program). In a quality transparency logic model, one environmental factor would be that financial incentives to physicians may disproportionately reward the provision of some services relative to others, in ways that do not reflect optimal quality of care (e.g., physicians being paid more for procedures rather than, for example, discussions regarding medication management, which might benefit the patient more). Program planners and evaluators must be aware of the financial (and other) incentives, which can negatively affect the impact of the quality transparency initiative. A failure to identify such factors can lead to a poorly designed program as well as a flawed evaluation.

Barriers are a subset of environmental factors; they represent those external issues that the program attempts to address. Consequently, each barrier is matched with a program activity that is designed to reduce or eliminate it. Listing the barriers in the logic model allows program managers to identify the necessary steps that must be taken to achieve successful results. In a quality transparency model, a barrier might be that the entities collecting the data use different data abstraction, coding and reporting practices, preventing the measures from being comparable across providers. A well-designed quality transparency program would develop standardized collection practices, and audit and validate the data, to ensure that they are accurate and comparable across providers.
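The six elements described above can be captured in a small data structure, which also makes it possible to check that every barrier is matched with an activity. The following Python sketch is only an illustration: the class name, field names and helper method are hypothetical, the entries are drawn from the examples in this section, and the second barrier is invented to show what an unmatched barrier looks like.

```python
from dataclasses import dataclass, field


@dataclass
class LogicModel:
    """Hypothetical container for the six elements of a logic model."""
    inputs: list[str]
    activities: list[str]
    outputs: list[str]
    outcomes: dict[str, list[str]]  # keyed by short-term/intermediate/long-term
    environmental_factors: list[str] = field(default_factory=list)
    # Each barrier maps to the activity meant to reduce or eliminate it.
    barriers: dict[str, str] = field(default_factory=dict)

    def unaddressed_barriers(self) -> list[str]:
        """Return barriers whose matched activity is not in the model."""
        return [barrier for barrier, activity in self.barriers.items()
                if activity not in self.activities]


MODEL = LogicModel(
    inputs=["quality data", "project funding"],
    activities=[
        "data collection",
        "Web site development",
        "standardized data collection with auditing and validation",
    ],
    outputs=["number of consumers or providers visiting the Web site"],
    outcomes={
        "short-term": ["providers' heightened awareness of quality ratings"],
        "intermediate": ["new provider quality control initiatives"],
        "long-term": ["improved patient care and clinical outcomes"],
    },
    environmental_factors=[
        "payment incentives that reward procedures over other services",
    ],
    barriers={
        "inconsistent data abstraction, coding and reporting practices":
            "standardized data collection with auditing and validation",
        # Invented barrier with no matching activity, for illustration only.
        "consumers unaware of the Web site (hypothetical)":
            "consumer outreach campaign",
    },
)

print(MODEL.unaddressed_barriers())
```

A check of this kind flags barriers that the program design has named but not acted on, which an evaluator may want to raise when reviewing the theory of change.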

2c. Formulate evaluation questions

The next step in a broad summative evaluation is the creation of evaluation questions, which guide the focus of the evaluation and delineate the different dimensions in which the program will be judged. The evaluation questions are developed by pairing the logic model with a framework known as Bennett’s hierarchy. Illustrated below (Table 1), next to the corresponding categories of a logic model, Bennett’s hierarchy is a list of the types of evidence that may be examined by an evaluator to determine the overall impact of the program.[27] Information from the lower levels helps to explain the results from the upper levels, which are often more long-term. Additionally, as the evaluator moves up the hierarchy, the evidence often becomes more difficult and expensive to obtain. For example, evidence of actions—behavioral changes in the target audience—may require consumer surveys and focus groups or interviews, while evidence of program resource use may simply require an expenditure review. It is, therefore, important to start at the bottom of the hierarchy and work up the ladder of questions, verifying that the program has met a minimum level of achievement, prior to expending significant resources to answer the upper-level evaluation questions. Evidence from further up the hierarchy, however, generally provides a stronger indication of whether the program has achieved its larger goals.
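The bottom-up logic described above can be sketched in a few lines of Python. The level names below follow a commonly cited seven-level version of Bennett’s hierarchy and are an assumption here; they may not match the labels used in Table 1. The function name and the evidence format are likewise hypothetical.

```python
# Levels ordered from the bottom of the hierarchy (evidence that is usually
# cheapest to obtain) to the top (usually the most costly and long-term).
# These names are an assumed, commonly cited version of the hierarchy.
BENNETT_LEVELS = [
    "resources",
    "activities",
    "participation",
    "reactions",
    "learning",
    "actions",
    "impact",
]


def highest_level_reached(evidence):
    """Walk up the hierarchy and stop at the first level not yet verified.

    `evidence` maps a level name to True when the program has met a minimum
    level of achievement there. Missing levels count as not yet verified.
    Returns (verified levels, next level to examine or None).
    """
    verified = []
    for level in BENNETT_LEVELS:
        if not evidence.get(level, False):
            return verified, level
        verified.append(level)
    return verified, None


# Example: resource use and activities check out, participation does not,
# so costly surveys on learning or actions would be premature.
verified, next_level = highest_level_reached(
    {"resources": True, "activities": True, "participation": False}
)
print("Verified:", verified)
print("Examine next:", next_level)
```

Stopping at the first unverified level reflects the advice to confirm a minimum level of achievement before spending significant resources on upper-level questions.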

2d. Develop observable measures

In addition to defining the evaluation questions, researchers must translate the lines of inquiry into observable measures. This step operationalizes the qualitative evaluation questions into measurable indicators. Although the appropriate indicator might be obvious for some evaluation questions, such as those regarding funding, they may be more difficult to select for questions regarding abstract concepts like “leadership” or “knowledge.” For more complex questions, it may be necessary to select several indicators to capture the core issues adequately.

Once the observable measure is chosen for the evaluation question, the second step must be to define what level of change will be considered significant. It is important to define what constitutes significant change prior to beginning data collection as it prevents bias, ensuring that the results of the evaluation are not skewed by the evaluator’s beliefs.
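One way to hold an evaluation to a threshold set in advance is to record the measure, its baseline and the minimum meaningful change together before any data are collected. The Python sketch below is a hypothetical illustration: the class, its fields and all of the numbers are invented, and “significant” here means only that the pre-specified threshold was met, not that a statistical test was passed.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: values cannot be edited once recorded
class Indicator:
    """Hypothetical record of one observable measure and its threshold."""
    question: str       # evaluation question the indicator answers
    measure: str        # what is actually observed
    baseline: float     # value before the program, in percentage points
    min_change: float   # smallest increase that will count as significant

    def is_significant(self, observed: float) -> bool:
        """Compare an observed value with the pre-specified threshold."""
        return (observed - self.baseline) >= self.min_change


# Defined before data collection begins; all values are invented.
AWARENESS = Indicator(
    question="Are providers more aware of their quality ratings?",
    measure="percent of surveyed providers who can state their rating",
    baseline=10.0,
    min_change=5.0,
)

print(AWARENESS.is_significant(18.0))  # increase of 8 points
print(AWARENESS.is_significant(12.0))  # increase of 2 points
```

Because the record is frozen, the threshold cannot be quietly revised after the results are in, which is the source of bias the paragraph above warns against.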

2e. Develop and implement evaluation design and analyze findings

The next step is for evaluators to develop an evaluation design, determining the most precise and feasible methods for gathering data. Although one particular evaluation method might yield the most accurate information, it may be impractical given funding, staffing and time constraints. Therefore, feasibility must be a primary consideration when selecting one or multiple methodological approaches and data collection instruments for each evaluation question.

These evaluation methods fall into two general categories: quantitative and qualitative. An example of a quantitative tool would be a survey of the target audience to understand reactions to the program. Surveys are useful for gauging the response of a large group, but the information gathered is limited to the questions asked on the survey.

In contrast, qualitative methods, such as a focus group, can be helpful in collecting more in-depth information on the thought processes and behavioral changes of program participants. Since a focus group requires a greater time commitment from respondents, the sample size is much smaller than for surveys, though it yields more in-depth information, and is particularly helpful in understanding unexpected program results.

An additional qualitative method that may be used is a direct assessment by the evaluator of the program Web site or other program products. This should include an assessment of the validity and accuracy of the information disseminated by the program. In addition, the evaluator can perform the assessment from the perspective of the consumer—testing, for example, whether the information is presented in clear, easy-to-understand language and whether the Web site is well designed and easy to navigate. Although the evaluator’s assessment from the consumer perspective is not necessarily as credible as input from consumer focus groups, it is a cost-effective method for analyzing program features that affect end-users of the program.

Each of the methods discussed above has different strengths and limitations. Consequently, a mixed-method approach often yields the best results. By using several methods to answer each evaluation question, evaluators can develop a more complete understanding of the effects of the program. In addition, a mixed-method approach can reduce the chance of external factors either inflating or diluting the impact of the program. The influence of such factors can be particularly problematic when measuring long-term outcomes, which are more likely to be affected by the larger environment. Using a rigorous evaluation design will help ensure that evaluation data are collected and analyzed appropriately, and that the resulting evaluation report is based on sound conclusions.

2f. Revise logic model

In some situations, program designers may not have had the resources or expertise to develop a well-constructed program. This may result in a flawed or non-existent logic model and unrealistic program goals, which might become apparent during an evaluation. In this case, an evaluator, using knowledge of the program development process, and by consulting with experts and stakeholders, might offer recommendations on how to improve the program and revise the logic model. This step is called an explanatory evaluation and is sometimes included in a broad summative evaluation. An explanatory evaluation goes beyond simply measuring if the program met its goals and, instead, attempts to answer why the program failed or succeeded. Evaluators analyze the logic model, questioning each of the linkages between the components of the model, and make recommendations for revised activities, inputs and program goals where appropriate.

PROGRAM EVALUATION FRAMEWORK AS APPLIED TO QUALITY TRANSPARENCY

1. Framework of a Summative Evaluation of a Generic Quality Transparency Program

The following section applies the theory of program evaluation to quality transparency programs. To simplify this task, a generic quality transparency program was developed based on reviews of real quality transparency Web sites. The framework for a summative evaluation is then applied to the generic program to highlight the specific issues related to evaluating quality transparency initiatives.

It is important to remember that, unlike the programs discussed in the previous chapter, quality transparency initiatives are subject to the limitations of their environments. As a result, program designers might have to limit their objectives or activities because of time, funding, staffing or other constraints. Therefore, in evaluating quality transparency programs it is important to consider both the requirements for a well-functioning program and the resources available to the program.

The following evaluation questions and corresponding measurement processes can be visualized as a ladder. The uppermost rungs, or evaluation questions regarding long-term outcomes, only need to be reached during evaluations of the most well-designed and well-developed programs (those that show evidence of success on program outputs and short-term outcomes). For some quality transparency programs, it may only be necessary to conduct a mini-formative evaluation, analyzing the evaluation questions on the lowest rung of the ladder—those dealing with inputs or resources. Nevertheless, the following section will discuss the framework for a full summative evaluation, though we have made an effort to identify points at which evaluators might consider halting the evaluation for less-developed programs.

1a. Develop definition of primary terms and identify evaluation purpose, program objectives and target audience

Many organizations, including health plans, federal and state governments, employer groups and not-for-profit entities, are increasingly developing quality transparency programs. These initiatives are designed around a broad range of definitions of “quality health care” and “quality transparency.” For the sake of this paper, however, we use the definition for quality health care developed by the Agency for Healthcare Research and Quality (AHRQ), which states that quality health care is: “Doing the right thing, at the right time, in the right way, to achieve the best possible results.”[28] To focus our evaluation framework, we will use a definition of “quality transparency” that is drawn from several sources in the literature and aims to encourage the development of useful and influential quality transparency programs. Thus our definition of quality transparency is the provision of usable quality information to a specific audience that allows a comparison between a health care provider’s quality of care and a normative or community standard.[29], [30] Quality data are considered usable if the information is meaningful, accurate, comprehensive and reliable.[31]

Similar to the definition of “quality transparency,” the program objectives may vary depending upon the sponsor, resources and environmental factors. Furthermore, the sponsor may have underlying intentions, outside of the program’s publicly stated purpose, that can influence the program design. For example, in the case of insurers, although a program may be marketed as a quality comparison tool, the actual measures might include both quality and cost components and be more heavily weighted toward the latter, with the objective of steering enrollees toward lower-cost providers. Therefore, evaluators must objectively question all aspects of the program and consider their effect on the program’s overall impact.

In general, however, quality transparency programs have two broad goals, as defined by Marshall in his seminal article: (a) to increase the accountability of health care organizations, professionals and managers, and (b) to maintain standards or stimulate improvements in the quality of care provided.[32] It is not expected that a quality transparency program will meet these goals immediately, but rather that they will eventually be achieved through the theory of change (as illustrated in the logic model discussed below). And while, ideally, a quality transparency program will seek to achieve both of these objectives, in some situations, environmental factors or lack of funds may prevent the sponsor from pursuing them in their entirety. For example, a quality transparency program might focus solely on reporting data for hospitals, rather than including the full spectrum of providers. Additionally, programs reporting on hospital quality might focus only on the most prevalent conditions or procedures. An evaluator, however, may still judge the program positively if it meets its stated goals and does not overstate its intentions. As explained in the previous chapter, a program evaluation is conducted first to determine if the program met its goals, and second to consider whether the goals were optimal and comprehensive.

Implicit in the selection of objectives is the identification of appropriate target audiences. Quality transparency programs may have a variety of target audiences including consumers, providers (both physicians and hospitals), health plans, employers and policymakers. This discussion of a generic quality transparency program will focus on two primary target audiences: consumers and providers. (Secondary target audiences are excluded from this discussion to keep the framework from becoming too detailed and unwieldy, and also because many quality transparency programs do not have secondary audiences such as health plans.) However, it is still important to clearly define whether the program is expected to influence all consumers and providers or a more limited subset. Many quality transparency programs are state-based and, therefore, only seek to influence the target audiences within that state. The consumer audience is further limited to the subset of consumers who are likely to need the services being rated (e.g., inpatient hospital services). Consequently, the evaluator must consider whether program designers have appropriately limited both their objectives and target audience.

1b. Develop logic model

As discussed in the previous chapter, the second step in an evaluation is to develop a logic model, which illustrates the components of the program and their causal linkages. Although the program designers should ideally develop the logic model, this does not always occur, even if the theory of change and objectives are well thought out. As a result, it becomes the role of the evaluator, through consultation with the program managers, to create the logic model so that its components can be analyzed. As an objective observer, however, the evaluator must be careful to delineate the true components, as well as underlying objectives, of the program in the logic model. In addition, the logic model should include all of the environmental factors and barriers that might influence the program, given its objectives.

The components of the logic model and the number of barriers vary depending on the scope of the program. For example, quality transparency programs frequently focus on reporting data for either physicians or hospitals. Depending on this focus, the quality measures will vary, as well as the data collection methods and outreach approaches.

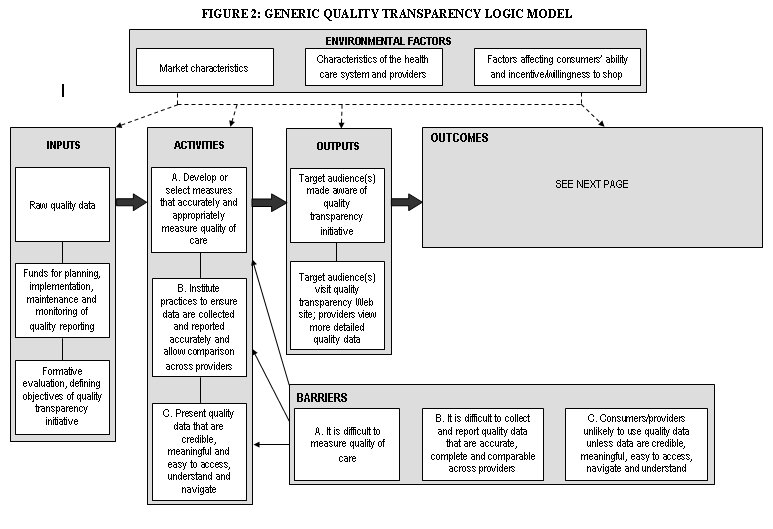

To act as a guide for the creation of quality transparency logic models, we have attempted to create a generic logic model (see Figures 2 and 3). This logic model is not tailored specifically for reporting hospital or physician quality data, or for an insurer vs. a government program. Instead, it attempts to provide a comprehensive list of the activities necessary to achieve the objectives outlined above. In addition, the generic logic model includes the barriers and environmental factors that may affect quality transparency programs and, consequently, should be considered by evaluators when creating logic models for real programs. It may also be used as a comparison tool to identify missing components of real programs’ logic models. The generic logic model is presented graphically and its components are described in detail on the following pages.

Box labeled "environmental factors" containing boxes: "market characteristics", "characteristics of the health care system and providers", and "factors affecting consumers’ ability and incentive/willingness to shop". From this box, arrows are drawn to boxes named "inputs", "activities", "outputs", and "outcomes". A "barriers" box sits at the bottom of the image.

Inputs: contains these boxes: "Raw quality data", line drawn to "Funds for planning, implementation, maintenance and monitoring of quality reporting", line drawn to "Formative evaluation, defining objectives of quality transparency initiative". An arrow also points from the "inputs" box to the "activities" box.

Activities: contains these boxes: "Develop or select measures that accurately and appropriately measure quality of care", line drawn to "Institute practices to ensure data are collected and reported accurately and allow comparison across providers", line drawn to "Present quality data that are credible, meaningful and easy to access, understand and navigate". An arrow also points from the "activities" box to the "outputs" box.

Barriers: contains these boxes: "It is difficult to measure quality of care", "It is difficult to collect and report quality data that are accurate, complete and comparable across providers", and "Consumers/providers unlikely to use quality data unless data are credible, meaningful, easy to access, navigate and understand". Three arrows from the "barriers" box point to the "Develop or select measures that accurately and appropriately measure quality of care", "Institute practices to ensure data are collected and reported accurately and allow comparison across providers", and "Present quality data that are credible, meaningful and easy to access, understand and navigate" boxes.

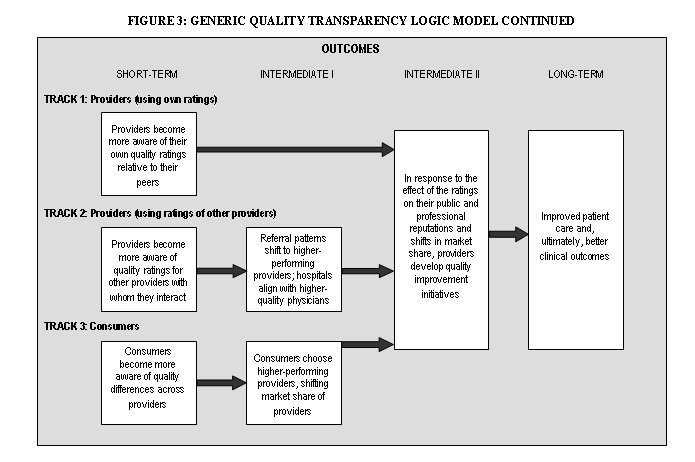

Large box with "Outcomes" at the top with " SHORT-TERM", "INTERMEDIATE I", "INTERMEDIATE II", and "LONG-TERM" as column headings.

Short-term: "TRACK 1: Providers (using own ratings)" above a box containing "Providers become more aware of their own quality ratings relative to their peers", with an arrow pointing toward the "Intermediate II" column; "TRACK 2: Providers (using ratings of other providers)" above a box containing "Providers become more aware of quality ratings for other providers with whom they interact", with an arrow pointing toward a box containing "Referral patterns shift to higher-performing providers; hospitals align with higher-quality physicians" in the "Intermediate I" column; "TRACK 3: Consumers" above a box containing "Consumers become more aware of quality differences across providers", with an arrow pointing toward a box containing "Consumers choose higher-performing providers, shifting market share of providers" in the "Intermediate I" column.

Intermediate I: box containing "Referral patterns shift to higher-performing providers; hospitals align with higher-quality physicians", with an arrow pointing toward a box containing "In response to the effect of the ratings on their public and professional reputations and shifts in market share, providers develop quality improvement initiatives" in the "Intermediate II" column; box containing "Consumers choose higher-performing providers, shifting market share of providers", with an arrow pointing toward the same "Intermediate II" box.

Intermediate II: box containing "In response to the effect of the ratings on their public and professional reputations and shifts in market share, providers develop quality improvement initiatives", with an arrow pointing toward a box containing "Improved patient care and, ultimately, better clinical outcomes" in the "Long-Term" column.

Long-Term: box containing "Improved patient care and, ultimately, better clinical outcomes".

Inputs

Inputs are the resources necessary for the successful implementation and completion of program activities. The inputs for a quality transparency program include the following:

- Formative evaluation, defining the objectives of the quality transparency initiative

- Funds for planning, implementation, maintenance and monitoring of quality transparency initiative

- Raw quality data (e.g., patient chart data, administrative data)

Without access to appropriate quality data, the creation of a useful quality transparency Web site would be impossible, and without adequate funding, the creation of such a Web site would be difficult at best. Although a formal formative evaluation is not strictly necessary, it is vital to the success of the program that the designers rigorously consider all significant external factors that might affect the program and question both the components of the program and the assumed causal linkages. During this process, the designer should create a logic model and match each barrier with a corresponding activity to ensure that all external factors that must be dealt with to yield the stated objectives are being addressed. This process ensures a logical theory of change and, ultimately, the success of the program.
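The barrier-to-activity matching described above amounts to a simple coverage check. The following Python sketch is illustrative only: the function name is hypothetical, the barrier and activity labels are abbreviated from the generic logic model, and the empty entry is an invented gap used to show what the check reports.

```python
from typing import Dict, List

def unaddressed_barriers(barrier_to_activities: Dict[str, List[str]]) -> List[str]:
    """Return every barrier that has no program activity matched to it."""
    return [
        barrier
        for barrier, activities in barrier_to_activities.items()
        if not activities
    ]

# Hypothetical draft logic model in which one barrier has been overlooked.
draft_logic_model = {
    "Difficult to measure quality of care": [
        "Develop or select measures that accurately measure quality of care",
    ],
    "Difficult to collect and report accurate, comparable data": [
        "Institute practices to ensure data are collected and reported accurately",
    ],
    "Audiences unlikely to use data unless credible and easy to use": [],
}

for barrier in unaddressed_barriers(draft_logic_model):
    print("No activity addresses:", barrier)
```

A designer or evaluator could run the same check against a real program's logic model to identify barriers that still lack a corresponding activity.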

Environmental Factors

The environmental factors that affect quality transparency programs can be grouped into three conceptual categories: market characteristics, characteristics of the health care system and providers, and factors affecting consumers’ ability and incentive/willingness to shop. Within each of these categories are a number of external factors—listed below—that should be taken into account by planners and evaluators.

- Market characteristics:

- Regional/cultural variations can impact care processes and compliance with best practices.

- Markets characterized by high provider concentration, or provider shortages, may not allow for meaningful shopping. Additionally, providers that lack competition may not be motivated to improve quality.

- Characteristics of the health care system and providers:

- The knowledge base regarding quality measurement and risk-adjustment methodologies is still evolving, and ratings based on existing methodologies may not adequately capture providers’ performance.

- Financial incentives sometimes are not aligned with quality measures.

- Provider organizations vary in resources and their ability to invest in infrastructure and quality improvement techniques.

- Competing and inconsistent quality measures across different quality transparency programs can place a heavy reporting burden on providers and confuse both providers and consumers.

- Providers who mistrust the quality reports and/or believe the quality measures or risk-adjustment methodologies are flawed, or who suspect they perform poorly, will not be motivated to participate unless there are financial incentives tied to quality improvement or participation is mandated.

- Physicians may be unwilling to use quality reports and change established referral and hospital admitting practices for reasons including: mistrust of reports, lack of time to consult reports and/or concern about disrupting established relationships.

- Factors affecting consumers’ ability and incentive/willingness to shop:

- For services characterized by medical urgency, consumers have no time or ability to comparison shop.

- Consumers may trust word-of-mouth recommendations from their family/friends more than quality ratings.

- Consumers are not likely to use the quality transparency program unless they (or someone close to them) have an imminent need for the types of providers and/or services rated by the program.

- Consumers’ inclination to shop for high-quality providers varies depending on their age, education, general attitudes toward health care and other personal characteristics.

- Some consumers may not be aware that there is variation in the quality of providers and may lack an understanding of what is considered quality care.[33]

- Consumers in a health maintenance organization (HMO) or managed care plan that strictly defines which providers they can visit will have less of an incentive to compare providers’ quality ratings, unless they can afford to go out of network.

- Consumers may be reluctant to change physicians and sever existing relationships even if the physician has been rated poorly.

- The benefits of identifying and then using a higher-quality provider might be outweighed by the potential costs (e.g., increased transportation costs, time, the administrative burden of changing physicians).

Barriers

Unlike the environmental factors listed above, which lie largely outside a program’s control, barriers are external factors that well-designed and well-implemented programs can and should address (at least partially). Accordingly, each barrier should be matched to one or more program activities designed to address that barrier. In our generic logic model, we have broken down the possible barriers into three categories, each containing more specific barriers. Each of these individual barriers, listed below, has a corresponding activity that attempts to “solve” the issue. For a quality transparency program to meet its objectives, the majority (if not all) of the barriers must be addressed.

A. It is difficult to measure quality of care

- Some quality indicators are easier to measure than others; programs may inappropriately focus on easier-to-measure indicators rather than more meaningful indicators of quality.

- Transparency initiatives that only report one type of quality measure may be limited in their usefulness and have unintended effects.

- Although outcomes measures are considered to be the most effective method for evaluating quality of care, initiatives that only report outcomes measures are limited by the current state of knowledge and lack of consensus regarding these measures.[34] Furthermore, when outcomes measures are used, those that are not adequately risk-adjusted will not appropriately capture provider performance.

- Initiatives that only report process measures could encourage providers to solely focus on and overuse certain patient care processes regardless of whether they are the most appropriate treatment for the patient and actually improve health outcomes.[35]

- Initiatives that only report structural or indirect indicators that are less closely tied with actual health outcomes could frustrate providers or inappropriately encourage them to develop new systems that might not actually improve patient care.

- Initiatives that only report measures of patients’ perceptions are limited because such measures often reflect communication processes and relationships with providers, which do not always correlate with quality of care and improved clinical outcomes, and may be skewed by patients’ conditions.

B. It is difficult to collect and report quality data that are accurate, complete and comparable across providers

- Providers/vendors/health plans may not collect and report data using the same rigorous methods, leading to incomplete or inaccurate data that cannot be appropriately compared across providers.

- Providers may try to “game” the system by reporting higher performance rates than actually occurred.

- Programs using claims data may not accurately capture provider performance.[36]

- A heavy reporting burden may discourage provider participation or lead to careless data collection and reporting techniques.

- A long data-reporting period may obscure provider changes in quality over time. However, a reporting period that is too short may not capture sufficient data to allow for statistically discernible comparisons.

- Misleading quality ratings or data gaps on the Web site can result from poorly designed sampling and statistical methodologies and inaccurate, incomplete or insufficient data.

C. Consumers/providers are unlikely to use quality data unless they are credible, meaningful and easy to access, understand and navigate

Consumers:

- Consumers often are not aware of the existence of quality transparency resources or the benefits of using them.

- Consumers are more likely to respond to a single news report about a poorly performing hospital than to complicated quality reports.

- Consumers are unlikely to use Web sites that are not accessible, easy to navigate and understand, or responsive to user questions.

- Consumers may be frustrated by reports whose results make it difficult to distinguish between high- and low-performing providers. In addition, a rating system that does not differentiate among most providers would inspire few of them (only those marked as below average) to change their practices.[37]

- Consumers could be overwhelmed by information and unable to decide among providers.

- Consumers may need corresponding price information to assess comparative value across providers.

- Some consumers lack access to the Internet or are less comfortable using the Internet to obtain information.

Providers:

- Community stakeholders, especially providers, may not accept or participate in a program if it is perceived as (i) being imposed on them and (ii) using inappropriate or invalid quality measures and/or methods.

- Providers will not use quality transparency programs that are not adequately documented and do not provide clinically relevant quality data.

Activities

The activities are those actions that must be taken by a program manager to create a quality transparency program that achieves its objectives. The activities below are divided into three general categories, which are displayed on the logic model, and correspond to the barriers that they attempt to address.

A. Develop or select measures that accurately and appropriately measure quality of care

- Choose measures on which to report quality of care that:

- Have clinical significance—are both prevalent and significant

- Are less influenced by factors outside of quality of care—such as co-morbidities

- Are supported by sufficient knowledge or evidence of steps that providers can take to improve clinical outcomes (an incurable disease would be inappropriate)

- Vary in quality—unlike more automated procedures that are already standardized

- Have a low reporting burden for measuring the quality of care

- Contribute to a wider understanding of the provider’s performance—are not all focused in one area of care

- Are adequately validated and accepted by providers as best practices

- Choose quality measures that accurately capture the quality of care by using a mix of measures, including outcomes, process, structural and patient experience.

- Use state-of-the-art methods for risk-adjusting outcomes measures.

- For process measures, develop methodology to ensure that only those patients who should receive the services are being counted.

- Adjust patient experience measures based on the case mix of the patient population (e.g., age, gender, socioeconomic status, co-morbidities).

B. Institute practices to ensure data are collected and reported accurately and allow comparisons across providers

- Develop a standardized reporting procedure to ensure that all providers/vendors/health plans are reporting data in a consistent manner for the same distinct procedures or indicators. Program managers may also provide training, software and other support to ease the reporting burden on providers.

- Audit and validate data by comparing them to other sources. (If using claims data, compare a sample to medical records to ensure accuracy.)

- For claims data, after comparison with medical records, develop an algorithm to adjust claims data to correct for misreporting (typically underreporting).

- Choose an appropriate reporting period that allows the target audience to view recent data, but also provides sufficient sample size for statistical purposes.

- Develop rigorous methodologies to ensure completeness, integrity and statistical validity of the data (e.g., accurate assignment of patients to providers; development of valid sampling techniques and statistical testing methods).
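As a rough illustration of the claims-adjustment activity listed above, the sketch below derives a correction factor from an audited sample and applies it to a claims-based rate. It is a deliberately simple ratio adjustment chosen for illustration, not a method prescribed by this framework. The function names and all figures are hypothetical, and a real program would need a more careful statistical approach.

```python
from typing import List, Tuple

def correction_factor(audit_sample: List[Tuple[bool, bool]]) -> float:
    """Ratio of chart-documented services to claims-documented services.

    Each tuple is (service appears in claims, service appears in medical record)
    for one audited patient. A factor above 1.0 suggests underreporting in claims.
    """
    claims_count = sum(1 for in_claims, _ in audit_sample if in_claims)
    chart_count = sum(1 for _, in_chart in audit_sample if in_chart)
    if claims_count == 0:
        raise ValueError("audit sample contains no claims-documented services")
    return chart_count / claims_count

def adjusted_rate(claims_rate: float, factor: float) -> float:
    """Scale a claims-based rate by the factor, capped at 100 percent."""
    return min(1.0, claims_rate * factor)

# Hypothetical audit of 10 patients: 6 services found in claims, 8 in charts.
audit = [(True, True)] * 6 + [(False, True)] * 2 + [(False, False)] * 2
factor = correction_factor(audit)

# Apply the factor to a hypothetical provider with a 60 percent claims-based rate.
print(round(adjusted_rate(0.60, factor), 2))
```

The cap at 1.0 keeps an adjusted rate from exceeding 100 percent when the correction factor is large.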

C. Present quality data so that they are credible, meaningful and easy to access, understand and navigate

Consumers:

- Provide outreach to make consumers aware of the quality transparency Web site, the importance of clinical quality, and the variation among providers in clinical quality.

- Preface the report with concrete, significant and/or life-or-death examples to encourage the use of the Web site by consumers.

- Design the Web site to be user-friendly: easy to understand and navigate, with tools to compare providers and generate provider-specific quality reports.

- Provide Web site instructions, definitions of each measure, and documentation in clear, accessible language for consumers.

- Provide support to Web site users who have questions or comments.

- Rate each provider relative to multiple benchmarks or cut-points, allowing users to distinguish between meaningful categories of providers.

- Provide decision aids to consumers to help them sort through the ratings and make appropriate decisions regarding their health care providers.

- Provide corresponding price data so that consumers can choose providers that are both high quality and efficient.[38]

- Report quality data through alternative information channels (e.g., print media) for consumers who would be less inclined to use the Internet.

- Provide outreach to other target audiences (health plans, employers, policymakers etc.) to encourage them to use the transparency program.
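The activity above of rating each provider relative to multiple benchmarks or cut-points can be sketched as follows. This is an illustrative sketch only: the cut-point values, category labels and provider scores are hypothetical, and a real program would choose them using its own statistical methodology.

```python
from bisect import bisect_right

# Hypothetical cut-points on a 0-100 composite quality score.
CUT_POINTS = [70.0, 85.0]
# One more label than cut-points: below the first, between them, at or above the last.
LABELS = ["Below benchmark", "Meets benchmark", "Exceeds benchmark"]

def rate_provider(score: float) -> str:
    """Place a score in a category; a score equal to a cut-point falls in the higher one."""
    return LABELS[bisect_right(CUT_POINTS, score)]

# Hypothetical providers and scores.
scores = {"Hospital A": 64.5, "Hospital B": 70.0, "Hospital C": 91.2}
for provider, score in scores.items():
    print(provider, "->", rate_provider(score))
```

Using more than one cut-point yields several meaningful categories, rather than a single dividing line that leaves most providers grouped together.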

Providers:

- Engage important stakeholder groups—in particular the provider community that is being evaluated—in the design of the program (especially in the development of quality measures and methods),[39] and seek continuing input.

- Provide outreach to providers to make them aware of the quality transparency program.

- Provide detailed quality data that providers can use to implement quality improvement efforts.

- Provide additional detailed documentation of the Web site to providers.

Outputs

The outputs of the quality transparency initiative are two-fold:

- Target audiences are made aware of the quality transparency initiative.

- Target audiences visit the quality transparency Web site; providers potentially also view more detailed quality data.

Outcomes

The following outcomes tracks are interrelated and converge over time. (See the logic model diagram for further information.)

Track 1: Providers (when using their own ratings)

- Short-term: Providers become more aware of their own quality ratings relative to their peers.

- Intermediate II: In response to the effect of the ratings on their public image, providers institute quality improvement initiatives.

- Long-term: Improved patient care and, ultimately, better clinical outcomes

Track 2: Providers (when using ratings of other providers)

- Short-term: Providers become more aware of quality ratings for other providers with whom they interact (e.g., physicians become more aware of ratings for specialists and hospitals to whom they refer or admit patients; hospitals become more aware of ratings for physicians whom they employ or accept on staff).

- Intermediate I: In response, patient referral patterns will shift to higher-performing physicians and hospitals; hospitals will develop relationships with higher-performing physicians.

- Intermediate II: In response to the shift in market share, providers will develop quality improvement initiatives.

- Long-term: Improved patient care and, ultimately, better clinical outcomes

Track 3: Consumers

- Short-term: Consumers become more aware of differences in quality across providers.

- Intermediate I: Consumers will choose higher-performing providers and, possibly through a viral effect, tell others (e.g., family, friends) about the quality ratings, which will lead more consumers to shift to higher-performing providers.

- Intermediate II: In response to the shift in market share, providers will develop quality improvement initiatives.

- Long-term: Improved patient care and, ultimately, better clinical outcomes

Unintended Outcomes[40]

- Even if data are risk-adjusted, providers may turn away sicker patients to protect their quality ratings or shift the sickest patients to higher-quality hospitals, which could overwhelm those institutions, unless payment incentives are created to encourage providers to care for sicker patients.[41]

- The use of process measures can encourage providers to focus solely on and potentially overuse certain patient care processes regardless of whether they are the most appropriate treatment for the patient and actually improve health outcomes.[42]

- One long-term outcome of a successful quality transparency program might be that hospitals specialize more and become “focused factories,” a development that could impair access. (Hospitals may decide to discontinue certain service lines because they cannot attract high-quality specialists, or they cannot attract enough patients needing a certain type of care, or a combination of both factors.)

- A potential positive effect might be that general improvements in clinical processes may provide collateral benefits to patients with conditions that are not part of quality measures. These improvements may not be directly measurable.

1c. Formulate evaluation questions

As discussed in the previous chapter on program evaluation, the evaluation questions are drawn from the logic model and meshed with the Bennett Hierarchy, which outlines the types of evidence or evaluation questions that must be gathered or researched to evaluate the program. The following table (Table 2) presents a broad list of evaluation questions based on the generic logic model. Each of the identified activities and barriers has a corresponding question in the table. In a real evaluation, these questions might vary or include sub-questions depending upon the local context. Nevertheless, these evaluation questions may guide evaluators and allow them to compare a real quality transparency program against our generic program, which was developed to achieve the general objectives.

TABLE 2: GENERIC QUALITY TRANSPARENCY EVALUATION QUESTIONS

| Logic Model | Bennett Hierarchy | Evaluation Questions |
| --- | --- | --- |
| Outcomes (Long-term) | Impact | |
| Outcomes (Intermediate) | Actions | |
| Outcomes (Short-term) | Learning | |
| Outputs | Reactions | |
| Outputs | Participation | |
| Activities | Activities | |
| Inputs | Resources | |

The evaluation questions and Bennett Hierarchy represent the framework of the entire evaluation, defining its scope and purpose; however, they should not be considered an immutable structure. Instead, it is helpful to think of the evaluation questions as a ladder that the evaluator climbs only as far as the characteristics and limitations of the program warrant. Some quality transparency programs may lack a logical theory of change. For such programs, it is not necessary to consider evaluation questions from the outcomes or even outputs levels, since the program would not be able to achieve a minimum level of success at the inputs or activities levels. This is a pragmatic approach to program evaluation, meant to conserve resources rather than misuse funds by evaluating programs that are unlikely to have had any impact.

1d. Develop observable measures

As the evaluator moves up the ladder of evaluation questions, he or she must select an observable measure and define the minimum level of success for each evaluation question. This step operationalizes the qualitative evaluation questions and converts them into measurable quantities. Although this is a simple process for evaluation questions on the inputs level, it can be more difficult for questions such as, “Did consumers who visited the Web site become more knowledgeable about the differences in quality of care across providers?” In this case, one indicator of improved knowledge could be the opinion of a participating consumer about whether their knowledge of quality differences has improved as a result of using the Web site. Alternatively, another indicator could be the ability of a consumer, who has actually shopped for and had a particular procedure, to name low-ranked and high-ranked providers for that procedure among providers in their geographic area.[44]

After selecting an appropriate indicator, the evaluator must also define what level of change will be considered significant or meaningful. This step should be taken before any data have been collected and analyzed, so that neither the evaluator nor the sponsor of the evaluation will be influenced by the data results. What constitutes a significant level of change will depend on the specifics of the program, including the resources consumed by the program and overall stakeholder expectations. Consequently, it would not be possible or appropriate to define a generic level of significant change for each evaluation question.

This process of selecting an observable measure and minimum level of success only needs to be completed for those evaluation questions that the evaluator deems necessary for judging the program. As discussed in the previous section, it is unnecessary to identify observable measures for higher levels of questions if the program is poorly planned and cannot pass the input/resources level.
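The ladder logic described above can be sketched in a few lines of Python. This is an illustrative sketch only, not part of the evaluation framework itself: the `Measure` class, the `climb` function, the measure names and all threshold values are hypothetical. The level order follows the Bennett Hierarchy as listed in Table 2, from Resources up to Impact, and each minimum level of success is assumed to have been fixed before any data were collected.

```python
from dataclasses import dataclass

# Bennett Hierarchy levels, lowest rung first.
LEVELS = ["Resources", "Activities", "Participation", "Reactions",
          "Learning", "Actions", "Impact"]


@dataclass(frozen=True)
class Measure:
    level: str      # Bennett Hierarchy level the question belongs to
    question: str   # hypothetical key identifying the observable measure
    minimum: float  # minimum level of success, set before data collection


def climb(measures, observed):
    """Return the highest level passed, or None if the lowest level fails.

    Climbing stops at the first level where any measure falls below its
    pre-specified minimum, or where no measures were defined.
    """
    highest = None
    for level in LEVELS:
        at_level = [m for m in measures if m.level == level]
        if not at_level:
            break  # the evaluator defined no measures this high up
        if any(observed[m.question] < m.minimum for m in at_level):
            break  # program did not reach the minimum level of success
        highest = level
    return highest


# Hypothetical measures and observations, for illustration only.
measures = [
    Measure("Resources", "funding_adequacy", 1.0),
    Measure("Activities", "share_of_planned_activities_completed", 0.8),
    Measure("Participation", "share_of_target_audience_aware", 0.10),
]
observed = {
    "funding_adequacy": 1.0,
    "share_of_planned_activities_completed": 0.9,
    "share_of_target_audience_aware": 0.04,
}
highest_level_passed = climb(measures, observed)
```

In this made-up case the program clears the Resources and Activities rungs but falls short at Participation, so no measures for higher levels would need to be developed.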

1e. Develop and implement evaluation design and analyze findings

The evaluator’s next task is to develop the evaluation design or methods for each evaluation question and corresponding indicator. For some evaluation questions, such as those on the lower rungs of the ladder, in the inputs and activities levels, the selection of methods is fairly simple. For example, the majority of the activities evaluation questions can be answered through an analysis of the logic model and an assessment of the Web site by the evaluator, in some cases supplemented by interviews with program designers and managers, and an assessment of the Web site by a health literacy expert. For those programs that pass these lower levels of evaluation, however, the evaluator will need to use a wider (and much more resource-intensive) variety of qualitative or quantitative methods to measure the outputs and outcomes.

The evaluation methods must be selected based on both accuracy and feasibility, which can be a difficult trade-off. This issue is exemplified within the generic quality transparency program model by the evaluation question, “How many consumers visited the Web site?” Of the quality transparency programs that we have researched, program managers typically attempted to answer this question by collecting data on the number of Web site “hits,” or visits to the Web site. Although this is a very low-cost method, it does not provide accurate information on the target audience’s use of the program since there is no reliable method to discern whether the “hit” came from a true consumer or from other users such as researchers, government agency staff, commercial entities, etc. In addition, each unique visitor to the Web site can generate a large number of Web hits, so counting the number of Web hits is not an acceptable approximation of the number of Web site users. Consequently, it is necessary to conduct a survey of the target audience to estimate how many people used the Web site. Although this approach is much more expensive, it yields credible results while a Web-hit counter does not.
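The gap between hits and users can be made concrete with a small sketch. The log format here, a list of `(visitor_id, page)` pairs, is an assumption made purely for illustration; real Web server logs differ, and a visitor identifier may not be available at all.

```python
from collections import Counter


def summarize_traffic(log):
    """Return (total hits, unique visitors) for a list of (visitor_id, page) pairs."""
    hits_per_visitor = Counter(visitor_id for visitor_id, _page in log)
    total_hits = sum(hits_per_visitor.values())
    unique_visitors = len(hits_per_visitor)
    return total_hits, unique_visitors


# Hypothetical log: two visitors generate six hits between them.
example_log = [
    ("a", "/home"),
    ("a", "/hospitals"),
    ("a", "/hospitals/compare"),
    ("b", "/home"),
    ("a", "/about"),
    ("b", "/hospitals"),
]
total_hits, unique_visitors = summarize_traffic(example_log)
```

Even the unique-visitor count says nothing about who the visitors were, so it cannot separate consumers from researchers, agency staff or commercial users. That is why a survey of the target audience is still needed.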

If an evaluator did conduct a survey, it would be most efficient to include questions relating to several evaluation questions, including the following:

- To what extent are consumers aware of the quality transparency initiative?

- Among those who are aware of the initiative, did they visit the Web site? If not, why not?

- Among those who visited the Web site, did they find the quality information useful in comparing providers? Did they find the Web site clear, accessible and user-friendly? Did they become more knowledgeable about provider quality ratings for the services they needed? Did they use quality information from the Web site to choose a provider?

If resource constraints were not a consideration, the ideal type of consumer survey to conduct would be a large survey based on a probability sample (such as a random telephone survey). Such a survey would generate results that can be generalized to the general population, plus or minus the sampling error. However, given the resources likely to be available to most quality transparency evaluations, a large, random consumer survey is certain to be prohibitively expensive, in part because a very large sample likely would be needed to identify a large enough pool of respondents who had used the quality transparency Web site.
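A rough calculation shows why the sample would need to be so large. The sketch below is illustrative only: the function names and the example figures are assumptions, not values from any program studied. It uses the standard approximation for the 95 percent margin of error of a proportion estimated from a simple random sample.

```python
import math


def interviews_needed(target_users, usage_rate):
    """Expected completed interviews needed to reach `target_users`
    respondents who used the Web site, when a fraction `usage_rate`
    of the surveyed population has used it."""
    if not 0 < usage_rate <= 1:
        raise ValueError("usage_rate must be greater than 0 and at most 1")
    return math.ceil(target_users / usage_rate)


def margin_of_error(proportion, n, z=1.96):
    """Approximate margin of error (95 percent when z = 1.96) for a
    proportion estimated from a simple random sample of size n."""
    return z * math.sqrt(proportion * (1 - proportion) / n)
```

For instance, if only 2 percent of the population had used the Web site (an assumed figure) and the evaluator wanted 200 users in the sample, `interviews_needed(200, 0.02)` indicates on the order of 10,000 completed interviews, before allowing for nonresponse.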

A more feasible consumer survey for an evaluator to conduct would be a survey based on a convenience sample. With this type of sample, sampling error is not known, so inferences to the population cannot reliably be made. However, these kinds of surveys, if properly designed and carried out, can provide a useful, relatively affordable way of estimating the magnitude of program outputs and outcomes.

The easiest convenience sample to use would be consumers who visited the quality transparency Web site, and the lowest-cost survey method would be a pop-up survey administered from the Web site. This type of survey could gather information on questions such as the Web site’s perceived usefulness and its effects on consumer knowledge and shopping behavior. However, a pop-up survey (or other survey based only on Web-site users) has important limitations: No information would be obtained about members of the target audience who did not visit the Web site, and no estimates of prevalence of Web site use among the target audience could be generated (because it is not possible to calculate a denominator for the measure).

An alternative convenience sample—one that is broader and more meaningful but also involves higher costs and greater effort than a sample of Web-site users—would be the subset of consumers who need and use the health services that are rated by the quality transparency initiative. One effective approach toward reaching these consumers is for the evaluator to first identify providers of the relevant services and then solicit cooperation from some of these providers in distributing the survey. For example, for a hospital quality transparency initiative, an evaluator might seek the cooperation of local hospitals in distributing copies of a brief survey to discharged patients. With this type of survey, information is not limited to consumers who visited the quality transparency Web site; data also can be collected and analyzed about awareness of the initiative and prevalence of Web site use among the larger pool of consumers needing and using the services rated by the program. In addition to collecting data directly, consumer surveys also serve as a useful tool for identifying respondents who are willing to be contacted later to participate in follow-up interviews or focus groups—methods that can enhance an evaluator’s understanding of consumer perceptions and behavior beyond what can be conveyed in survey questions and responses. Employing a mixed-method approach (consumer survey supplemented by focus groups or interviews) is likely to be the evaluator’s best strategy for gaining a clear understanding of the reach of the program and elements in need of improvement.
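Because a provider-distributed survey reaches consumers whether or not they visited the Web site, it supplies the denominators that a pop-up survey lacks. The sketch below shows one way the responses might be tabulated. The field names `aware`, `visited` and `used_to_choose` are hypothetical, and because the sample is a convenience sample, the resulting shares describe the respondents only and are not population estimates.

```python
def funnel(responses):
    """Summarize awareness and Web site use among surveyed patients.

    Each response is a dict with hypothetical boolean fields
    'aware', 'visited' and 'used_to_choose'.
    """
    n = len(responses)
    aware = sum(r["aware"] for r in responses)
    visited = sum(r["aware"] and r["visited"] for r in responses)
    used = sum(
        r["aware"] and r["visited"] and r["used_to_choose"] for r in responses
    )

    def share(numerator, denominator):
        # None signals that the share is undefined (empty denominator).
        return numerator / denominator if denominator else None

    return {
        "respondents": n,
        "aware_share": share(aware, n),
        "visited_share_of_aware": share(visited, aware),
        "visited_share_of_all": share(visited, n),
        "chose_share_of_visitors": share(used, visited),
    }
```

The `visited_share_of_all` figure is the prevalence measure that cannot be computed from a survey of Web site users alone.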

The tools used to assess a program’s reach and impact on consumers also are applicable when assessing reach and impact on a program’s other primary audience—providers. A combination of surveys, focus groups and interviews can be employed, depending on the specifics of the quality transparency program, the number and types of providers for whom it is reporting performance, and the resources available to the evaluation. Because provider surveys tend to be very costly to administer, and because these surveys may not capture detailed and nuanced provider responses, conducting interviews may be the most effective approach to understanding providers’ awareness of, use of information from, and reactions to the program.[45] Interviews should include not only the providers being reported by the quality transparency program, but also other providers who interact with (e.g., make referrals to) the rated providers, relevant provider organizations (e.g., state and local medical and hospital associations) and other community stakeholders.

For programs that have shown significant impact at the program output and short-term/intermediate outcome levels, ideally the evaluator should be able to develop measures and methods for assessing the program’s long-term impact. In any program evaluation, this stage is the most challenging, because (a) long-term outcomes may be very difficult to observe and measure; and (b) long-term outcomes may show a significant level of change because of factors other than the program being evaluated. The latter is particularly true of quality transparency programs, because changes in quality can result from many factors (the influence of other quality transparency initiatives, pay-for-performance programs and other financial incentives, medical innovations, changes in information technology, market developments, and regulatory and other policy interventions) that not only occur simultaneously but often interact with one another.

To gauge the long-term effects of a particular quality transparency program, conducting interviews with key providers, stakeholders and experts may give the evaluator important insights into how substantial an impact that program might have made in achieving long-term objectives (improving provider performance) and any unintended outcomes the program might have had. If the prevailing opinion of these experts is that the program has made a positive impact on long-term objectives, ideally the evaluator then would be able to validate this by conducting a multivariate quantitative analysis to supplement the initial qualitative data collection approach.