September 2020


ABSTRACT

Per Section 223(d)(7)(A) of the Protecting Access to Medicare Act (PAMA) of 2014 (Public Law 113-93), the HHS Secretary must submit to Congress an annual report on the use of funds provided under all demonstration programs conducted under this subsection, not later than one year after the date on which the first state is selected for a demonstration program under this subsection, and annually thereafter. Each report shall include assessments of: (1) access to community-based mental health services; (2) the quality and scope of services provided by Certified Community Behavioral Health Clinics (CCBHCs); and (3) the impact of the demonstration programs on the federal and state costs of a full range of mental health services.

This 2019 report informs the third annual report to Congress and details how states have been reporting and utilizing the required quality measures for quality improvement. This report also assesses the costs to CCBHCs for providing the required services, and compares the demonstration payment rates to actual costs.

Subsequent annual reports to Congress on this demonstration will include more details, benefitting from data pending on quality measures and cost reports now being collected by CCBHCs and by demonstration states as required. In addition, claims analyses will be conducted to answer the questions about the demonstration posed by Congress.

This report was prepared under contract #HHSP233201600017I between HHS's ASPE/BHDAP and Mathematica Policy Research to conduct the national evaluation of the demonstration. For additional information about this subject, you can visit the BHDAP home page at https://aspe.hhs.gov/bhdap or contact the ASPE Project Officer, Judith Dey, at HHS/ASPE/BHDAP, Room 424E, H.H. Humphrey Building, 200 Independence Avenue, S.W., Washington, D.C. 20201. Her e-mail address is: Judith.Dey@hhs.gov.

DISCLAIMER: The opinions and views expressed in this report are those of the authors. They do not reflect the views of the Department of Health and Human Services, the contractor or any other funding organization. This report was completed and submitted on October 21, 2019.

TABLE OF CONTENTS

I. BACKGROUND

A. Description of the Certified Community Behavioral Health Clinic Demonstration

B. Goals of the National Evaluation

A. Interviews with State Officials

B. CCBHC Progress Reports

C. Site Visits

D. State Reports of PPS Rates

E. CCBHC DY1 Cost Reports

III. CERTIFIED COMMUNITY BEHAVIORAL HEALTH CLINIC PAYMENT RATES AND COSTS OF CARE

A. How did States Establish the CCBHC Rates? What were the DY1 Rates?

B. To What Extent did CCBHCs Succeed in Collecting and Reporting Information Requested in the Cost Reporting Templates?

C. What were the Total Costs and Main Cost Components in CCBHCs on a Per Visit-Day or Per Visit-Month Basis?

D. How did Visit-Day and Visit-Month Rates Compare with Actual Visit-Day and Visit-Month Costs Incurred during DY1?

E. Did States Change DY2 Rates based on the Experience of DY1?

IV. REPORTING OF QUALITY MEASURES

A. To What Extent do States and CCBHCs Expect to Succeed in Collecting and Reporting Data on the Quality Measures According to the Prescribed Specifications?

B. How have CCBHCs and States used Performance on the Quality Measures to Improve the Care They Provide?

C. What Measures and Thresholds did States use to Trigger QBPs in DY1?

APPENDICES

- APPENDIX A: PPS-2 Population-Specific DY1 Rates and Blended Rates across Clinics

- APPENDIX B: Outlier Payments in PPS-2 States

- APPENDIX C: Distribution of Labor Costs

LIST OF FIGURES

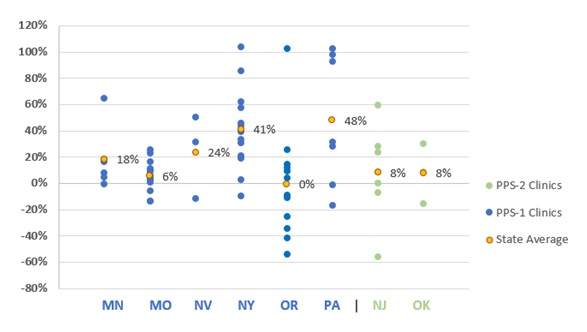

- FIGURE ES.1: DY1 Rates as Percent Above or Below DY1 Costs Per Visit-Day or Per Visit-Month for Clinics by State

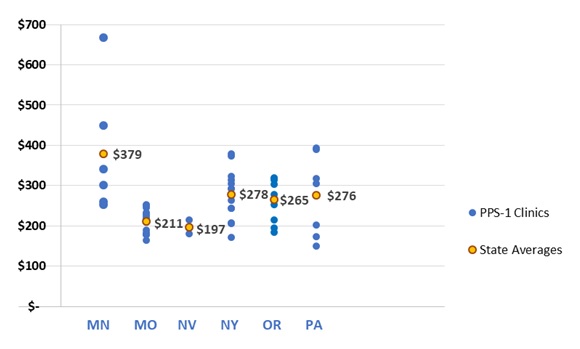

- FIGURE III.1: DY1 Visit-Day Rates for PPS-1 Clinics by State

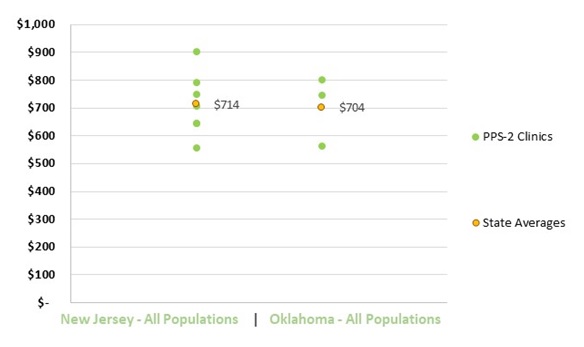

- FIGURE III.2: DY1 Average Blended Visit-Month Rates for PPS-2 Clinics by State

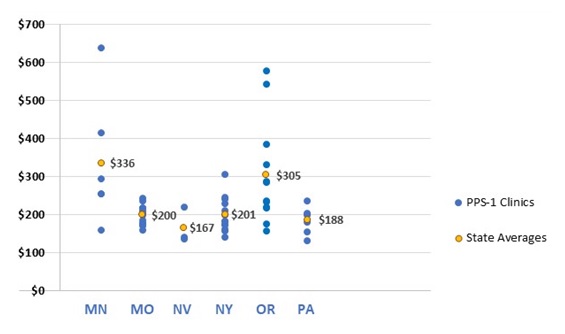

- FIGURE III.3: DY1 Daily Per-Visit Costs for PPS-1 Clinics by State

- FIGURE III.4: DY1 Blended Cost Per Visit-Month for PPS-2 Clinics by State

- FIGURE III.5: Major Cost Components Across All Clinics in DY1

- FIGURE III.6: Proportion of Clinic Costs Allocated to Direct Labor in DY1 by State

- FIGURE III.7: Proportion of Labor Costs by Staff Category Across All Clinics

- FIGURE III.8: DY1 Rate Paid as Share of Cost Per Visit-Day or Per Visit-Month for Clinics by State

- FIGURE C.1: Proportion of Labor Costs by Staff Category Across All PPS-1 Clinics

- FIGURE C.2: Proportion of Labor Costs by Staff Category Across All PPS-2 Clinics

LIST OF TABLES

- TABLE ES.1: Number of CCBHCs, Demonstration Start Date, and PPS

- TABLE ES.2: Quality Measures Used for Determining Quality Bonus Payments

- TABLE I.1: Number of CCBHCs, Demonstration Start Dates, and PPS Model

- TABLE III.1: New Jersey Five-Level Classification for PPS-2 Rates

- TABLE III.2: Oklahoma Six-Level Classification for PPS-2 Rates

- TABLE IV.1: Required CCBHC and State-Reported Quality Measures

- TABLE IV.2: Features of CCBHC EHR and HIT Systems

- TABLE IV.3: Percentage of CCBHCs that Used Demonstration Quality Measures to Support Changes in Clinical Practice by State

- TABLE IV.4: Quality Measures Used to Determine Quality Bonus Payments in DY1

- TABLE IV.5: Estimated Funding Available for QBPs

- TABLE A.1: New Jersey CCBHC Rates for DY1

- TABLE A.2: Oklahoma CCBHC Rates for DY1

- TABLE B.1: Thresholds for Triggering an Outlier Payment in New Jersey and Oklahoma

- TABLE B.2: Number of Threshold Payments Made to Clinics in New Jersey

ACRONYMS

The following acronyms are mentioned in this report and/or appendices.

| Acronym | Definition |
|---|---|
| ADD | Follow-up Care for Children Prescribed ADHD Medication |
| ADHD | Attention Deficit Hyperactivity Disorder |
| AMA | American Medical Association |
| AMM | Antidepressant Medication Management |
| ASAM | American Society of Addiction Medicine |
| ASPE | HHS Office of the Assistant Secretary for Planning and Evaluation |
| BA | Bachelor of Arts |
| CCBHC | Certified Community Behavioral Health Clinic |
| CDF-A | Screening for Clinical Depression and Follow-Up Plan |
| CMHC | Community Mental Health Center |
| CMS | HHS Centers for Medicare & Medicaid Services |
| DCO | Designated Collaborating Organizations |
| DY | Demonstration Year |
| DY1 | First Demonstration Year |
| DY2 | Second Demonstration Year |
| EHR | Electronic Health Record |
| FTE | Full-Time Equivalent |
| FUH | Follow-Up after Hospitalization for mental illness |
| HEDIS | Healthcare Effectiveness Data and Information Set |
| HHS | U.S. Department of Health and Human Services |
| HIT | Health Information Technology |
| ICD | International Classification of Diseases |
| IET | Initiation and Engagement of Alcohol and other Drug Dependence Treatment |
| MEI | Medicare Economic Index |
| MHSIP | Mental Health Statistics Improvement Program |
| NCQA | National Committee for Quality Assurance |
| NQF | National Quality Forum |
| PAMA | Protecting Access to Medicare Act |
| PCPI | Physician Consortium for Performance Improvement |
| PCR-AD | Plan All-Cause Readmission Rate |
| PHQ | Patient Health Questionnaire |
| PPS | Prospective Payment Systems |
| PPS-1 | PPS First Model/Methodology |
| PPS-2 | PPS Second Model/Methodology |
| PTSD | Post-Traumatic Stress Disorder |
| QBP | Quality Bonus Payment |
| SAA | Adherence to Antipsychotic Medications for Individuals with Schizophrenia |
| SAMHSA | HHS Substance Abuse and Mental Health Services Administration |
| SED | Serious Emotional Disturbance |
| SMI | Serious Mental Illness |
| SRA | Suicide Risk Assessment |
| SUD | Substance Use Disorder |

EXECUTIVE SUMMARY

Section 223 of the Protecting Access to Medicare Act (PAMA), enacted in April 2014, authorized the Certified Community Behavioral Health Clinic (CCBHC) demonstration to allow states to test new strategies for delivering and reimbursing services provided in community mental health centers (CMHCs). The demonstration aims to improve the availability, quality, and outcomes of ambulatory services provided in CMHCs by establishing a standard definition and criteria for CCBHCs and developing new prospective payment systems (PPS) that account for the total cost of providing comprehensive services to all individuals who seek care. The demonstration also aims to provide coordinated care that addresses both behavioral and physical health conditions. CCBHCs and demonstration states must also report a common set of quality measures and report their costs as a condition of participating in the demonstration.

Both the payment and quality reporting requirements are central features of the CCBHC model. Historically, Medicaid has reimbursed CMHCs through negotiated fee-for-service or managed care rates, and there is some evidence that these rates did not cover the full cost of CMHC services.[1] The CCBHC demonstration addresses this problem by allowing states to develop a PPS that reimburses CCBHCs for the total cost of providing care to their patients based on projected costs. Specifically, states selected between two PPS models developed by the U.S. Department of Health and Human Services (HHS) Centers for Medicare & Medicaid Services (CMS) (although states could exercise some flexibility in operationalizing the models). The first model (PPS-1) provides CCBHCs with a fixed daily payment for each day that a Medicaid beneficiary receives services from the clinic (this is similar to the PPS model used by Federally Qualified Health Centers). The PPS-1 model also includes a state option to provide quality bonus payments (QBPs) to CCBHCs that meet state-specified performance requirements on quality measures. The second model (PPS-2) provides CCBHCs with a fixed monthly payment for each month in which a Medicaid beneficiary receives services from the clinic. PPS-2 rates have multiple rate categories--a standard rate and separate rates for special populations that are defined by the state. PPS-2 also requires states to make QBPs based on quality measure performance, and outlier payments for costs above and beyond a specific threshold (that is, payment adjustments for extremely costly Medicaid beneficiaries).

Aligning the payment with the actual cost of care was intended to provide CCBHCs with the financial resources necessary to provide high-quality comprehensive care. In addition, CCBHCs receive PPS payments based on anticipated daily or monthly per-patient cost rather than the cost of specific services provided during any particular patient visit. This allows clinics flexibility in the services they provide and the staffing models they use to meet the needs of individual patients without requiring specific billable services to ensure financial sustainability. Finally, the PPS financially incentivizes the delivery of high-quality care by rewarding performance on quality measures.

In October 2015, HHS awarded planning grants to 24 states to begin certifying CMHCs to become CCBHCs, develop their PPS, and plan for the implementation of the demonstration. To support the first phase of the demonstration, HHS developed criteria (as required by PAMA) for certifying CCBHCs in six important areas: (1) staffing; (2) availability and accessibility of services; (3) care coordination; (4) scope of services; (5) quality and reporting; and (6) organizational authority.[2] The criteria established a minimum threshold for the structures and processes that CCBHCs should have to provide high-quality care, although states may exercise some discretion in implementing the criteria to reflect their particular needs.

CCBHCs must provide coordinated care and offer a comprehensive range of nine types of services to all who seek help, including but not limited to those with serious mental illness (SMI), serious emotional disturbance (SED), and substance use disorder.[3] Services must be person- and family-centered, trauma-informed, and recovery-oriented, and the integration of physical and behavioral health care must serve the "whole person." To ensure the availability of the full scope of these services, CCBHCs can partner with Designated Collaborating Organizations (DCOs) to provide selected services. DCOs are entities that are not under the direct supervision of a CCBHC but are engaged in a formal relationship with a CCBHC and provide services under the same requirements. CCBHCs that engage DCOs maintain clinical responsibility for services provided by a DCO to CCBHC consumers, and the CCBHC provides payment to the DCO.

In December 2016, HHS selected eight states to participate in the demonstration (listed in Table ES.1) from among the 24 states that received planning grants. As required by PAMA, HHS selected the states based on the ability of their CCBHCs to: (1) provide the complete scope of services described in the certification criteria; and (2) improve the availability of, access to, and engagement with a range of services (including assisted outpatient treatment). As shown in Table ES.1, six of the eight demonstration states (representing a total of 56 CCBHCs) selected the PPS-1 model and two states (representing ten CCBHCs) selected the PPS-2 model. As of October 2019, the demonstration will end on November 21, 2019.

TABLE ES.1. Number of CCBHCs, Demonstration Start Date, and PPS

| State | Number of CCBHCs | Demonstration Start Date | PPS |
|---|---|---|---|
| Minnesota | 6 | July 1, 2017 | PPS-1 [b] |
| Missouri | 15 | July 1, 2017 | PPS-1 [b] |
| Nevada | 3 [a] | July 1, 2017 | PPS-1 [b] |
| New Jersey | 7 | July 1, 2017 | PPS-2 |
| New York | 13 | July 1, 2017 | PPS-1 [b] |
| Oklahoma | 3 | April 1, 2017 | PPS-2 |
| Oregon | 12 | April 1, 2017 | PPS-1 |
| Pennsylvania | 7 | July 1, 2017 | PPS-1 [b] |

SOURCE: Mathematica/RAND review of CCBHC demonstration applications and telephone consultations with state officials.

NOTES: As of October 2019, the demonstration ends in all states on November 21, 2019.

Goals of the National Evaluation

In September 2016, the HHS Office of the Assistant Secretary for Planning and Evaluation (ASPE) contracted with Mathematica and its subcontractor, the RAND Corporation, to conduct a comprehensive national evaluation of the CCBHC demonstration. ASPE is overseeing the evaluation in collaboration with CMS. Working with these federal partners, Mathematica and RAND designed a mixed-methods evaluation to examine the implementation and outcomes of the demonstration and to provide information for HHS to include in its reports to Congress.

Specifically, Section 223 of PAMA mandates that HHS's reports to Congress must include: (1) an assessment of access to community-based mental health services under Medicaid in the area or areas of a state targeted by a demonstration program as compared to other areas of the state; (2) an assessment of the quality and scope of services provided by CCBHCs as compared to community-based mental health services provided in states not participating in a demonstration program and in areas of a demonstration state not participating in the demonstration; and (3) an assessment of the impact of the demonstration on the federal and state costs of a full range of mental health services (including inpatient, emergency, and ambulatory services). To date, the evaluation has focused on providing critical information to Congress and the larger behavioral health community about the implementation of the CCBHC model across the eight demonstration states.

In June 2018, Mathematica and RAND submitted to ASPE the report "Interim Implementation Findings from the National Evaluation of the Certified Community Behavioral Health Clinic Demonstration."[4] The report described the progress that states and CCBHCs made (through April 2018) in implementing the demonstration and their successes and challenges. In June 2019, Mathematica and RAND submitted a second report, "Implementation Findings from the National Evaluation of the Certified Community Behavioral Health Clinic Demonstration,"[5] which provided updated information on the demonstration's implementation through April 2019 (approximately the first 22 months of the demonstration for six states and 24 months for the remaining two states).

In this latest report, we describe the costs during the first demonstration year (DY1) and the experiences of states and CCBHCs reporting the required quality measures. Given the novelty of reimbursing CCBHCs through a PPS, state and federal policymakers and other behavioral health system stakeholders have an interest in understanding the functioning of the PPS and the extent to which PPS rates covered the full costs of care. In addition, the adoption of electronic health records (EHRs) and other health information technology (HIT) has been slower among behavioral health providers than in other sectors of the health care system (in part because these providers have not historically received the same incentives as medical providers to adopt such technologies).[6] Stakeholders therefore also have an interest in understanding how CCBHCs changed their EHR/HIT systems to facilitate reporting the required quality measures. Stakeholders in the demonstration are also interested in how CCBHCs and states used performance on those measures to improve care and make QBPs to CCBHCs.

The findings in this report draw on data collected from: (1) interviews with state Medicaid and behavioral health officials; (2) progress reports submitted by all 66 CCBHCs; (3) cost reports submitted by all 66 CCBHCs; and (4) site visits to select CCBHCs. Most CCBHCs and states did not submit quality measure performance data to HHS in time for this report. As a result, the information on quality measures in this report focuses on CCBHCs' and states' experiences reporting the measures and on the enhancements they made to data collection and reporting systems to facilitate reporting. This information is based on our interviews with state officials, CCBHC progress reports, and site visits to CCBHCs.

A. Findings Regarding CCBHC PPS Rates and Costs

During the planning grant year, states worked with clinics that were candidates for CCBHC certification to set visit-day rates for PPS-1 states or visit-month rates for PPS-2 states. At the end of DY1, the CCBHCs submitted detailed cost reports, which include information on total costs of clinic operations. Because the rates were set before the demonstration began, they might differ from the actual costs reported by the clinics at the end of DY1. This report summarizes the rate-setting process and the costs of providing care in the CCBHCs during DY1. We also highlight potential reasons that the rates differed from the DY1 costs.

Establishment of PPS rates. States set the PPS rates using a formula in which projected total allowable costs were divided by the projected number of visit-days (for PPS-1) or visit-months (for PPS-2). To set the rates, states collected data on clinics' historical operating costs and visits using a cost report template provided by CMS. Clinics in seven of the eight participating states did not have experience in collecting and reporting their operating costs prior to the demonstration. In these states, officials reported that collecting this information for the purposes of setting rates was a major challenge for clinics. State officials also reported that they anticipated that the rates during DY1 would differ from the actual DY1 costs due to the limitations of the historical data on costs, particularly for services included in the CCBHC criteria that the clinics either did not deliver or did not bill separately prior to the demonstration. As a result, states and CCBHCs had to project the costs and number of visits for these new services based on very limited information or uncertain assumptions. Several states provided technical support (such as funding for accounting consultations) to the clinics to improve their cost-reporting capabilities.
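The rate-setting formula described above can be written compactly. This is only a restatement of the sentence in the text; the symbols are introduced here for illustration and do not appear in the CMS cost report template.

$$
\text{PPS rate} = \frac{\text{projected total allowable costs}}{\text{projected number of visit-days (PPS-1) or visit-months (PPS-2)}}
$$

As a purely hypothetical illustration (the figures are not drawn from any clinic's cost report), a PPS-1 clinic projecting \$2,500,000 in total allowable costs and 10,000 visit-days would have a visit-day rate of \$2,500,000 / 10,000 = \$250.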

The average daily rate across the 56 clinics in PPS-1 states was $264 (the median rate was $252, and rates ranged from $151 to $667). PPS-1 rates were, on average, higher in urban CCBHCs than in rural CCBHCs, and higher in CCBHCs that served a smaller number of clients (as measured by total visit-days) than in those that served a larger number of clients. Urban CCBHCs were likely to have higher rates due to higher labor costs, and larger CCBHCs were likely to have lower rates because fixed costs were apportioned across a larger number of visit-days. PPS-1 rates were also, on average, higher among CCBHCs in which medical doctors made up a larger share of total full-time equivalent staff. The average blended PPS-2 rate was $714 in New Jersey and $704 in Oklahoma.[7] PPS-2 rates tended to be higher in CCBHCs that served a smaller number of clients than in those that served a larger number of clients, as measured by total visit-months.

Cost-reporting by clinics. All the CCBHCs submitted cost reports that were approved by their state governments. However, in discussions with state officials and site visits to CCBHCs, we often heard about the challenges of reporting accurate cost information. To assist CCBHCs in providing accurate cost report information, states reported providing extensive technical assistance to clinic financial and administrative staff during DY1. Some states hired consulting firms to work directly with the CCBHCs on the reports during DY1. State officials in Pennsylvania instituted a "dry run" of the cost reports, which covered the first six months of the demonstration. Having the clinics go through the process of collecting and reporting cost information helped the state identify and address reporting challenges before the first federally mandated cost reports were due. Overall, CCBHCs were ultimately able to provide the information in the cost reports.

Total costs of CCBHC operations during DY1. Across all PPS-1 clinics, the average DY1 visit-day cost was $234 and ranged from $132 to $639. The state average visit-day cost ranged from $167 in Nevada to $336 in Minnesota. Across all PPS-2 clinics, the blended visit-month costs averaged $759 and ranged from $443 to $2,043. The state average visit-month cost was $679 in Oklahoma and $793 in New Jersey.

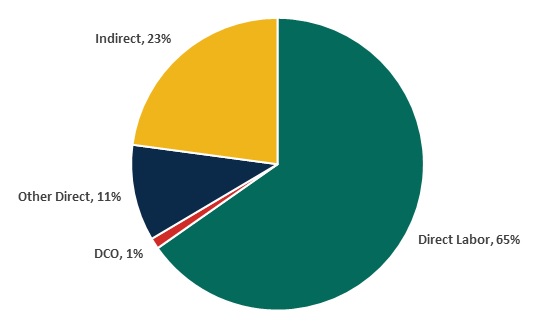

Direct labor costs accounted for 65 percent of the total allowable costs for all CCBHCs. This proportion is similar to the proportion reported for outpatient care centers in the Census Bureau's Service Annual Survey. According to that survey, labor costs accounted for 68 percent of total outpatient care center costs in 2016.[8] Indirect costs accounted for 23 percent of costs, and other direct costs accounted for 11 percent of costs. The distribution of costs across these categories was similar across states. About 1 percent of DY1 costs were payments by CCBHCs to DCOs. Although the total amount paid to DCOs was a small percentage of costs across all CCBHCs, among the 34 CCBHCs that had DCOs, the proportion of total costs paid to DCOs ranged from 0.02 percent to 14 percent and averaged 2 percent. The percentages of costs allocated to direct labor, indirect costs, other direct costs, and DCOs were similar for PPS-1 and PPS-2 states.

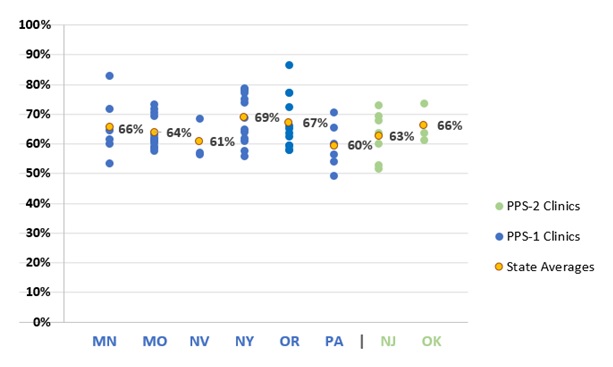

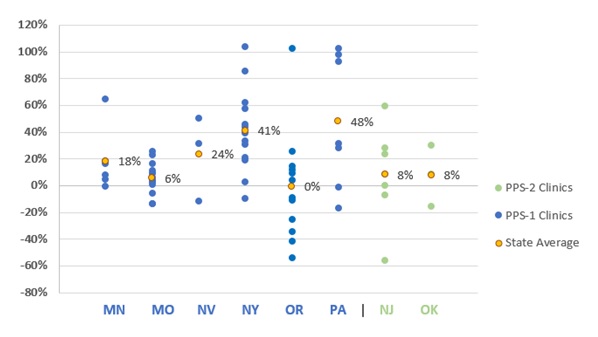

Rates relative to costs during DY1. In seven of the eight demonstration states, the rate per visit-day or per visit-month was higher, on average, than the cost per visit-day or per visit-month during DY1. As illustrated in Figure ES.1, four of the eight states had rates that, on average, were no more than 10 percent higher than costs, and four of the states had rates that, on average, were more than 10 percent higher than costs, ranging from 18 percent to 48 percent above cost. In Oregon and New Jersey, the rates were similar to costs on average, but the rate-to-cost ratio varied widely across clinics. In contrast, the rate-to-cost ratios for Missouri CCBHCs were closely grouped around the state average.

FIGURE ES.1. DY1 Rates as Percent Above or Below DY1 Costs Per Visit-Day or Per Visit-Month for Clinics by State

SOURCE: Mathematica and the RAND Corporation analysis of DY1 CCBHC cost reports.

NOTE: A positive percentage indicates how much the rate was greater than the cost and a negative percentage indicates how much the rate was less than the cost.
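The sign convention in the Figure ES.1 note (positive when the rate exceeds the cost, negative when it falls short) can be sketched in a few lines of Python. This is an illustrative calculation, not the evaluation team's method: the function name `percent_above_cost` is hypothetical, and the inputs are the summary averages quoted in this section rather than clinic-level data. Because the figure is built from clinic-level cost reports, these ratios of averages will not necessarily match the state values plotted there.

```python
def percent_above_cost(rate: float, cost: float) -> float:
    """Percent by which a rate exceeds (+) or falls short of (-) a cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (rate - cost) / cost * 100.0


# (average DY1 rate, average DY1 cost) in dollars, as quoted in this summary.
# Assumption: comparing averages is only a rough proxy for clinic-level ratios.
summary_averages = {
    "All PPS-1 clinics (per visit-day)": (264.0, 234.0),
    "New Jersey (per visit-month)": (714.0, 793.0),
    "Oklahoma (per visit-month)": (704.0, 679.0),
}

results = {
    label: round(percent_above_cost(rate, cost), 1)
    for label, (rate, cost) in summary_averages.items()
}

for label, pct in results.items():
    print(f"{label}: {pct:+.1f}%")
```

On these summary averages, the PPS-1 rate is roughly 13 percent above cost, the New Jersey rate roughly 10 percent below cost, and the Oklahoma rate roughly 4 percent above cost.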

There are at least two potential reasons for the tendency of the CCBHC rates to be higher than costs during DY1. First, as described above, state officials indicated in our interviews that the rates were set under the assumption that the CCBHCs would be fully staffed throughout the demonstration project. Although state officials recognized that not all CCBHCs would be fully staffed at the outset of the demonstration, it was important to set the rates under this assumption in order to avoid constraining hiring. If staff positions went unfilled, the clinic would have lower costs than had been anticipated and their costs would be lower than their rate. Second, as we described in a separate report, CCBHCs made efforts to increase access to services, including introduction of "open-access" systems where consumers could receive same-day appointments.[9] During site visits, several CCBHCs reported increases in the volume of consumers they see. Visit-days and visit-months would also increase if consumers were seen more frequently, on average, than the historical data on which the rates were set would suggest. If the number of consumer visits increased, while the costs were relatively constant, the actual costs per visit-day or visit-month would be lower than had been anticipated. Moreover, if the staffing costs were lower than anticipated while the number of visit-days or visit-months were greater than anticipated, the divergence between the rates and costs would be magnified.
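The compounding effect described in the last sentence above can be made explicit. The notation is introduced here for illustration only: let $C_p$ and $V_p$ be the projected allowable costs and projected visit-days (or visit-months) used to set the rate, and let $C_a$ and $V_a$ be the actual DY1 values.

$$
\frac{\text{rate}}{\text{actual unit cost}} = \frac{C_p / V_p}{C_a / V_a} = \frac{C_p}{C_a} \times \frac{V_a}{V_p}
$$

Each factor exceeds 1 when costs come in below projection ($C_a < C_p$) or volume comes in above projection ($V_a > V_p$), and the two effects multiply. In a hypothetical case where actual costs are 10 percent below projection and actual visit-days are 10 percent above projection, the ratio is $(1/0.9) \times 1.1 \approx 1.22$, so the rate would be about 22 percent above the actual cost per visit-day.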

Changes to rates for the second demonstration year (DY2). States were able to raise or lower their PPS rates for DY2 to bring rates into closer alignment with costs. States could re-base the rates (that is, re-calculate them based on the DY1 cost reports), adjust them for inflation using the Medicare Economic Index (MEI) (a measure of inflation in the health care sector), or combine the two approaches. Six of the demonstration states re-based CCBHC rates: Minnesota, New Jersey, New York, Nevada, Oklahoma, and Pennsylvania. Oregon and Missouri chose only to adjust the rates between DY1 and DY2 based on the MEI. State officials explained that they decided to adjust rather than re-base because they were not comfortable that enough time had passed, or that sufficient cost, utilization, and staff hiring data were available, to appropriately inform re-basing.
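The difference between the two DY2 options can be illustrated with a minimal Python sketch. It assumes the simplest reading of the text: an inflation adjustment multiplies the DY1 rate by one plus the MEI increase, and re-basing divides DY1 allowable costs by DY1 visits. The function names, the 2 percent MEI value, and the clinic figures are all hypothetical; states' actual re-basing calculations may have included further adjustments not described here.

```python
def inflation_adjusted_rate(dy1_rate: float, mei_increase: float) -> float:
    """DY2 rate from the DY1 rate and an MEI increase (0.02 means 2 percent)."""
    return dy1_rate * (1.0 + mei_increase)


def rebased_rate(dy1_allowable_costs: float, dy1_visits: float) -> float:
    """DY2 rate re-calculated from DY1 cost report totals."""
    if dy1_visits <= 0:
        raise ValueError("dy1_visits must be positive")
    return dy1_allowable_costs / dy1_visits


# Hypothetical PPS-1 clinic; none of these figures come from the report.
dy1_rate = 250.0                    # dollars per visit-day paid in DY1
hypothetical_mei = 0.02             # assumed MEI increase, for illustration only
dy1_allowable_costs = 2_300_000.0   # dollars reported in the DY1 cost report
dy1_visit_days = 10_000.0           # visit-days reported in the DY1 cost report

adjusted = inflation_adjusted_rate(dy1_rate, hypothetical_mei)
rebased = rebased_rate(dy1_allowable_costs, dy1_visit_days)

print(f"Inflation-adjusted DY2 rate: ${adjusted:.2f}")
print(f"Re-based DY2 rate: ${rebased:.2f}")
```

In this hypothetical case the DY1 rate exceeded the DY1 cost per visit-day, so re-basing lowers the rate while the inflation adjustment raises it. This shows why the choice between the two approaches matters when DY1 rates and costs diverge.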

B. Findings Regarding CCBHC Quality Measure Reporting

CCBHC criteria specify 21 quality measures for the demonstration, including nine clinic-reported measures and 12 state-reported measures. Clinic-reported quality measures are primarily process measures that focus on how clinics are achieving service provision targets (for example, time to initial evaluation, whether screening and services were provided) and are based on clinical data typically derived from EHRs or other electronic administrative sources. State-reported measures focus on CCBHC consumer characteristics (for example, housing status), screening and treatment of specific conditions, follow-up and readmission, and consumer and family experiences of care. (See Table IV.1 in the report for a list of the measures and potential data sources that CCBHCs and states use to calculate the measures.)

Development of infrastructure to report measures. Nearly all clinics (97 percent) across all states made changes to their EHRs or HIT systems to meet certification criteria and support quality measure and other reporting for the CCBHC demonstration. The most commonly reported changes were modification of EHR/HIT specifications (for example, data fields; forms) to support collection and output of data required for quality measure reporting, and the addition of features to allow the electronic exchange of clinical information with DCOs and other external providers. State officials reported investing considerable resources, including extensive technical assistance in some cases, prior to and following the demonstration launch to ensure that participating clinics had appropriate data systems in place to meet the demonstration quality reporting requirements. This highlights the importance of building out technological infrastructure to support data collection for mandated quality reporting.

In addition, many clinics modified approaches to screening and the use of standardized tools to assess specific indicators (for example, implementing the Patient Health Questionnaire (PHQ-9) to assess symptoms of depression for the 12-month depression remission measure). During site visits, many CCBHC staff reported that similar screening tools had been used prior to the demonstration, but virtually all sites reported implementing changes to screening protocols (for example, the frequency with which screenings were conducted) and how screening data were used in clinical practice, including how and where results were displayed in a consumer's chart. These changes were typically accompanied by extensive staff trainings and frequent data reviews to ensure provider compliance with screening and data entry procedures.

Successes and challenges reporting measures. Many clinics experienced challenges in the early stages of the demonstration with data collection and reporting the CCBHC-reported measures. In interviews with state officials during DY1, all states reported that many clinics initially experienced challenges with their EHR/HIT systems, particularly when collecting and aggregating data needed to generate quality measures (for example, querying databases to specify the correct numerators and denominators within a given timeframe). State officials most often reported challenges associated with CCBHCs' lack of familiarity with the required measure specifications and difficulty obtaining certain variables, such as new service codes or new population subgroups, from clinic EHRs. Many clinic staff echoed these concerns during interviews on CCBHC site visits. In the early stages of the demonstration, many clinics relied upon ad hoc strategies to overcome these challenges and facilitate data collection and reporting. To help clinics resolve these early challenges, state officials provided ongoing technical assistance in the form of training webinars and direct support through multiple channels (phone, online, in-person) to: (1) explain the measures and the information needed from the CCBHCs to report on each of them; (2) provide examples of how to extract information and calculate measures from EHR data (for example, what queries to run and what numerators and denominators to use); and (3) explain how to complete the reporting template. By the end of DY2, officials in all states reported that the majority of issues surrounding CCBHC-reported quality measures had been resolved.

Use of quality measures to inform quality improvement. Although CCBHCs and states were not required to use quality measure data to monitor or improve the quality of care they provide, both state officials and clinics reported using quality measure data to support a wide range of quality improvement efforts. For example, officials in all states reported using quality measures data to support ongoing monitoring and oversight of CCBHCs (for example, to assess compliance with certification criteria). In addition, Pennsylvania utilized a "dashboard" that displayed CCBHC performance on quality measures and allowed individual CCBHCs to readily compare their performance against other CCBHCs in the state. Many clinics also reported using CCBHC quality measures to support quality improvements, although the use of individual quality measures (for example, time to initial evaluation; depression remission; suicide risk assessment [SRA]) varied depending on site-specific areas of focus.

TABLE ES.2. Quality Measures Used for Determining Quality Bonus Payments

| Measure | Required or Optional for Determining QBPs[a] | States with QBPs that Used the Measure to Determine QBPs[b] |
|---|---|---|
| CCBHC-Reported Measures | | |
| Child and adolescent major depressive disorder: SRA (SRA-BH-C) | Required | All |
| Adult major depressive disorder: SRA (SRA-BH-A; NQF-0104) | Required | All |
| Screening for Clinical Depression and Follow-Up Plan (CDF-A) | Optional | MN |
| Depression Remission at 12 months (NQF-0710) | Optional | None |
| State-Reported Measures | | |
| Adherence to Antipsychotic Medications for Individuals with Schizophrenia (SAA-BH) | Required | All |
| Follow-Up After Hospitalization for Mental Illness, ages 21+ (adult) (FUH-BH-A) | Required | All |
| Follow-Up After Hospitalization for Mental Illness, ages 6-21 (child/adolescent) (FUH-BH-C) | Required | All |
| Initiation and Engagement of Alcohol and other Drug Dependence Treatment (IET-BH) | Required | All |
| Plan All-Cause Readmission Rate (PCR-AD) | Optional | MN, NV, NY |
| Follow-up Care for Children Prescribed ADHD Medication (ADD-C) | Optional | None |
| Antidepressant Medication Management (AMM-A) | Optional | None |

SOURCE: Appendix III -- Section 223 Demonstration Programs to Improve Community Mental Health Services Prospective Payment System (PPS) Guidance (Available at https://www.samhsa.gov/sites/default/files/grants/pdf/sm-16-001.pdf#page=94. Accessed July 26, 2019) and data from interviews with state Medicaid and behavioral health agency officials conducted by Mathematica and the RAND Corporation, February 2019.

Quality Bonus Payment (QBP) programs. QBP programs were optional for states that implemented PPS-1 and required for states that implemented PPS-2. CMS specified six quality measures that states were required to use if they implemented a QBP program; states could choose from among an additional five measures or ask for approval for use of non-listed measures (required and optional measures are listed in Table ES.2). All demonstration states except Oregon offered bonus payments based on CCBHCs' performance on quality measures. Pennsylvania, Missouri, New Jersey, and Oklahoma used only the six CMS-required measures to determine bonus payments. Minnesota, Nevada, and New York also used the CMS-optional measure for Plan All-Cause Readmission Rate (PCR-AD) in addition to the six CMS-required measures. In addition to the six required measures, Minnesota also used the CMS-optional measure Screening for Clinical Depression and Follow-Up Plan (CDF-A) in determining QBPs, and New York added two state-specific measures based on state data regarding suicide attempts and deaths from suicide.

States varied in the criteria they used to award QBPs. In some states, CCBHCs could qualify for the QBP during DY1 simply by reporting the quality measures. Several states assessed performance on the quality measures during the first six months of the demonstration and used that information to set improvement goals for the remainder of DY1. Some states decided to weight some measures more heavily than others. As of Spring 2019, Missouri and Nevada had assessed CCBHC performance relative to the QBP program standards, and, in both states, all CCBHCs met the criteria. Officials from the other five states with QBPs reported that they were still receiving or analyzing data to finalize determinations of QBPs.

C. Future Evaluation Activities

In Summer 2020, we will update this report to include findings from the DY1 quality measures and DY2 cost reports. That report will provide updated information for the evaluation questions described in this report. In addition, we plan to address a number of additional evaluation questions related to changes in rates, costs, and cost components over time. We will also examine if states' changes to rates resulted in closer alignment with actual costs.

We are in the process of obtaining Medicaid claims and encounter data from states to examine the impacts of CCBHC services on hospitalization rates, emergency department service utilization, and ambulatory care relative to within-state comparison groups (Medicaid beneficiaries with similar diagnostic and demographic characteristics who did not receive care from CCBHCs). Depending on the availability of data within each state, we expect that the impact analyses will use approximately four years of Medicaid claims/encounter data (up to a two-year pre-demonstration period and a two-year post-implementation period). We will report these findings in our final report in May 2021, along with updated findings that draw on both years of CCBHC cost reports and quality measures.

I. BACKGROUND

A. Description of the Certified Community Behavioral Health Clinic (CCBHC) Demonstration

Section 223 of the Protecting Access to Medicare Act (PAMA), enacted in April 2014, authorized the Certified Community Behavioral Health Clinic (CCBHC) demonstration to allow states to test new strategies for delivering and reimbursing services provided in community mental health centers (CMHCs). The demonstration aims to improve the availability, quality, and outcomes of ambulatory services provided in CMHCs by establishing a standard definition and criteria for CCBHCs and developing a new payment system that accounts for the total cost of providing comprehensive services to all individuals who seek care. The demonstration also aims to provide coordinated care that addresses both behavioral and physical health conditions.

In October 2015, the U.S. Department of Health and Human Services (HHS) awarded planning grants to 24 states to begin certifying CMHCs to become CCBHCs, develop new prospective payment systems (PPS), and plan for the demonstration's implementation. To support the demonstration's first phase, HHS developed criteria (as required by PAMA) for certifying CCBHCs in six important areas: (1) staffing; (2) availability and accessibility of services; (3) care coordination; (4) scope of services; (5) quality and reporting; and (6) organizational authority. The criteria established a minimum threshold for the structures and processes that CCBHCs should have to provide high-quality care, although states may exercise some discretion in implementing the criteria to reflect their particular needs.

States used the planning grants to select a PPS model, develop PPS rates, and develop the infrastructure to support the demonstration. The HHS Centers for Medicare & Medicaid Services (CMS) developed two PPS models that participating states could implement. The first model (PPS-1) is a daily rate, similar to the PPS model used by Federally Qualified Health Centers. PPS-1 pays CCBHCs a fixed amount for each day that a Medicaid beneficiary receives CCBHC services. The payment is the same regardless of the type or volume of services the beneficiary receives on that day. States that adopted the PPS-1 model also had the option of including a quality bonus payment (QBP) mechanism--a payment above the standard PPS rate based on performance on quality measures.

The second model (PPS-2) is a monthly rate that pays a fixed amount to the CCBHC for each month in which a beneficiary receives CCBHC services. The payment is the same regardless of the number of visits the beneficiary makes in a month or the types or volume of services they receive. The PPS-2 model has multiple rate levels--a standard rate and separate monthly rates for special populations defined by state-specified clinical conditions. CMS required that states implementing the PPS-2 model include a QBP mechanism, and an outlier payment mechanism (a supplemental payment to cover extremely high cost consumers). However, CMS allowed states flexibility to design the criteria and payment amounts for their QBP mechanisms and the thresholds and amounts for their outlier payments.

Both PPS models are "cost-based," meaning that the rates are intended to cover the actual costs of operating the CCBHC to provide the scope of services required in the certification criteria. The cost-based rate gives clinics the flexibility to structure their services and financial management systems in a way that enables them to provide the full scope of services without having to bill for each of these services individually. CMS requires that CCBHCs participating in the demonstration submit annual cost reports with details of their total operating costs. In addition, participating CCBHCs and states must submit to HHS performance data for a core set of quality measures specified in the criteria. States could also elect to require CCBHCs to submit additional quality measures.

In December 2016, HHS selected eight states to participate in the demonstration from among the 24 states that received planning grants. As required by PAMA, HHS selected the states based on the ability of their CCBHCs to: (1) provide the complete scope of services described in the certification criteria; and (2) improve the availability of, access to, and engagement with a range of services (including assisted outpatient treatment). HHS selected Minnesota, Missouri, Nevada, New Jersey, New York, Oklahoma, Oregon, and Pennsylvania to participate in the demonstration. As summarized in Table I.1, 66 CCBHCs are participating across the eight states; only two states elected the PPS-2 model. As of October 2019, the demonstration will end on November 21, 2019.

TABLE I.1. Number of CCBHCs, Demonstration Start Dates, and PPS Model

| State | Number of CCBHCs | Demonstration Start Date | PPS |
|---|---|---|---|
| Minnesota | 6 | July 1, 2017 | PPS-1[b] |
| Missouri | 15 | July 1, 2017 | PPS-1[b] |
| Nevada | 3[a] | July 1, 2017 | PPS-1[b] |
| New Jersey | 7 | July 1, 2017 | PPS-2 |
| New York | 13 | July 1, 2017 | PPS-1[b] |
| Oklahoma | 3 | April 1, 2017 | PPS-2 |
| Oregon | 12 | April 1, 2017 | PPS-1 |
| Pennsylvania | 7 | July 1, 2017 | PPS-1[b] |

SOURCE: Mathematica/RAND review of CCBHC demonstration applications and telephone consultations with state officials.

NOTES: As of October 2019, the demonstration ends in all states on November 21, 2019.

The participating CCBHCs must provide coordinated care and offer a comprehensive range of nine types of services to all who seek help, including but not limited to those with serious mental illness (SMI), serious emotional disturbance (SED), and substance use disorder (SUD). Services must be person and family-centered, trauma-informed, and recovery-oriented, and the integration of physical and behavioral health care must serve the "whole person." To ensure the availability of the full scope of these services, CCBHCs can partner with Designated Collaborating Organizations (DCOs) to provide selected services. DCOs are entities not under the direct supervision of a CCBHC but are engaged in a formal relationship with a CCBHC and provide services under the same requirements. CCBHCs that engage DCOs maintain clinical responsibility for services provided by a DCO to CCBHC consumers, and the CCBHC provides payment to the DCO.

B. Goals of the National Evaluation

In September 2016, the HHS Office of the Assistant Secretary for Planning and Evaluation (ASPE) contracted with Mathematica and its subcontractor, the RAND Corporation, to conduct a comprehensive national evaluation of the CCBHC demonstration. ASPE is overseeing the evaluation in collaboration with CMS.

Working with these federal partners, Mathematica and RAND designed a mixed-methods evaluation to examine the implementation and outcomes of the demonstration and to provide information for HHS to include in its reports to Congress. Specifically, Section 223 of PAMA mandates that HHS submit annual reports to Congress that include: (1) an assessment of access to community-based mental health services under Medicaid in the area or areas of a state targeted by a demonstration program as compared to other areas of the state; (2) an assessment of the quality and scope of services provided by CCBHCs as compared to community-based mental health services provided in states not participating in a demonstration program and in areas of a demonstration state not participating in the demonstration; and (3) an assessment of the impact of the demonstration on the federal and state costs of a full range of mental health services (including inpatient, emergency, and ambulatory services). To date, the evaluation has focused on providing critical information to Congress and the larger behavioral health community about the strategies that CCBHCs employ to improve care. As more data become available, the evaluation will describe the effects of the demonstration on consumer outcomes and costs.

Purpose of report. This report describes the costs during the first demonstration year (DY1) and the experiences of states and CCBHCs reporting the required quality measures. The payment system and required reporting of quality measures are integral to the CCBHC model and innovative in the context of community-based mental health services. Historically, Medicaid has reimbursed these providers using negotiated fee-for-service or managed care rates tied to specific services. In contrast, the PPS provides a fixed payment for every day (in the case of PPS-1) or every month (in the case of PPS-2) that a patient has at least one service. These payments do not change based on the amount of services a patient receives on a given day or within a month, with the exception of outlier payments in the PPS-2 mechanism, described below.

Analyses of Medicaid payments to CMHCs prior to the CCBHC demonstration found that these payments were in most cases below the costs of providing care, and that the new PPS rates for CCBHCs would likely be higher than historical Medicaid payments for mental health services.[10] States set the PPS rates based on the actual costs of providing care using a relatively simple formula. Specifically, states divided the total cost of providing care by the number of anticipated visit-days (in the case of PPS-1) or visit-months (in the case of PPS-2). States relied on historical cost data to set the PPS rates but they also had to make assumptions about the number of visits and costs for the full scope of services required by the CCBHC criteria (most CCBHCs added services to meet the demonstration requirements, and therefore did not have historical information on costs for every type of CCBHC service). This uncertainty with respect to the costs of care for CCBHCs, coupled with uncertainty about the number of visits that consumers would make, led to considerable uncertainty about how well the rates would match the actual costs incurred during the demonstration.

Finally, in all states except New York, CCBHCs did not submit cost reports prior to the demonstration. This reporting requirement introduced a more detailed and sophisticated level of accounting to clinics. CCBHCs' experiences collecting and reporting the cost reports can inform future efforts to apply cost-reporting requirements to CMHCs.

The demonstration also requires that states and CCBHCs report a standard set of quality measures. Given that the adoption of electronic health records (EHRs) and other health information technology (HIT) has been slower among behavioral health providers than other sectors of the health care system (in part, because these providers did not historically receive the same incentives as medical providers to adopt such technologies), the evaluation examined how CCBHCs made changes to their EHR/HIT systems to facilitate reporting the required quality measures and how both CCBHCs and states used performance on those measures to improve care and make QBPs to CCBHCs.

This report answers the following evaluation questions:

- How did the states initially establish the CCBHC rates? What were the DY1 rates?

- To what extent did CCBHCs succeed in collecting and reporting information requested in the cost-reporting templates?

- What were the total costs and main cost components in CCBHCs per visit or per month in DY1?

- How did anticipated costs per visit or per month compare with actual costs incurred in DY1?

- Did states change the second demonstration year (DY2) rates based on the experience of DY1?

- To what extent do states and CCBHCs expect to succeed in collecting and reporting data on the quality measures according to the prescribed specifications?

- How have CCBHCs and states used their performance on the quality measures to improve the care they provide?

- What measures and thresholds did states use to trigger QBPs in DY1? How much funding did states set aside for QBPs?

We will update this report in Summer 2020. The updated report will include an analysis of the performance of the CCBHCs on the required quality measures during DY1 and an analysis of cost reports from DY2. The final evaluation report will include an analysis of the impact of the demonstration on health care utilization and quality of care using Medicaid claims data.

II. DATA SOURCES AND METHODS

Mathematica and RAND collected and analyzed the following data for this report: (1) interviews with state officials; (2) progress reports obtained from CCBHCs; (3) site visits to CCBHCs; (4) state reports of CCBHC PPS rates; and (5) CCBHC DY1 Cost Reports. This chapter describes these data sources and our analytic methods.

A. Interviews with State Officials

We conducted three rounds of telephone interviews with state behavioral health and Medicaid officials involved in leading implementation of the demonstration in each state. We conducted the first and second rounds of interviews at two points in DY1--September to October 2017 and February to March 2018, respectively. We conducted the third round toward the end of DY2--February to April 2019.

The first round of interview questions gathered information about early implementation, decisions made during the demonstration planning phase, early successes and challenges in fulfilling the certification requirements and following the data collection and monitoring procedures, and anticipated challenges or barriers to successful implementation. The second round of interviews gathered information on interim successes and challenges since the time of the initial interview, success in implementing demonstration cost-reporting procedures and quality measures, and early experiences with the PPS systems and QBPs (if applicable). The third round of interviews collected information on the same categories covered in the second round of interviews, with an emphasis on any changes in implementation successes and challenges experienced in DY2.

Mathematica and RAND conducted a total of 29 interviews (ten during each of the first two rounds, and nine in the third). In the first two rounds of interviews, behavioral health and Medicaid officials in six states participated in the interviews together to reduce scheduling burden and provide comprehensive answers; we conducted two separate interviews with behavioral health and Medicaid officials in two states. During the final round of interviews, officials in one state elected to conduct separate interviews for each group of state officials--one with behavioral health officials and one with Medicaid officials. Each interview lasted approximately 60 minutes.

Two researchers conducted each interview, with one leading the interview and one taking notes. We asked interviewees' permission to audio record the discussions to ensure the accuracy and completeness of interview notes. Following the interviews, we organized the interview information into categories defined by our evaluation questions. We summarized interviewees' responses for each state and then identified cross-state themes in the findings.

B. CCBHC Progress Reports

In Spring 2018 (DY1) and Spring 2019 (DY2), CCBHCs submitted online progress reports to Mathematica that gathered information about their staffing, training, accessibility of services, scope of services, HIT capabilities, care coordination activities, and relationships with other providers. The questions in the Spring 2019 progress report were almost identical to those in the 2018 progress report, with a few minor changes to reduce burden for CCBHC respondents and update the referenced timeframes. We collaborated with the demonstration lead in each state to conduct outreach to clinic leadership via phone and email before and during the collection of the progress reports to encourage their participation and answer any questions. All 66 CCBHCs completed the progress reports for 2018 and 2019--a 100 percent response rate. Findings in this report draw on both the 2018 and 2019 progress reports.

C. Site Visits

In February and March of 2019 (DY2), we conducted site visits to clinics in four demonstration states (Missouri, Oklahoma, Oregon, and Pennsylvania). In collaboration with our federal partners, we used information from the CCBHC progress reports described above and examined transcripts from the first and second round of interviews with state officials to select two CCBHCs within each state (three in Pennsylvania) to visit. We selected the final group of clinics to be diverse in terms of the following characteristics: urban-rural designation; location and proximity to other CCBHCs; size and number of CCBHC service locations; implementation of intensive team-based supports, Assertive Community Treatment, and Medication-Assisted Treatment; and any innovative engagement strategies or mobile/community-based supports.

During the site visits, we conducted in-depth discussions with clinic administrators and front-line clinical staff about how care has changed following implementation of the demonstration. Interview topics included: successes and barriers related to CCBHC staffing, steps clinics have taken to improve access to care and expand their scope of services, the CCBHCs' experience with payments and the PPS, and quality and other reporting practices. We asked interviewees' permission to audio record the discussions to facilitate our analysis. Following the interviews, we organized the interview information into categories defined by the CCBHC certification criteria, and the research team reviewed these data to identify cross-site themes.

D. State Reports of PPS Rates

Mathematica and RAND asked state officials to provide the rates that they paid each of the CCBHCs. For PPS-1 states, we report the average, median and range of rates across CCBHCs. For PPS-2 states, for which there are separate rates for each population category, we calculated a blended rate by weighting the category specific rates by the actual distribution of consumers across the rate categories (based on information contained in the cost reports, described below). We used Pearson correlation coefficients to examine the relationship between clinic characteristics, such as clinic size or rural versus urban location, and the rates. The evaluation team did not have access to the cost reports that CCBHCs completed during the rate-setting process. As a result, information in this report about the rate-setting process was based on interviews with state officials.
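The blended-rate and correlation calculations described above can be illustrated with a minimal sketch. It assumes the weights are simple consumer counts per rate category; the function names, category labels, and dollar figures are hypothetical and are not taken from the cost reports.

```python
from math import sqrt


def blended_rate(category_rates, consumer_counts):
    """Average the category-specific rates, weighted by consumers per category."""
    total_consumers = sum(consumer_counts[c] for c in category_rates)
    if total_consumers <= 0:
        raise ValueError("at least one rate category must have consumers")
    weighted_sum = sum(category_rates[c] * consumer_counts[c] for c in category_rates)
    return weighted_sum / total_consumers


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of equal length with at least 2 values")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    ss_x = sum((x - mean_x) ** 2 for x in xs)
    ss_y = sum((y - mean_y) ** 2 for y in ys)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("correlation is undefined when a sequence is constant")
    return cov / sqrt(ss_x * ss_y)


# Hypothetical PPS-2 clinic: monthly rates and consumer counts by rate category.
rates = {"standard": 600.0, "special_population": 900.0}
consumers = {"standard": 300, "special_population": 100}
print(blended_rate(rates, consumers))  # 675.0
```

In this illustration, three-quarters of consumers fall in the standard category, so the blended rate sits closer to the standard rate than to the special population rate.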

E. CCBHC DY1 Cost Reports

We obtained data on CCBHC costs during DY1 from the standardized cost reports that states were required to submit to CMS during the first half of 2019. States submitted the cost reports to CMS for all 66 CCBHCs in Excel format, and we obtained them from CMS and conducted the analysis using Excel. We reviewed the cost reports and communicated with state officials to obtain clarifying information as needed.

The cost reports include information on clinic operating costs and the total number of clinic visit-days (PPS-1) or visit-months (PPS-2) that occurred during the DY. Visit-days are unique days on which a consumer received at least one service, and visit-months are months in which a consumer received at least one service. The reports include all visit-days or visit-months for all consumers, not only visits covered under Medicaid or the PPS. The operating costs include both direct costs, such as labor and medical supplies, and indirect costs, such as rent payments.

Although clinics used the same CMS-provided form to report cost information, there were some differences across clinics and across states in the reporting. These differences required harmonization by the evaluation team for purposes of comparison. New Jersey's cost reports covered an earlier time period (the year prior to the demonstration) than the cost reports from the other demonstration states. We applied the Medicare Economic Index (MEI) to the cost data to adjust for the time difference. Missouri cost reports were split into two reports: one reporting costs for specialized services and the other reporting costs for comprehensive services. We followed the procedure used by the state to combine information from the two reports to calculate a single cost estimate.
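The report does not spell out how the MEI adjustment was applied. One common way to use a price index for this purpose, shown here only as a sketch under that assumption, is to scale costs by the ratio of the index value in the target period to the index value in the period the costs were reported for. The function name and the index values are hypothetical; they are not published MEI figures.

```python
def adjust_to_period(cost, index_source_period, index_target_period):
    """Scale a cost by the ratio of target-period to source-period index values."""
    if index_source_period <= 0 or index_target_period <= 0:
        raise ValueError("index values must be positive")
    return cost * index_target_period / index_source_period


# Hypothetical values only; these are not published MEI figures.
adjusted = adjust_to_period(
    1_000_000.0, index_source_period=100.0, index_target_period=102.0
)
print(adjusted)  # 1020000.0
```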

We conducted several types of analyses using the cost report data:

- Total cost per visit-day or visit-month calculations and cost component analyses. We used the cost reports to calculate the total costs per visit-day or visit-month for each clinic, depending on whether the clinic was in a state with a PPS-1 or PPS-2 system. We calculated cost per visit-day or visit-month by dividing the total costs reported for the DY1 period by the total number of visit-days or visit-months. In addition, we used the detailed cost breakdowns provided in the reports to compare the CCBHCs with respect to the proportions of their total costs devoted to various cost components, including staff types. We calculated each cost component (for example, direct, indirect) as a share of total allowable costs. We also examined DCO costs because the DCO mechanism is a unique feature of the CCBHC model meant to allow CCBHCs flexibility to contract out some services within the PPS mechanism. DCO costs could cover a wide range of services, depending on the role the DCO played in the CCBHC of which it was a part.

- Labor costs. We examined labor costs in greater detail because they are the largest cost component. We developed staffing categories to facilitate consistent comparisons across the clinics and states despite variability in the original staff classifications.

- Costs versus rates. We compared DY1 costs with the PPS rates (as reported by the states). We report this comparison as the percentage by which the rates were higher or lower than the costs.
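The three calculations listed above reduce to simple arithmetic. The sketch below shows one way to express them; the function names and all dollar and visit figures are hypothetical and chosen only to keep the arithmetic easy to follow.

```python
def cost_per_visit(total_costs, visits):
    """Total DY costs divided by total visit-days (PPS-1) or visit-months (PPS-2)."""
    if visits <= 0:
        raise ValueError("visits must be positive")
    return total_costs / visits


def component_shares(component_costs):
    """Each cost component as a share of the total across all components."""
    total = sum(component_costs.values())
    if total <= 0:
        raise ValueError("total costs must be positive")
    return {name: cost / total for name, cost in component_costs.items()}


def rate_vs_cost_pct(rate, unit_cost):
    """Percentage by which the rate is above (+) or below (-) the unit cost."""
    if unit_cost <= 0:
        raise ValueError("unit cost must be positive")
    return (rate - unit_cost) * 100 / unit_cost


# Hypothetical PPS-1 clinic.
components = {"direct": 1_800_000.0, "indirect": 600_000.0}
unit_cost = cost_per_visit(sum(components.values()), visits=10_000)
print(unit_cost)                           # 240.0
print(component_shares(components))        # {'direct': 0.75, 'indirect': 0.25}
print(rate_vs_cost_pct(264.0, unit_cost))  # 10.0
```

A positive percentage means the clinic was paid at a rate above its cost per visit-day or visit-month; a negative percentage means the rate fell short of that cost.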

While interpreting the cost report information, we found some limitations of the data. Some states used different methods to allocate and present direct and indirect costs, and in some cases the costs cover different time periods. This is most evident in the classification of staffing, for which we devised a classification system to enable comparisons across CCBHCs and states. We did not independently audit the cost reports for accuracy. Nonetheless, these cost reports are the first source of information available on the financial administration of CCBHCs and they provide insight into the model's functioning.

III. CERTIFIED COMMUNITY BEHAVIORAL HEALTH CLINIC PAYMENT RATES AND COSTS OF CARE

This chapter describes the PPS, rates, and costs of CCBHCs, drawing on data from interviews with state officials and the DY1 cost reports. We first describe the PPS rates and how they varied across CCBHCs within and across states. We then present the actual DY1 costs and the major cost components. Finally, we summarize DY1 PPS rates relative to actual DY1 costs.

A. How did States Establish the CCBHC Rates? What Were the DY1 Rates?

Establishing the rates. States set the PPS rates for each CCBHC by dividing projected total allowable costs by the projected number of visit-days (for PPS-1) or visit-months (for PPS-2). In the case of PPS-2, states used the same formula to set rates for each of the special populations defined by the state's rate schedule. Although the formula for calculating the rates is simple, the rate calculation requires accurate data for calculating the allowable costs and number of visit-days or visit-months. According to state officials, collecting these data prior to the beginning of the demonstration was a challenge for states and the clinics that were to become CCBHCs.

To set the rates, states collected data on clinics' historical operating costs using a cost report template provided by CMS. In New York, the clinics have historically been required to submit detailed, audited cost reports to the state--the CMS rate-setting form was filled in using information from these reports. However, clinics in the seven other states participating in the demonstration did not have experience completing these types of cost-reporting forms or reporting their operating costs. In these states, state officials reported that collecting this information was a major challenge for clinics. Several states provided technical support to the clinics, such as funding for accounting consultations, to improve their cost-reporting capabilities. States conducted desk reviews of the cost reports submitted by clinics to ensure accuracy.

In addition to the information from the cost reports on clinics' historical operating costs, the rate-setting process also required information on changes to those costs that were anticipated due to the implementation of the CCBHC certification criteria. Since the clinics would be broadening their scope of services to meet the criteria, they would generally be increasing their total operating costs. However, because there was a lack of historical data on the actual costs of providing the enhanced scope of services, the additional costs had to be estimated. To estimate the additional costs, CCBHCs applied market rates for additional staff, spending on training or infrastructure, and other anticipated costs approved by the states.

Clinics were also required to estimate the number of visit-days or visit-months they would have over the course of DY1. While the clinics had historical information on patterns of service utilization, they may not have collected information on visit-days or visit-months prior to the demonstration. In addition, as CCBHCs, they planned to change their internal organization of care delivery and make extensive efforts to increase access to care. Due to these efforts, they could anticipate that the number of visit-days or visit-months would be quite different during the demonstration than they had been historically. However, the clinics lacked accurate methods for precisely estimating the impact that becoming a CCBHC would have on the number of visit-days or visit-months they would have in a year.

Because states set PPS rates for DY1 by dividing the projected total allowable costs by the projected number of visit-days or visit-months, there are two ways the rates could diverge from the actual visit-day or visit-month costs incurred during DY1. First, the projected total costs of operating the CCBHC could be different from the actual total costs. This could happen, for example, if the CCBHC hired higher or lower salaried staff than anticipated or incorporated services that were more expensive to provide than anticipated. Second, the PPS rates could be different than the actual costs if the actual number of consumer visit-days or visit-months was higher or lower than anticipated. For example, if the clinic increased the number of visit-days or visit-months beyond the expected number, while their total costs remained constant, their actual cost per visit-day or visit-month would be lower than anticipated.
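A small worked example may help make the two sources of divergence concrete. The sketch below sets a rate from projected figures and then compares it with the actual cost per visit-day under two scenarios. All figures and names are hypothetical.

```python
def compare_rate_to_actual(projected_costs, projected_visits, actual_costs, actual_visits):
    """Return the PPS rate, the actual unit cost, and the gap between them."""
    if projected_visits <= 0 or actual_visits <= 0:
        raise ValueError("visit counts must be positive")
    rate = projected_costs / projected_visits
    actual_unit_cost = actual_costs / actual_visits
    return {
        "rate": rate,
        "actual_unit_cost": actual_unit_cost,
        "rate_minus_cost": rate - actual_unit_cost,
    }


# Rate set from hypothetical projections: $3,000,000 over 12,000 visit-days.
# Scenario 1: total costs come in higher than projected; visit-days as projected.
higher_costs = compare_rate_to_actual(3_000_000.0, 12_000, 3_300_000.0, 12_000)

# Scenario 2: total costs as projected; more visit-days than projected.
more_visits = compare_rate_to_actual(3_000_000.0, 12_000, 3_000_000.0, 15_000)

print(higher_costs)  # rate 250.0, actual unit cost 275.0, gap -25.0
print(more_visits)   # rate 250.0, actual unit cost 200.0, gap 50.0
```

In the first scenario the rate falls short of the actual cost per visit-day; in the second, the additional visit-days spread the same total cost more thinly, so the rate exceeds the actual cost.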

The evaluation team did not have access to the data or the calculations used to set the DY1 rates. Therefore, we were unable to identify specific data limitations that may have led to inaccuracy in the rate-setting. However, state officials indicated in our interviews that they were aware of these data limitations and expected the rates to be inaccurate to a certain degree during DY1. For instance, officials in one state noted that the rates would differ from the actual costs because the rates were calculated under the assumption that the CCBHCs would be fully staffed from the beginning of the demonstration. However, the state officials expected that the CCBHCs would require some amount of time to hire staff, and that consequently they would not incur the full amount of anticipated costs. Similarly, staff turnover at a CCBHC during the year could reduce CCBHC costs, since they would not be paying staff costs for positions that were unfilled. If the incurred staffing costs were below projections, then the actual CCBHC operating costs would be lower than anticipated and the CCBHCs would be paid at a rate above their actual costs.

DY1 rates. DY1 rates varied across CCBHCs and states. The average daily rate across the 56 clinics in PPS-1 states was $264 (median rate was $252). Across all states, PPS-1 rates ranged from $151 to $667, a four-fold difference.

As shown in Figure III.1, PPS-1 rates varied across clinics within states, as well as across states. For some states, such as Minnesota and Pennsylvania, rates varied widely across clinics, whereas in other states, such as Missouri and Nevada, the rates varied less across clinics. The state average rates ranged from a low of $197 in Nevada to a high of $379 in Minnesota.

- Across all PPS-1 states, clinics in rural areas had slightly lower average rates ($254) than those in urban areas ($271). This may be due to lower staffing costs or other factors.

- Across all PPS-1 states, clinics with a higher volume of consumer visit-days had lower rates ($229 on average) than clinics with a lower volume of consumer visit-days ($298 on average). Rates may be lower in clinics with more visits due to economies of scale.

- Across all PPS-1 states, clinic rates were positively associated with the proportion of the clinic's total full-time equivalent (FTE) staff that were medical doctor positions (psychiatrists or other medical doctors). The correlation between the proportion of FTE staff that were medical doctors and the rates was 0.4.
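For readers unfamiliar with the statistic, the following Python sketch shows how a correlation between the medical doctor share of FTEs and clinic rates could be computed. It assumes a Pearson correlation coefficient, which the report does not specify, and the five clinic values are hypothetical placeholders rather than data from the cost reports.

```python
import math
from typing import Sequence


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient between two equal-length samples."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two samples of equal length, at least 2 values")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    if spread_x == 0 or spread_y == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return cov / (spread_x * spread_y)


# Hypothetical clinics: share of FTEs that are medical doctors, and daily rate.
md_share = [0.02, 0.05, 0.03, 0.08, 0.04]
daily_rate = [210.0, 260.0, 300.0, 320.0, 230.0]

r = pearson(md_share, daily_rate)
print(f"correlation between MD share of FTEs and rate: {r:.2f}")
```

A value near 0.4, as reported above, indicates a moderate positive association: clinics with a larger share of medical doctor FTEs tend to have higher rates, but with substantial variation.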

| FIGURE III.1. DY1 Visit-Day Rates for PPS-1 Clinics by State |
|---|
| SOURCE: Mathematica and the RAND Corporation analysis of DY1 CCBHC cost reports. |

PPS-2 rates are structurally more complicated than PPS-1 rates. The PPS-2 has multiple rate categories: one rate for the "standard" population and additional rates for special populations (that is, consumers who met criteria for certain conditions expected to have different costs on average). CMS allowed states to define their special populations and associated rates for the demonstration. As shown in Table III.1 and Table III.2, New Jersey and Oklahoma use different definitions for their special populations. Both states included individuals with SMI and SUD as special populations. However, the states differed with respect to other special populations: New Jersey included individuals with post-traumatic stress disorder (PTSD) and SED as special populations, whereas Oklahoma assigned individuals to special populations based on age, homelessness, and the presence of first-episode psychosis. The special population rates were higher on average than the standard population rates, although this pattern did not hold for all CCBHCs (see Appendix A for the rates for each CCBHC). In addition, four CCBHCs in New Jersey and one in Oklahoma applied the same rate to more than one special population. In some cases, this was done when a clinic had zero cases in one of the categories during the year prior to DY1 (the year on which the rates were based). In other cases, clinics averaged costs across two or more categories to arrive at a single rate, due to small sample sizes.

| TABLE III.1. New Jersey Five-Level Classification for PPS-2 Rates | | | | | |
|---|---|---|---|---|---|
| | Standard Population | Special Population 1 | Special Population 2 | Special Population 3 | Special Population 4 |
| Population definition | Individuals who do not have an ICD-9 or ICD-10 diagnosis code corresponding to any of the following special populations | SMI | SUD | PTSD | SED |
| Average rate across clinics | $627 | $748 | $795 | $750 | $724 |
| SOURCE: New Jersey CCBHC Demonstration Application Part 3. NOTE: Standard population: Individuals who do not have an ICD-9 or ICD-10 diagnosis code corresponding to any of the special populations within the reporting period. See Appendix A for special population definitions. The state used the term severe emotional disorder (SED). | | | | | |

To compare the PPS-2 rates within and across states, we calculated a blended rate for each CCBHC using the standard population rate and each of the special population rates. For each clinic, we weighted each population rate by the number of visit-months in that category in DY1 according to the cost reports and then calculated the average for the clinic. We then calculated the average across the clinics to report a state average.
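The blended-rate calculation described above amounts to a visit-month-weighted average within each clinic, followed by a simple average across clinics. The Python sketch below illustrates this under stated assumptions: the function names, population labels, rates, and visit-month counts are all hypothetical and do not come from the cost reports.

```python
from typing import Mapping, Sequence


def blended_rate(rates: Mapping[str, float],
                 visit_months: Mapping[str, int]) -> float:
    """Average of a clinic's population rates, weighted by visit-months."""
    total_visit_months = sum(visit_months[pop] for pop in rates)
    if total_visit_months <= 0:
        raise ValueError("clinic must have at least one visit-month")
    weighted_sum = sum(rates[pop] * visit_months[pop] for pop in rates)
    return weighted_sum / total_visit_months


def state_average(clinic_blended_rates: Sequence[float]) -> float:
    """Unweighted average of blended rates across a state's clinics."""
    if not clinic_blended_rates:
        raise ValueError("need at least one clinic")
    return sum(clinic_blended_rates) / len(clinic_blended_rates)


# Hypothetical clinic A: most visit-months fall in the standard population.
clinic_a = blended_rate(
    rates={"standard": 600.0, "special_1": 800.0},
    visit_months={"standard": 300, "special_1": 100},
)

# Hypothetical clinic B: visit-months split evenly between the two populations.
clinic_b = blended_rate(
    rates={"standard": 700.0, "special_1": 900.0},
    visit_months={"standard": 100, "special_1": 100},
)

print(clinic_a, clinic_b, state_average([clinic_a, clinic_b]))
```

Because the state average is unweighted across clinics, a small clinic influences it as much as a large one; weighting by clinic volume would be a different (and here unused) design choice.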

Across the ten PPS-2 clinics in Oklahoma and New Jersey, the average blended rate was $711, and the median blended rate was $727. The blended rates ranged across CCBHCs from a low of $558 to a high of $902.

| TABLE III.2. Oklahoma Six-Level Classification for PPS-2 Rates | | | | | | |
|---|---|---|---|---|---|---|
| | Standard Population | Special Population 1 | Special Population 2 | Special Population 3 | Special Population 4 | Special Population 5 |
| Population definition | Individuals who are not classified in any of the following special populations | High-risk SMI or co-occurring SUD | High-risk SED or co-occurring condition | Adults with significant SUD | Adolescents with significant SUD | Chronic homelessness or first time psychosis episode for children and adults |
| Average rate across clinics | $636 | $993 | $1,135 | $1,055 | $1,010 | $830 |
| SOURCE: Oklahoma CCBHC Demonstration Application Part 3. NOTE: Standard population: Individuals who are not classified in any of the 5 special populations during the reporting period. See Appendix A for special population definitions. | | | | | | |

As shown in Figure III.2, the average blended rates in New Jersey and Oklahoma were similar to each other, $714 and $704 respectively. The range across clinics in the blended rates was wider in New Jersey than in Oklahoma, which is not surprising given the larger number of CCBHCs in that state. The blended rates were lower on average in clinics with higher numbers of visit-months, similar to the finding with respect to rates and visit-days in the PPS-1 clinics.
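As a quick consistency check, the overall average blended rate across the ten PPS-2 clinics should equal the state averages weighted by the number of clinics in each state. The Python sketch below assumes seven clinics in New Jersey (the count given in the discussion of outlier payments) and therefore three in Oklahoma; the Oklahoma count is inferred from the total of ten rather than stated directly.

```python
# State average blended rates reported in the text.
state_avg_rate = {"New Jersey": 714, "Oklahoma": 704}

# Clinic counts: New Jersey's is reported; Oklahoma's is inferred (10 - 7).
clinic_count = {"New Jersey": 7, "Oklahoma": 3}

total_clinics = sum(clinic_count.values())
overall_avg = sum(
    state_avg_rate[state] * clinic_count[state] for state in state_avg_rate
) / total_clinics

print(f"overall average blended rate: ${overall_avg:.0f}")  # $711
```

The result matches the $711 average reported across all ten PPS-2 clinics.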

Contrary to the pattern in PPS-1 rates, the PPS-2 rates were higher in rural areas than in urban areas. The average blended rate in rural areas was $852 and the average blended rate in urban areas was $676. The lowest blended rate in a rural clinic, $801, was higher than the highest blended rate in an urban clinic, $793. This finding should be interpreted with caution given the very small sample size, which includes only two rural clinics, one in each PPS-2 state. It is unclear if this pattern reflects general conditions in rural versus urban areas or simply the particular conditions of the small number of clinics in the PPS-2 states.

| FIGURE III.2. DY1 Average Blended Visit-Month Rates for PPS-2 Clinics by State |
|---|
| SOURCE: Mathematica and the RAND Corporation analysis of DY1 CCBHC cost reports. NOTES: The figure presents a blended rate for each clinic. The blended rates were calculated by averaging across the population rates, weighting each population rate by the proportion of visit-months to which it was assigned. |

The PPS-2 states also specified outlier payment thresholds for the standard population and each of the special populations distinguished in their rate schedule. Appendix B summarizes the outlier payment thresholds for each state. Unlike the PPS-2 rates, the thresholds applied to all CCBHCs within the state and were not specific to each CCBHC. The thresholds were set at higher levels of cost in Oklahoma than they were in New Jersey.

B. To What Extent did CCBHCs Succeed in Collecting and Reporting Information Requested in the Cost-Reporting Templates?

Cost-reporting was challenging for most CCBHCs. In discussions with state officials and site visits to CCBHCs, we often heard about the challenges of reporting accurate cost information. For example, interviewees from a number of states reported that many clinics--particularly those with limited experience in preparing cost reports--had some initial difficulty in completing cost forms. Of the states participating in the demonstration, only New York had a history of requiring cost reports from specialty mental health clinics. To assist CCBHCs in providing accurate cost information, states reported providing extensive technical assistance to clinic financial and administrative staff. The technical assistance began during the planning year, and, in some cases, continued throughout the demonstration. Some states hired consulting firms to work directly with the CCBHCs on the reports during DY1. State officials in Pennsylvania instituted a "dry run" of the cost reports, which covered the first six months of the demonstration. Having the clinics go through the process of collecting and reporting cost information helped the state identify and address reporting challenges before the first federally mandated cost reports were due.

Interviewees noted that many clinics initially experienced challenges with reporting anticipated costs, due to limited familiarity with PPS and uncertainty over the extent to which staffing and the number of consumers served would change as a result of new services and efforts to increase access to care. During nearly all clinic site visits, financial and reporting staff members also noted challenges in anticipating costs.

Some of the challenges in reporting cost information became clear from our analyses of the data that were reported. In particular, some clinics struggled to accurately report staff costs and FTEs. The cost reports included details on staff types, the salaries and benefits associated with each staff type, and staff work time (as measured in FTEs); however, this information was reported in varying ways and sometimes with significant gaps. Wherever we noticed data omissions, errors, or inconsistent reporting methods, we requested supplemental information from states and clinics via email, and they were highly responsive to our questions. We incorporated what we learned from states into our analyses of the cost reports.

Inconsistencies in the reporting of staff cost information do not affect rate calculations, which are based on total costs. However, they do affect our ability to analyze the cost distribution by staff type in a consistent fashion across states and CCBHCs. Below, we detail some examples of specific reporting challenges identified through our analysis of the cost reports:

- All states except Missouri reported staff categories that CMS had pre-populated in the Excel cost report workbook. One clinic in Minnesota and one in Oregon also submitted their own unique staff categories and did not report cost data for any of the staff categories in the CMS cost report template.

- Several clinics did not report any FTEs, or omitted FTE data for certain staff categories. However, all but one CCBHC supplied full information on FTEs after we requested it.

- Some clinics included anticipated FTEs in their cost reports and some did not.

- Consultant FTEs were included in FTE totals by some CCBHCs and excluded by others. We requested this information when it was omitted and added it to our calculations.

- Some clinics excluded a portion of salary costs for staff when salaries were paid in part by other entities.

C. What were the Total Costs and Main Cost Components in CCBHCs on a Per Visit-Day or Per Visit-Month Basis (depending on the PPS model)?

Total costs. Across all PPS-1 clinics, the average DY1 visit-day cost was $234, and clinic-level costs ranged from $132 to $639. Figure III.3 shows the distribution of visit-day costs across clinics and the average visit-day cost for each state. The state average visit-day cost ranged from $167 in Nevada to $336 in Minnesota. For some states, such as Minnesota and Oregon, the visit-day costs varied widely across CCBHCs within the state, while in others, such as Missouri and New York, the costs were tightly clustered around the mean value.

| FIGURE III.3. DY1 Daily Per Visit Costs for PPS-1 Clinics by State |
|---|
| SOURCE: Mathematica and the RAND Corporation analysis of DY1 CCBHC cost reports. |

| FIGURE III.4. DY1 Blended Cost Per Visit-Month for PPS-2 Clinics by State |
|---|
| SOURCE: Mathematica and the RAND Corporation analysis of DY1 CCBHC cost reports. |

Across all PPS-2 clinics, the blended visit-month costs averaged $759 and ranged from $443 to $2,043. Figure III.4 shows the distribution of visit-month costs across clinics and the average visit-month costs for each state. The state average visit-month cost was $679 in Oklahoma and $793 in New Jersey. Oklahoma had fewer clinics, and their range was tighter than that observed in New Jersey.

We did not include outlier payments in the calculation of the visit-month costs reported above. None of the clinics in Oklahoma reported any outlier payments. Of the seven clinics in New Jersey, five reported receiving outlier payments, and the number of outlier payments ranged from 37 to 1,522. Appendix B provides information about outlier payments.

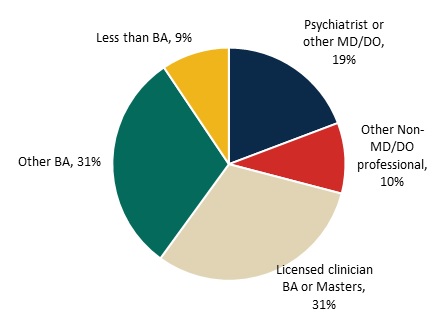

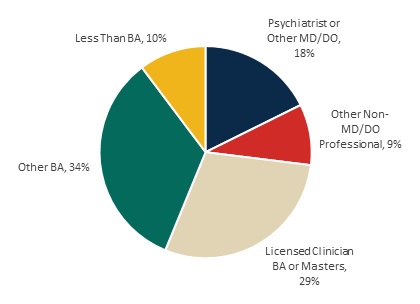

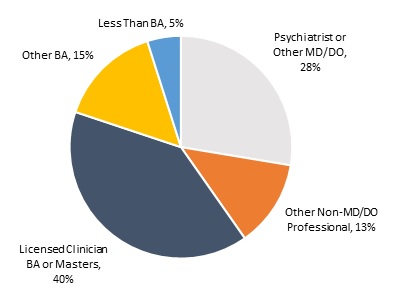

Major components of CCBHC costs. Direct labor costs accounted for 65 percent of the total allowable costs for CCBHCs (Figure III.5). This proportion is similar to the proportion reported for outpatient care centers in the Census Bureau's Service Annual Survey. Outpatient care centers include specialty mental health clinics, such as CMHCs, as well as general medical facilities, such as primary care offices. According to that survey, labor costs accounted for 68 percent of total outpatient care center costs in 2016.[11] Among CCBHCs, indirect costs accounted for 23 percent of costs, and other direct costs accounted for 11 percent of costs.